The Complete Guide to Data Matching: Techniques, Tools, and Best Practices

Data matching is the process of comparing records across one or more datasets to identify entries that refer to the same real-world entity, such as a person, organization, product, or location. It is also known as record linkage or entity resolution, though each term carries slightly different technical connotations. Data matching uses deterministic rules, probabilistic scoring, fuzzy string algorithms, and (increasingly) machine learning models to connect records even when identifiers differ, fields are incomplete, or formatting is inconsistent.

For marketing teams cleaning suppression lists or consolidating multiple mailing files, our list matching software guide covers the merge-purge workflow end-to-end.

Location records require their own parsing, standardization, and postal-validation stack — covered in detail in our address matching software guide.

For enterprises, effective data matching is the difference between operating on fragmented, contradictory information and building a single, trusted view of customers, patients, suppliers, or citizens. According to Gartner, poor data quality costs organizations an average of $12.9 million per year, and unresolved duplicates are one of the primary drivers of that cost. This guide covers every core data matching technique, walks through the end-to-end matching process, evaluates tool selection criteria, and provides industry-specific implementation guidance.

Why Does Data Matching Matter for Enterprises?

Every enterprise accumulates records across dozens of systems: CRMs, ERPs, billing platforms, marketing automation tools, legacy databases, and third-party data feeds. According to a 2024 MuleSoft survey, the average enterprise now runs over 900 applications. Each system stores its own version of the same customers, products, and transactions, creating fragmentation that compounds over time.

The business consequences are direct and measurable. Duplicate customer records inflate marketing spend by 15–25% through redundant outreach (Experian Data Quality). Unmatched patient records in healthcare create safety risks: a 2023 AHIMA study found that 8–12% of records in a typical hospital system are duplicates, contributing to medication errors and redundant testing. In financial services, unresolved entity records weaken KYC/AML compliance and expose institutions to regulatory penalties.

Data matching solves these problems by identifying which records across systems refer to the same real-world entity, then providing the foundation for deduplication, data consolidation, and master data management (MDM). Without it, every downstream process (analytics, reporting, AI/ML training, compliance) operates on unreliable data.

"Matched 1.8 million records across three systems with under 2% false positives. Finally have a single source of truth we actually trust."

— Robert Tanaka, Director of Data Operations, Summit Financial Group

1.8M records matched across three systems

What Are the Core Data Matching Techniques?

Data matching techniques fall into four primary categories, each suited to different data conditions and accuracy requirements. Most enterprise implementations use a hybrid approach, applying deterministic matching first for high-confidence pairs and then probabilistic or ML-based methods for ambiguous cases.

Deterministic (Exact) Matching

Deterministic matching compares records field by field against exact rules. If two records share the same Social Security Number, email address, or account ID, they are declared a match. This method is fast, transparent, and highly precise when unique identifiers exist. Its limitation is obvious: it fails when identifiers are missing, misspelled, or formatted differently across systems.
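A minimal sketch of an exact-match rule, assuming records are plain dictionaries with hypothetical `ssn` and `email` fields. The normalization helpers are illustrative and not taken from any particular product; they show why light formatting cleanup is usually paired with exact comparison.

```python
import re

def normalize_email(value):
    """Lowercase and trim an email; return None when the field is empty."""
    if not value:
        return None
    return value.strip().lower()

def normalize_ssn(value):
    """Keep digits only; return None unless exactly nine digits remain."""
    if not value:
        return None
    digits = re.sub(r"\D", "", value)
    return digits if len(digits) == 9 else None

def deterministic_match(a, b):
    """Declare a match when any populated identifier agrees exactly."""
    for field, normalize in (("ssn", normalize_ssn), ("email", normalize_email)):
        left, right = normalize(a.get(field)), normalize(b.get(field))
        if left is not None and left == right:
            return True
    return False

# Hypothetical sample records
rec_a = {"ssn": "123-45-6789", "email": "Pat.Lee@example.com"}
rec_b = {"ssn": "123456789", "email": None}
rec_c = {"ssn": None, "email": "pat.lee@example.org"}

print(deterministic_match(rec_a, rec_b))  # SSNs agree once formatting is removed
print(deterministic_match(rec_a, rec_c))  # no shared identifier, so no match
```

Note that `rec_b` and `rec_c` can never match under this rule because they have no populated identifier in common, which is the limitation described above.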

A regional bank processing 4M customer records found that deterministic matching on SSN and email resolved only 62% of true duplicates because 38% of records lacked one or both identifiers. Adding probabilistic scoring to the remaining unmatched records raised resolution to 94%.

Probabilistic Matching

Probabilistic matching, rooted in the Fellegi-Sunter model developed in 1969, assigns weights to each field comparison based on how much agreement (or disagreement) on that field shifts the probability that two records are a true match. Fields with high discriminating power (like date of birth) receive higher weights; fields with low discriminating power (like gender) receive lower weights. The combined score determines whether a pair is a match, non-match, or requires manual review.

This technique handles missing data and field-level inconsistencies far better than deterministic methods. It is the standard approach in healthcare patient matching (EMPI systems), government record linkage, and large-scale customer deduplication projects.
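The weighting idea can be sketched as follows. In the Fellegi-Sunter framework each field has an m-probability (chance the field agrees when two records truly match) and a u-probability (chance it agrees when they do not). Agreement contributes log2(m/u) and disagreement contributes log2((1-m)/(1-u)). The m and u values below are hypothetical, chosen only to illustrate the contrast between date of birth and gender; in practice they are estimated from data.

```python
import math

# Hypothetical m/u probabilities, for illustration only.
# m = P(field agrees | records are a true match)
# u = P(field agrees | records are not a match)
FIELD_PARAMS = {
    "date_of_birth": {"m": 0.95, "u": 0.01},
    "last_name": {"m": 0.90, "u": 0.05},
    "gender": {"m": 0.98, "u": 0.50},
}

def field_weight(m, u, agrees):
    """Log-likelihood-ratio weight for one field comparison."""
    if agrees:
        return math.log2(m / u)
    return math.log2((1 - m) / (1 - u))

def match_score(a, b):
    """Sum field weights; missing values contribute no evidence either way."""
    total = 0.0
    for field, p in FIELD_PARAMS.items():
        left, right = a.get(field), b.get(field)
        if left is None or right is None:
            continue
        total += field_weight(p["m"], p["u"], left == right)
    return total

rec_a = {"date_of_birth": "1980-02-14", "last_name": "garcia", "gender": "F"}
rec_b = {"date_of_birth": "1980-02-14", "last_name": "garcia", "gender": None}
rec_c = {"date_of_birth": "1975-07-01", "last_name": "okafor", "gender": "M"}

print(round(match_score(rec_a, rec_b), 2))  # positive: strong evidence of a match
print(round(match_score(rec_a, rec_c), 2))  # negative: evidence against a match
```

Agreement on date of birth adds far more to the score than agreement on gender because its u-probability is much lower, which is what "discriminating power" means in practice.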

Fuzzy Matching

Fuzzy matching applies string similarity algorithms to identify records that are close but not identical. Common algorithms include Levenshtein distance (edit distance), Jaro-Winkler similarity (optimized for short strings like names), Soundex and Metaphone (phonetic matching), and cosine similarity for token-based comparisons.

For matching person records across spelling variations, nicknames, and cultural naming conventions, see our deep dive on fuzzy name matching software.

Fuzzy matching excels at catching typographical errors, transliteration differences, and address formatting inconsistencies. Nickname variations ("Robert" vs. "Bob") share too few characters for string similarity alone to catch reliably, so they are usually handled by pairing fuzzy matching with a nickname lookup table. The accuracy of fuzzy matching depends heavily on the similarity threshold chosen: set it too low and you generate false positives; set it too high and you miss true matches. Enterprise fuzzy matching software typically provides tunable thresholds with test-and-learn workflows to optimize this balance.
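As an illustration of how a similarity threshold behaves, here is a small pure-Python sketch of Levenshtein distance turned into a 0-to-1 similarity. The 0.85 threshold is an assumption for the example, not a recommended setting.

```python
def levenshtein(a, b):
    """Edit distance computed with a two-row dynamic programming table."""
    if len(a) < len(b):
        a, b = b, a
    previous = list(range(len(b) + 1))
    for i, ch_a in enumerate(a, start=1):
        current = [i]
        for j, ch_b in enumerate(b, start=1):
            cost = 0 if ch_a == ch_b else 1
            current.append(min(
                previous[j] + 1,         # deletion
                current[j - 1] + 1,      # insertion
                previous[j - 1] + cost,  # substitution
            ))
        previous = current
    return previous[-1]

def similarity(a, b):
    """Normalize edit distance to a similarity between 0.0 and 1.0."""
    a, b = a.strip().lower(), b.strip().lower()
    longest = max(len(a), len(b))
    if longest == 0:
        return 1.0
    return 1.0 - levenshtein(a, b) / longest

def is_fuzzy_match(a, b, threshold=0.85):
    """Hypothetical threshold; tune it against labeled data."""
    return similarity(a, b) >= threshold

print(is_fuzzy_match("Jonathan", "Jonathon"))  # one substitution in eight characters
print(is_fuzzy_match("Robert", "Bob"))         # low character overlap
```

Lowering the threshold admits more pairs (and more false positives); raising it does the opposite.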

MatchLogic's fuzzy matching engine maps name variations, typos, and format inconsistencies across records, showing similarity scores for every potential match.

Machine Learning-Based Matching

ML-based matching trains classification models on labeled pairs of records (match vs. non-match) to learn complex patterns that rule-based methods miss. These models can incorporate dozens of features simultaneously: string similarities, numerical distances, pattern recognition, and even behavioral signals. Once trained, they score new record pairs with a probability estimate.

The trade-off is explainability. While ML models often achieve the highest accuracy (F1 scores above 0.97 in some benchmarks), their decision logic is harder to audit than deterministic or probabilistic rules. For regulated industries where match decisions must be auditable (healthcare, financial services, government), a hybrid approach that uses ML for candidate scoring and rule-based logic for final classification often provides the best balance.
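To make the idea of training on labeled pairs concrete, the sketch below fits a tiny logistic regression in pure Python. Everything here is an assumption for illustration: the three features (name similarity, date-of-birth agreement, ZIP agreement), the eight labeled pairs, and the training settings. A production system would more likely use an established ML library and far more training data.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train(features, labels, epochs=2000, lr=0.5):
    """Full-batch gradient descent on the logistic loss."""
    weights = [0.0] * len(features[0])
    bias = 0.0
    n = len(features)
    for _ in range(epochs):
        grad_w = [0.0] * len(weights)
        grad_b = 0.0
        for x, y in zip(features, labels):
            error = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias) - y
            for k, xi in enumerate(x):
                grad_w[k] += error * xi
            grad_b += error
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias

# Hypothetical labeled pairs: [name_similarity, dob_agrees, zip_agrees] -> 1 = match
X = [
    [0.95, 1, 1], [0.88, 1, 0], [0.92, 0, 1], [0.97, 1, 1],
    [0.40, 0, 0], [0.55, 0, 1], [0.30, 1, 0], [0.20, 0, 0],
]
y = [1, 1, 1, 1, 0, 0, 0, 0]

weights, bias = train(X, y)

def match_probability(x):
    """Score a new candidate pair with the trained model."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)

print(match_probability([0.93, 1, 1]))  # resembles the labeled matches
print(match_probability([0.25, 0, 0]))  # resembles the labeled non-matches
```

A linear model like this one remains fairly easy to audit because each weight can be inspected; the explainability trade-off becomes sharper with more complex models.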

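Comparison: Data Matching Techniques

Deterministic: exact rules on unique identifiers; fast, transparent, and highly precise, but fails when identifiers are missing, misspelled, or formatted differently.

Probabilistic: weighted field agreement based on the Fellegi-Sunter model; handles missing data and field-level inconsistencies, and routes ambiguous pairs to manual review.

Fuzzy: string similarity algorithms such as Levenshtein, Jaro-Winkler, Soundex, and Metaphone; catches typos and formatting differences, with accuracy that depends on the similarity threshold.

ML-Based: classification models trained on labeled pairs; often the highest accuracy, but harder to audit than rule-based methods.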

How Does the Data Matching Process Work?

Regardless of which technique you use, the data matching pipeline follows a consistent six-stage process. Skipping or rushing any stage degrades the accuracy of the final output.

Step 1: Data Preparation and Profiling

Before matching begins, source data must be profiled for completeness, consistency, and format. Data profiling reveals the percentage of null values per field, format inconsistencies (date formats, phone number patterns), and outliers that could distort match scores.
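A profiling pass of this kind can be sketched in a few lines. The example below assumes records are dictionaries with hypothetical `phone` and `dob` fields; it reports the share of null values per field and the distribution of format patterns (digits shown as 9, letters as A).

```python
import re
from collections import Counter

def is_missing(value):
    return value is None or str(value).strip() == ""

def null_rates(records, fields):
    """Share of null or blank values per field, from 0.0 to 1.0."""
    total = len(records)
    if total == 0:
        return {f: 0.0 for f in fields}
    return {f: sum(is_missing(r.get(f)) for r in records) / total for f in fields}

def format_pattern(value):
    """Collapse a value to its shape, e.g. '555-0100' becomes '999-9999'."""
    pattern = re.sub(r"[A-Za-z]", "A", value)
    return re.sub(r"\d", "9", pattern)

def pattern_counts(records, field):
    """Count how often each format pattern occurs among populated values."""
    return Counter(
        format_pattern(str(r[field])) for r in records if not is_missing(r.get(field))
    )

# Hypothetical sample data
records = [
    {"phone": "(555) 010-2000", "dob": "1980-02-14"},
    {"phone": "555.010.3000", "dob": "02/14/1980"},
    {"phone": "", "dob": None},
    {"phone": "(555) 010-4000", "dob": "1975-07-01"},
]

print(null_rates(records, ["phone", "dob"]))
print(pattern_counts(records, "phone").most_common())
print(pattern_counts(records, "dob").most_common())
```

Seeing two competing date patterns in the same field is the kind of finding that should drive standardization rules before any matching is configured.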

MatchLogic's profiling engine scans millions of records in seconds, revealing completeness scores, format chaos, and duplicate risk before any matching rules are configured.

Data preparation includes standardization (converting "Street" to "St.", parsing name components into first/middle/last) and cleansing (correcting known errors, and filling nulls whose values can be derived deterministically from other fields). The quality of this stage directly determines matching accuracy. A 2023 DAMA-DMBOK benchmark found that standardizing input data before matching improved F1 scores by 12–18% compared to matching raw data.
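The two standardization steps mentioned above might look like this in code. The suffix table and the name-parsing rules are deliberately simplistic assumptions; real address and name parsing needs far more cases than this sketch covers.

```python
import re

# Hypothetical, deliberately small suffix table
STREET_SUFFIXES = {"STREET": "ST", "AVENUE": "AVE", "ROAD": "RD", "BOULEVARD": "BLVD"}

def standardize_address(text):
    """Uppercase, drop periods and commas, and abbreviate known street suffixes."""
    tokens = re.sub(r"[.,]", " ", text).upper().split()
    return " ".join(STREET_SUFFIXES.get(token, token) for token in tokens)

def parse_name(full_name):
    """Split 'Last, First Middle' or 'First Middle Last' into components."""
    cleaned = full_name.replace(".", "").strip()
    if "," in cleaned:
        last, rest = [part.strip() for part in cleaned.split(",", 1)]
        parts = rest.split()
    else:
        parts = cleaned.split()
        last = parts.pop() if parts else ""
    first = parts[0] if parts else ""
    middle = " ".join(parts[1:])
    return {"first": first.lower(), "middle": middle.lower(), "last": last.lower()}

print(standardize_address("123 Main Street."))
print(standardize_address("123 main st"))
print(parse_name("Garcia, Maria L."))
print(parse_name("Maria L Garcia"))
```

After standardization, both address strings and both name strings compare as equal, so they no longer depend on fuzzy scoring to be matched.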

Step 2: Blocking and Indexing

Comparing every record to every other record is computationally prohibitive at enterprise scale: n records produce n(n-1)/2 unordered pairs, so a dataset of 10 million records would generate roughly 50 trillion pairwise comparisons. Blocking partitions the data into subsets (blocks) that share a common attribute, such as the first three characters of a last name, ZIP code, or year of birth. Comparisons then occur only within blocks, reducing the number of pairs by 99% or more.

The trade-off is that blocking can miss true matches where the blocking key itself contains an error. Multi-pass blocking (running separate blocking passes on different keys) and sorted neighborhood algorithms mitigate this risk. For example, a 500-bed hospital system processing 2 million patient records used three blocking passes (last name + DOB, SSN last four + ZIP, phone number) and achieved 99.7% recall while reducing pairwise comparisons from 2 trillion to 14 million.
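The pair-count arithmetic and the effect of a second blocking pass can be sketched as follows. The records and the two blocking keys (last-name prefix and ZIP code) are hypothetical; record 3 contains a typo in the last name to show how a second pass recovers pairs the first pass misses.

```python
from collections import defaultdict
from itertools import combinations

def total_pairs(n):
    """Number of unordered pairs among n records: n(n-1)/2."""
    return n * (n - 1) // 2

def blocked_pairs(records, key_funcs):
    """Union of candidate pairs produced by each blocking pass."""
    pairs = set()
    for key_func in key_funcs:
        blocks = defaultdict(list)
        for rec_id, rec in records.items():
            key = key_func(rec)
            if key is not None:
                blocks[key].append(rec_id)
        for ids in blocks.values():
            pairs.update(combinations(sorted(ids), 2))
    return pairs

def by_name_prefix(rec):
    return rec["last"][:3].lower() if rec.get("last") else None

def by_zip(rec):
    return rec.get("zip")

# Hypothetical records keyed by ID
records = {
    1: {"last": "Nguyen", "zip": "94105"},
    2: {"last": "Nguyen", "zip": "94105"},
    3: {"last": "Ngyuen", "zip": "94105"},  # typo in the blocking key
    4: {"last": "Okafor", "zip": "10001"},
}

print(total_pairs(10_000_000))  # 49999995000000, roughly 50 trillion
print(sorted(blocked_pairs(records, [by_name_prefix])))
print(sorted(blocked_pairs(records, [by_name_prefix, by_zip])))
```

With the name-prefix pass alone, record 3 is never compared with records 1 and 2; adding the ZIP pass brings those pairs back while still skipping most of the six possible comparisons.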

Step 3: Candidate Pair Generation

Within each block, the system generates candidate pairs for detailed comparison. Efficient pair generation algorithms (such as sorted neighborhood with a sliding window) further reduce the comparison space while maintaining high recall. The output of this stage is a list of record pairs that will proceed to scoring.
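A sliding-window version of sorted neighborhood can be sketched like this. The sort key (last name) and the window size of 3 are assumptions for the example; each record is paired only with the next `window - 1` records in sorted order.

```python
def sorted_neighborhood_pairs(records, sort_key, window=3):
    """Pair each record with the records that follow it inside the window."""
    ordered = sorted(records, key=sort_key)
    pairs = []
    for i, rec in enumerate(ordered):
        for other in ordered[i + 1:i + window]:
            pairs.append((rec["id"], other["id"]))
    return pairs

# Hypothetical records
records = [
    {"id": 1, "last": "smith"},
    {"id": 2, "last": "smyth"},
    {"id": 3, "last": "smithe"},
    {"id": 4, "last": "jones"},
    {"id": 5, "last": "johnson"},
]

pairs = sorted_neighborhood_pairs(records, sort_key=lambda r: r["last"], window=3)
print(pairs)
print(len(pairs), "candidate pairs instead of", len(records) * (len(records) - 1) // 2)
```

Similar spellings sort close together, so "smith" and "smyth" still land in the same window, while distant records such as "johnson" and "smyth" are never compared.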

Step 4: Comparison and Scoring

Each candidate pair is compared across multiple fields using the selected matching technique. Deterministic comparisons yield binary results (match or no match). Probabilistic and fuzzy comparisons produce similarity scores per field, which are combined into an overall match score. The comparison functions must be chosen to match the data type: Jaro-Winkler for names, normalized edit distance for addresses, exact comparison for dates, and phonetic encoding for fields prone to transliteration.
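The sketch below shows one way to dispatch a comparison function per field and combine the results. Two assumptions to flag: Python's standard-library `difflib.SequenceMatcher` stands in for Jaro-Winkler and normalized edit distance (it is a different similarity measure, used here only to keep the example dependency-free), and the field weights are hypothetical.

```python
from difflib import SequenceMatcher

def text_similarity(a, b):
    """Stand-in string comparator returning a value between 0.0 and 1.0."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()

def exact(a, b):
    return 1.0 if a == b else 0.0

# field -> (comparison function, hypothetical weight); weights sum to 1.0
COMPARATORS = {
    "name": (text_similarity, 0.4),
    "address": (text_similarity, 0.3),
    "dob": (exact, 0.3),
}

def compare_pair(a, b):
    """Return per-field scores and the weighted overall score."""
    field_scores = {f: fn(a[f], b[f]) for f, (fn, _) in COMPARATORS.items()}
    overall = sum(field_scores[f] * w for f, (_, w) in COMPARATORS.items())
    return field_scores, overall

rec_a = {"name": "Maria Garcia", "address": "12 Oak St", "dob": "1980-02-14"}
rec_b = {"name": "Maria Garcai", "address": "12 Oak St", "dob": "1980-02-15"}

scores, overall = compare_pair(rec_a, rec_b)
print(scores)
print(round(overall, 3))
```

Keeping the per-field scores alongside the overall score makes it possible to explain later why a pair was classified the way it was.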

MatchLogic displays match results with per-field confidence scores, showing exactly which algorithms fired and why each pair was classified as a match.

Step 5: Classification and Manual Review

Based on the combined score, each pair is classified as a match, non-match, or "possible match" requiring human review. Setting the thresholds for these classifications is critical. A threshold that is too low generates false positives (merging records that should remain separate); a threshold that is too high creates false negatives (missing true duplicates). Enterprise data matching tools provide test-and-learn environments where analysts can adjust thresholds against labeled validation sets before running production matches.

The manual review queue should be sized to the team's capacity. Best practice is to target a review queue of 1–3% of total candidate pairs. If the queue exceeds 5%, the matching rules or blocking strategy need tuning.
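The three-way classification and the review-queue check described above can be expressed as a short sketch. The thresholds (0.70 and 0.90) and the score distribution are hypothetical; the 5% ceiling comes from the guidance in the text.

```python
def classify(score, lower=0.70, upper=0.90):
    """Three-way decision: match, review, or non-match."""
    if score >= upper:
        return "match"
    if score >= lower:
        return "review"
    return "non-match"

def review_share(scores, lower=0.70, upper=0.90):
    """Fraction of candidate pairs that land in the manual review queue."""
    if not scores:
        return 0.0
    in_review = sum(1 for s in scores if classify(s, lower, upper) == "review")
    return in_review / len(scores)

# Hypothetical overall scores for 100 candidate pairs
scores = [0.95] * 10 + [0.80] * 2 + [0.30] * 88

share = review_share(scores)
print(f"review queue: {share:.1%}")
if share > 0.05:
    print("Queue exceeds 5%: revisit matching rules or blocking strategy")
```

Widening the gap between the two thresholds grows the review queue; narrowing it pushes more decisions onto the system.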

Step 6: Merging and Survivorship

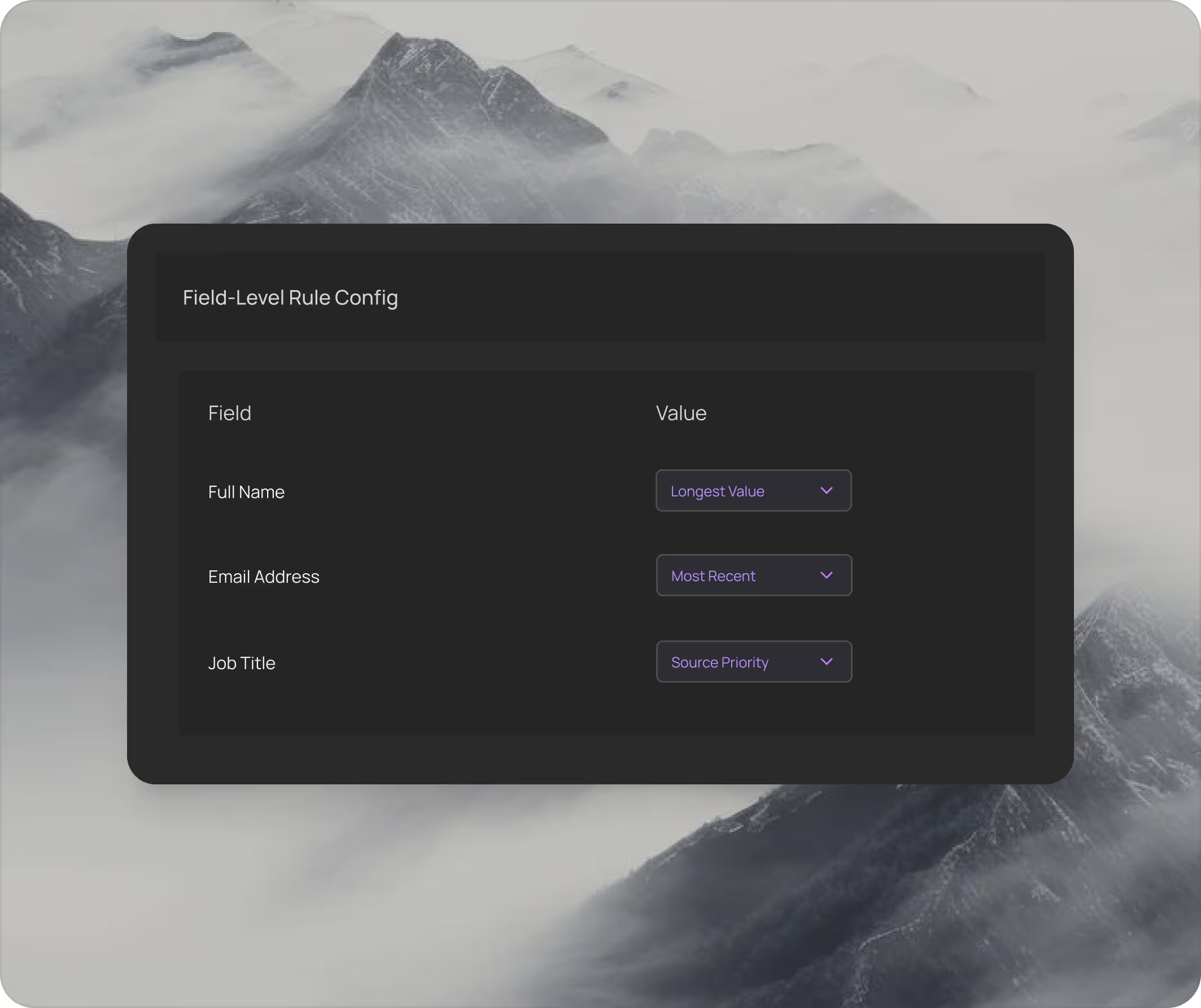

Once matches are confirmed, the system must determine which field values "survive" into the merged golden record. Survivorship rules define the logic: most recent value wins, most complete value wins, source system priority, or field-by-field custom rules. For merge-purge operations, survivorship is particularly important because incorrect merges are expensive to reverse.
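Field-level survivorship can be sketched as a mapping from field name to rule function. The records, the source names, and the source priority order below are all hypothetical; the three rules mirror the options listed above (longest value as a proxy for most complete, most recent, and source priority).

```python
from datetime import date

SOURCE_PRIORITY = ["crm", "billing", "web"]  # hypothetical, most trusted first

def longest_value(candidates, field):
    return max(candidates, key=lambda r: len(r[field]))[field]

def most_recent_value(candidates, field):
    return max(candidates, key=lambda r: r["updated"])[field]

def source_priority_value(candidates, field):
    return min(candidates, key=lambda r: SOURCE_PRIORITY.index(r["source"]))[field]

def build_golden_record(records, rules):
    """Apply one survivorship rule per field, ignoring empty values."""
    golden = {}
    for field, rule in rules.items():
        candidates = [r for r in records if r.get(field)]
        if candidates:
            golden[field] = rule(candidates, field)
    return golden

# Hypothetical matched cluster of three source records
cluster = [
    {"source": "crm", "updated": date(2023, 1, 10),
     "name": "Dana Whitfield", "phone": "555-0100", "address": ""},
    {"source": "billing", "updated": date(2024, 3, 5),
     "name": "Dana R. Whitfield", "phone": "555-0199", "address": "12 Oak St"},
    {"source": "web", "updated": date(2022, 6, 1),
     "name": "D. Whitfield", "phone": None, "address": "12 Oak Street, Apt 4"},
]

RULES = {
    "name": longest_value,
    "phone": most_recent_value,
    "address": source_priority_value,
}

print(build_golden_record(cluster, RULES))
```

Because the CRM record has an empty address, the source-priority rule falls through to the next most trusted source that actually has a value.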

MatchLogic's survivorship engine lets you configure field-level merge rules: names use the longest value, dates use the most recent, addresses use the most complete source.

$2.1M: average savings from duplicate payment prevention

<8 sec: to match 1 million records

30%: average hidden duplicates found in first scan

How Should You Evaluate Data Matching Tools?

The data matching software market includes enterprise platforms (Informatica, IBM, SAS, MatchLogic), specialized matching tools, and open-source libraries. When evaluating options, focus on these criteria.

Algorithm Flexibility

Scalability

Deployment Model

Auditability

Integration

Standardization Built-in

MatchLogic provides profiling, cleansing, matching, and merge-purge in a single platform, eliminating the need to stitch together separate tools for each stage of the data quality pipeline.

Where Is Data Matching Used Across Industries?

Healthcare: Patient Matching and EMPI

Hospitals and health systems use data matching to build Enterprise Master Patient Indexes (EMPIs) that link patient records across facilities, EHR systems, and insurance claims databases. According to a 2023 Pew Charitable Trusts study, the national patient misidentification rate ranges from 8% to 12%, contributing to an estimated $6 billion in unnecessary costs annually through duplicate tests, delayed diagnoses, and billing errors.

A 500-bed hospital system processing 2 million patient records deployed probabilistic matching with three blocking passes (name + DOB, SSN fragment + ZIP, phone number) and reduced its duplicate rate from 11.2% to 0.8% within 90 days. The system runs on-premise, which keeps all patient data inside the hospital's secured network and simplifies compliance with the HIPAA Security Rule.

Financial Services: KYC, AML, and Fraud Detection

Banks and financial institutions use data matching for Know Your Customer (KYC) onboarding, Anti-Money Laundering (AML) screening, and fraud detection. Matching customer records against sanctions lists (OFAC, EU Sanctions) and politically exposed persons (PEP) databases requires high recall: missing a true match carries severe regulatory penalties. A Tier 2 bank processing 15 million customer records against OFAC and PEP lists used a combination of exact matching on identifiers and fuzzy matching on names, reducing false negatives by 34% while cutting false positives (and the associated manual review cost) by 22%.

"As part of the journey we've gone through with MatchLogic, we're becoming more data-first, moving from assumption to assurance around data quality."

— Daniel Hughes, VP of Analytics, Finverse Bank

Retail and E-Commerce: Customer 360

Retailers use data matching to merge customer records from point-of-sale systems, e-commerce platforms, loyalty programs, and third-party marketing lists into a unified Customer 360 profile. Without matching, the same customer who buys in-store, online, and through a mobile app appears as three separate individuals, inflating customer counts and fragmenting personalization efforts.

Government: Benefits Administration and Fraud Prevention

Government agencies use record linkage software to link records across departments (tax, benefits, health, housing) to detect fraud, ensure benefits reach eligible recipients, and improve service delivery. A classic example: the U.S. Federal Aviation Administration matched 40,000 Northern California pilot records against Social Security Administration disability payment records. Forty pilots were flagged and arrested for claiming to be both medically fit to fly and disabled enough to collect benefits.

On-Premise vs. Cloud Data Matching: When Does Each Make Sense?

The deployment model for your data matching infrastructure is not a minor architectural detail; it has direct implications for security, compliance, performance, and total cost of ownership.

MatchLogic is built as an on-premise platform precisely because the enterprises that need the most accurate, auditable, and compliant data matching, those in healthcare, financial services, and government, also require that their data never leaves their secured infrastructure. This is not a limitation; it is a deliberate architectural choice that aligns with how regulated enterprises actually operate.

Comparison dimensions: data residency, processing control, latency, auditability, total cost of ownership, and best-fit use cases.

What Are the Best Practices for Enterprise Data Matching?

Start with Data Profiling, Not Matching

The most common mistake in enterprise matching projects is jumping straight to algorithm configuration without first understanding the data. Profile every source dataset to identify completeness rates, format patterns, and duplicate distributions. This step takes days; skipping it costs weeks of rework later.

"First profile revealed 40% missing data and format chaos we never suspected. Helped us fix issues before migration."

— Michael Chen, VP Data Governance, Global Logistics Inc.

40% missing data identified before matching began

Use Multi-Pass Blocking

A single blocking key misses true matches where that key is corrupted. Use at least two, ideally three, independent blocking passes with different keys. Measure recall after each pass to confirm the added pass is catching new true matches.

Establish Ground Truth Early

Before running production matches, manually label a validation set of at least 500 record pairs (250 true matches, 250 true non-matches). Use this set to tune thresholds and measure accuracy. Without ground truth, you are guessing.
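Threshold tuning against a labeled set can be sketched as a simple sweep. The eight labeled pairs and the candidate thresholds are hypothetical and far smaller than the 500-pair set recommended above; the point is only to show the mechanics.

```python
def f1_at_threshold(labeled, threshold):
    """F1 score when every pair scoring at or above the threshold is a match."""
    tp = sum(1 for score, is_match in labeled if score >= threshold and is_match)
    fp = sum(1 for score, is_match in labeled if score >= threshold and not is_match)
    fn = sum(1 for score, is_match in labeled if score < threshold and is_match)
    if tp == 0:
        return 0.0
    return 2 * tp / (2 * tp + fp + fn)

def best_threshold(labeled, candidates):
    """Return the (threshold, f1) pair with the highest F1; ties keep the lowest threshold."""
    return max(((t, f1_at_threshold(labeled, t)) for t in candidates),
               key=lambda item: item[1])

# Hypothetical ground truth: (match score, reviewer says it is a true match)
labeled = [
    (0.96, True), (0.91, True), (0.88, True), (0.82, False),
    (0.79, True), (0.74, False), (0.60, False), (0.45, False),
]

threshold, f1 = best_threshold(labeled, [0.5, 0.6, 0.7, 0.8, 0.9])
print(threshold, round(f1, 3))
```

On real data the sweep would use a finer grid and, ideally, a held-out portion of the labeled set so the chosen threshold is not overfit to the pairs used to select it.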

Measure Precision, Recall, and F1

Precision (what percentage of declared matches are correct) and recall (what percentage of true matches were found) are both critical. The F1 score balances them. For most enterprise use cases, target an F1 score above 0.95. Monitor these metrics over time as data volumes and sources change.
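These three metrics are straightforward to compute once declared matches and ground-truth matches are both available as sets of record-ID pairs. The pairs below are hypothetical.

```python
def evaluate(predicted, actual):
    """Precision, recall, and F1 for sets of (id, id) match pairs."""
    true_positives = len(predicted & actual)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(actual) if actual else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1

# Hypothetical results: 4 declared matches, 5 true matches, 3 in common
predicted = {(1, 2), (3, 4), (5, 6), (7, 8)}
actual = {(1, 2), (3, 4), (5, 6), (9, 10), (11, 12)}

precision, recall, f1 = evaluate(predicted, actual)
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.3f}")
```

Pairs must be stored in a consistent order (for example, smaller ID first) so that the set intersection counts the same pair only once.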

MatchLogic tracks confidence score distributions over time, letting you monitor whether match quality improves or degrades as data sources evolve.

Automate Ongoing Matching

Data matching is not a one-time project. New records enter the system daily. Establish automated matching workflows that run on ingest (real-time or near-real-time) and batch matching on a scheduled cadence (weekly or monthly) to catch drift. The cost of a single matching project is wasted if duplicates re-accumulate within six months.

Document Everything for Compliance

In regulated industries, every match decision must be traceable. Log the matching rules applied, the scores generated, the threshold used, and whether a human reviewer confirmed or overrode the system's recommendation. An audit trail of this kind supports HIPAA audit-control obligations for patient data, SOX Section 404 internal controls over financial data, and the GDPR Article 5 accuracy principle.
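One possible shape for such a log entry is sketched below as a JSON line. The field names are assumptions made for illustration, not a regulatory schema or any product's format; what matters is that rules, scores, threshold, and reviewer action are all captured together.

```python
import json
from datetime import datetime, timezone

def audit_entry(pair, rules, field_scores, score, threshold, decision,
                reviewer=None, reviewer_action=None):
    """Serialize one match decision as a JSON line (hypothetical schema)."""
    entry = {
        "logged_at": datetime.now(timezone.utc).isoformat(),
        "record_ids": list(pair),
        "rules_applied": rules,
        "field_scores": field_scores,
        "overall_score": score,
        "threshold": threshold,
        "system_decision": decision,
        "reviewer": reviewer,
        "reviewer_action": reviewer_action,  # "confirmed", "overridden", or None
    }
    return json.dumps(entry, sort_keys=True)

line = audit_entry(
    pair=("crm-1001", "billing-88"),
    rules=["name_fuzzy", "dob_exact"],
    field_scores={"name": 0.92, "dob": 1.0},
    score=0.84,
    threshold=0.90,
    decision="review",
    reviewer="analyst_7",
    reviewer_action="overridden",
)
print(line)
```

Writing one self-contained line per decision keeps the log append-only and easy to search when a specific merge has to be explained later.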

How Does Data Matching Relate to Entity Resolution and Deduplication?

Data matching, entity resolution, and deduplication are closely related but distinct processes. Data matching is the foundational operation: comparing records to determine similarity. Entity resolution builds on matching by adding clustering (grouping all records that refer to the same entity) and canonicalization (creating a single best-version record for each entity). Data deduplication is a specific application of matching where the goal is to identify and remove or merge duplicate records within a single dataset.

MatchLogic's entity resolution engine builds on matching by clustering related records into unified entity profiles, connecting scattered fragments into a single view.

Think of it as a progression: matching identifies candidate pairs, entity resolution groups those pairs into clusters representing real-world entities, and deduplication applies merge/purge logic to produce clean, non-redundant records. Each step depends on the quality of the step before it.
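The clustering step in that progression can be sketched with a union-find structure that treats matched pairs as edges and groups connected records. The record IDs and pairs are hypothetical, and this is one common approach rather than the only one.

```python
def cluster_matches(record_ids, matched_pairs):
    """Group records into entities by following matched pairs transitively."""
    parent = {r: r for r in record_ids}

    def find(x):
        # Walk to the root, shortening the path along the way
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    for a, b in matched_pairs:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    clusters = {}
    for r in record_ids:
        clusters.setdefault(find(r), []).append(r)
    return sorted(sorted(members) for members in clusters.values())

# Hypothetical record IDs and confirmed matches
record_ids = ["A", "B", "C", "D", "E", "F"]
matched_pairs = [("A", "B"), ("B", "C"), ("D", "E")]

print(cluster_matches(record_ids, matched_pairs))
```

Records A and C end up in the same cluster even though they were never matched directly, which is why a single false-positive pair can merge two unrelated entities and why match quality matters before clustering.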

Building a Data Matching Strategy That Scales

Data matching is not a feature checkbox; it is an ongoing discipline that determines the quality of every downstream data process in your organization. The techniques available (deterministic, probabilistic, fuzzy, and ML-based) each serve specific data conditions, and the most effective enterprise implementations combine multiple approaches in hybrid workflows.

The matching process itself, from profiling through blocking, scoring, classification, and merging, follows well-established stages that have been refined over decades of academic research and enterprise practice. Getting each stage right requires investment in data profiling, ground truth labeling, threshold tuning, and ongoing monitoring.

MatchLogic provides the on-premise infrastructure for enterprises that need to run this entire pipeline within their own secured environment, with full auditability and control over every match decision. Whether your priority is patient safety, regulatory compliance, customer intelligence, or operational efficiency, the foundation is the same: accurate, repeatable, auditable data matching.

"Merge purge eliminated 60,000 duplicate records from our mailing list. Cut direct mail costs by 34% in the first quarter."

— Sarah Caldwell, VP Marketing Operations, Beacon Health Partners

34% cost reduction in first quarter

When the task is connecting records across separately managed systems that share no primary key, our database matching software guide walks through the workflow stage by stage.

Frequently Asked Questions

What is data matching and why do enterprises need it?

Data matching is the process of comparing records across datasets to identify entries that refer to the same real-world entity, such as a customer, patient, or supplier. Enterprises need it because fragmented records across multiple systems (CRM, ERP, billing, marketing) create duplicates that inflate costs, weaken analytics, and create compliance risk. According to Gartner, poor data quality costs organizations an average of $12.9 million per year, and unresolved duplicates are a primary contributor.

What is the difference between deterministic and probabilistic data matching?

Deterministic matching compares fields for exact equality (e.g., matching on SSN or email address) and works well when unique identifiers are present and clean. Probabilistic matching assigns weighted scores to field comparisons and calculates an overall match probability, making it effective when data is incomplete, inconsistent, or lacks unique identifiers. Most enterprise implementations use both: deterministic matching for high-confidence pairs, followed by probabilistic scoring for the remainder.

How accurate is fuzzy matching for enterprise data?

Fuzzy matching accuracy depends on the algorithm chosen, the similarity threshold configured, and the quality of the input data. Common algorithms (Jaro-Winkler, Levenshtein, Soundex) handle name variations, typos, and formatting differences effectively. With proper threshold tuning against a labeled validation set, fuzzy matching typically achieves F1 scores between 0.88 and 0.95. Combining fuzzy matching with probabilistic weighting across multiple fields pushes accuracy higher.

Can data matching run on-premise for regulated industries?

Yes. On-premise data matching platforms process all data within your organization's secured infrastructure, ensuring that sensitive records (patient data, financial records, government identifiers) never leave your network. This helps address data protection and data residency requirements that arise under regimes such as HIPAA, GDPR, and SOX, as well as industry-specific mandates. MatchLogic is built specifically for on-premise deployment in regulated enterprise environments.

How do you measure data matching quality?

Three metrics matter most. Precision measures the percentage of declared matches that are correct (reducing false positives). Recall measures the percentage of true matches that the system found (reducing false negatives). The F1 score is the harmonic mean of precision and recall, providing a single balanced metric. Enterprise benchmarks target an F1 score above 0.95. These metrics should be tracked over time as data sources and volumes evolve.

What is blocking in data matching and why is it necessary?

Blocking partitions records into subsets that share a common attribute (like ZIP code or last name prefix), so the system only compares records within the same block instead of comparing every record to every other record. Without blocking, a dataset of 10 million records would require 50 trillion comparisons. Blocking reduces this by 99%+ while preserving high recall. Multi-pass blocking with different keys mitigates the risk of missing matches where the blocking key itself contains an error.
