Fuzzy Matching Techniques: Algorithms, Scoring, and Real-World Applications

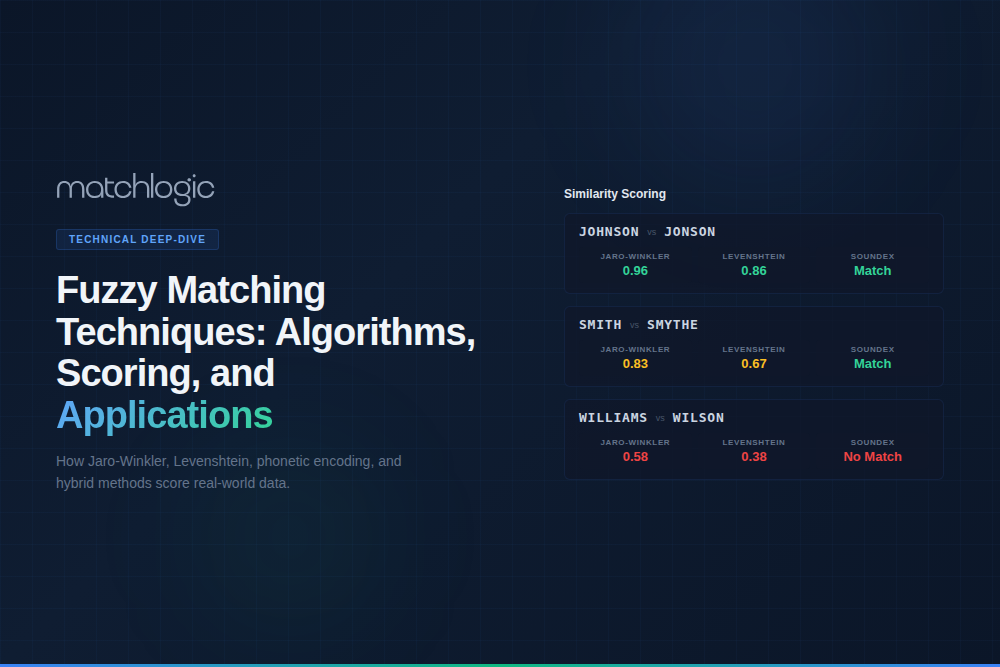

Fuzzy matching techniques are string similarity algorithms that measure how closely two text values resemble each other, producing a numerical score rather than a binary match/no-match result. The five primary categories are edit-distance algorithms (Levenshtein, Damerau-Levenshtein), character-transposition algorithms (Jaro, Jaro-Winkler), phonetic algorithms (Soundex, Metaphone, Double Metaphone), token-based algorithms (Jaccard, cosine similarity, TF-IDF), and hybrid approaches (Monge-Elkan, SoftTF-IDF). Each category is optimized for different data types and error patterns, and enterprise fuzzy matching systems apply multiple techniques in parallel to maximize accuracy.

Choosing the right fuzzy matching technique for each field type is one of the most consequential decisions in a data matching project. Levenshtein distance is a poor fit for short person names because a single transposition costs two edits: "MARTHA" vs. "MARHTA" scores only 0.67 on normalized Levenshtein, while Jaro-Winkler scores the same pair 0.96 because it treats transpositions leniently and rewards the shared prefix "MAR". Conversely, Jaro-Winkler performs poorly on long address strings, where Levenshtein or token-based methods are more appropriate. Nickname variants such as "Robert" vs. "Bob" defeat both algorithms and need a reference dictionary. This guide provides algorithm-by-algorithm breakdowns with scoring examples, a decision framework for field-type assignment, and real-world application scenarios. For the broader matching process, see our data matching guide.

Key Takeaways

- ✓Fuzzy matching techniques fall into five categories: edit-distance, character-transposition, phonetic, token-based, and hybrid algorithms.

- ✓Each algorithm category is optimized for specific data types: Jaro-Winkler for names, Levenshtein for addresses, Soundex for phonetic variants, cosine for company names.

- ✓Applying the wrong algorithm to a field type degrades accuracy: the transposed name "MARTHA" vs. "MARHTA" scores only 0.67 on normalized Levenshtein but 0.96 on Jaro-Winkler.

- ✓Enterprise systems apply multiple algorithms in parallel per comparison, choosing the best technique per field type automatically.

- ✓Threshold tuning is critical: lowering the threshold from 0.90 to 0.85 typically increases recall by 10-15% while increasing false positives by only 2-3%.

- ✓Standardizing data before fuzzy comparison eliminates format noise, converting many fuzzy matches into exact matches.

What Are Edit-Distance Fuzzy Matching Techniques?

Edit-distance algorithms measure how many single-character operations (insertions, deletions, substitutions) are needed to transform one string into another. The fewer the operations, the more similar the strings.

Levenshtein Distance

Levenshtein distance is the most widely known fuzzy matching algorithm. It counts the minimum number of insertions, deletions, and substitutions required to convert one string into another. The distance is typically converted to a 0-1 similarity score as 1 - (distance / length of the longer string).

Scoring example: "MAIN STREET" vs. "MAIN ST" requires 4 deletions (R, E, E, T), producing a raw distance of 4 and a normalized similarity of 0.64. "SMITH" vs. "SMYTH" requires 1 substitution, producing a similarity of 0.80. Levenshtein works well for addresses, product codes, and other fields where character-level edits represent real data quality issues. It performs poorly on names where semantic variants ("Robert" vs. "Bob") have high edit distances.
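The scoring examples above can be reproduced with a short dynamic-programming sketch. The function names are illustrative rather than taken from any particular library, and the normalization assumes the "divide by the longer string" convention described above.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions, and substitutions."""
    # prev[j] holds the distance between the current prefix of a and b[:j]
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]  # turning a[:i] into "" takes i deletions
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # delete ca
                            curr[j - 1] + 1,      # insert cb
                            prev[j - 1] + cost))  # substitute (or keep) ca
        prev = curr
    return prev[-1]


def levenshtein_similarity(a: str, b: str) -> float:
    """Normalize to 0-1 as 1 - distance / length of the longer string."""
    longest = max(len(a), len(b))
    if longest == 0:
        return 1.0  # two empty strings are identical
    return 1.0 - levenshtein(a, b) / longest


print(levenshtein("MAIN STREET", "MAIN ST"))                       # 4
print(round(levenshtein_similarity("MAIN STREET", "MAIN ST"), 2))  # 0.64
print(levenshtein_similarity("SMITH", "SMYTH"))                    # 0.8
```

The two-row table keeps memory proportional to the shorter dimension of the comparison, which matters when the same function is called millions of times inside a matching pipeline.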

Damerau-Levenshtein Distance

Extends Levenshtein by adding transpositions (swapping two adjacent characters) as a single operation instead of two. This better models typing errors, which frequently involve transposed characters. "RECIEVE" vs. "RECEIVE" is distance 1 (one transposition) under Damerau-Levenshtein, versus distance 2 (one deletion + one insertion) under standard Levenshtein.
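A minimal sketch of the transposition rule is shown below. It implements the common "optimal string alignment" (restricted) variant of Damerau-Levenshtein, which is an assumption: the unrestricted variant can score some edge cases lower (for "CA" vs. "ABC", OSA gives 3 while unrestricted Damerau-Levenshtein gives 2). The function name is illustrative.

```python
def osa_distance(a: str, b: str) -> int:
    """Restricted Damerau-Levenshtein (optimal string alignment) distance."""
    rows, cols = len(a) + 1, len(b) + 1
    d = [[0] * cols for _ in range(rows)]
    for i in range(rows):
        d[i][0] = i  # i deletions
    for j in range(cols):
        d[0][j] = j  # j insertions
    for i in range(1, rows):
        for j in range(1, cols):
            cost = 0 if a[i - 1] == b[j - 1] else 1
            d[i][j] = min(d[i - 1][j] + 1,         # deletion
                          d[i][j - 1] + 1,         # insertion
                          d[i - 1][j - 1] + cost)  # substitution
            # Adjacent transposition ("IE" <-> "EI") counts as a single edit
            if i > 1 and j > 1 and a[i - 1] == b[j - 2] and a[i - 2] == b[j - 1]:
                d[i][j] = min(d[i][j], d[i - 2][j - 2] + 1)
    return d[-1][-1]


print(osa_distance("RECIEVE", "RECEIVE"))  # 1
```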

What Are Character-Transposition Fuzzy Matching Techniques?

Jaro Similarity

Jaro similarity measures the proportion of matching characters (characters that appear in both strings within a defined window) and the number of transpositions. It produces scores between 0 (no similarity) and 1 (identical strings). Jaro is particularly effective for short strings because it does not penalize length differences as heavily as edit-distance methods.
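For reference, the standard Jaro formula can be written as follows, where m is the number of matching characters (equal characters no farther apart than the window w) and t is half the number of matched characters that appear in a different order. The worked example uses the MARTHA/MARHTA pair from the Jaro-Winkler scoring example below.

```latex
\operatorname{sim}_J(s_1, s_2) =
\begin{cases}
0 & \text{if } m = 0,\\[4pt]
\dfrac{1}{3}\left(\dfrac{m}{|s_1|} + \dfrac{m}{|s_2|} + \dfrac{m - t}{m}\right) & \text{otherwise,}
\end{cases}
\qquad
w = \left\lfloor \frac{\max(|s_1|, |s_2|)}{2} \right\rfloor - 1

\text{MARTHA vs. MARHTA: } m = 6,\; t = \tfrac{2}{2} = 1,\;
\operatorname{sim}_J = \tfrac{1}{3}\left(\tfrac{6}{6} + \tfrac{6}{6} + \tfrac{5}{6}\right) \approx 0.944
```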

Jaro-Winkler Similarity

Jaro-Winkler extends Jaro by adding a prefix bonus: strings that share a common prefix receive a score boost proportional to the prefix length, with only the first 4 characters counted. This reflects the empirical observation that misspellings and variants tend to share common prefixes. "ROBERT" and "ROBERTO" share a 6-character prefix, which earns the maximum 4-character bonus.
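In its commonly cited form, the adjustment uses a scaling factor p = 0.1 (p should not exceed 0.25, or scores can rise above 1) and a prefix length ℓ capped at 4. Worked through for ROBERT vs. ROBERTO:

```latex
\operatorname{sim}_{JW} = \operatorname{sim}_J + \ell\, p\,(1 - \operatorname{sim}_J),
\qquad \ell = \min(\text{common prefix length}, 4),\quad p = 0.1

\text{ROBERT vs. ROBERTO: } m = 6,\; t = 0,\;
\operatorname{sim}_J = \tfrac{1}{3}\left(\tfrac{6}{6} + \tfrac{6}{7} + \tfrac{6}{6}\right) \approx 0.952,\;
\operatorname{sim}_{JW} = 0.952 + 4(0.1)(1 - 0.952) \approx 0.971
```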

Scoring example: "MARTHA" vs. "MARHTA" (a transposition) scores approximately 0.961 on Jaro-Winkler. "ROBERT" vs. "BOB" scores approximately 0.33 on normalized Levenshtein and 0.50 on Jaro-Winkler, both well below typical match thresholds, confirming that neither algorithm handles nickname variants. Phonetic codes do not help either (Soundex encodes the pair as R163 and B100), so nickname resolution requires reference dictionaries. Enterprise fuzzy name matching tools maintain nickname dictionaries that map "Bob" to "Robert" before algorithmic comparison.
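The Jaro-Winkler scores quoted in this section can be checked with a minimal sketch of the textbook definitions (scaling factor p = 0.1, prefix capped at 4 characters). The function names are illustrative; production libraries may differ in details such as case handling or a minimum-Jaro cutoff before the prefix bonus is applied.

```python
def jaro(s1: str, s2: str) -> float:
    if s1 == s2:
        return 1.0
    len1, len2 = len(s1), len(s2)
    if len1 == 0 or len2 == 0:
        return 0.0
    # Characters only count as matching within this distance of each other
    window = max(max(len1, len2) // 2 - 1, 0)
    matched1, matched2 = [False] * len1, [False] * len2
    m = 0
    for i, ch in enumerate(s1):
        for j in range(max(0, i - window), min(len2, i + window + 1)):
            if not matched2[j] and s2[j] == ch:
                matched1[i] = matched2[j] = True
                m += 1
                break
    if m == 0:
        return 0.0
    # t = half the number of matched characters that appear in a different order
    seq1 = [c for c, flag in zip(s1, matched1) if flag]
    seq2 = [c for c, flag in zip(s2, matched2) if flag]
    t = sum(a != b for a, b in zip(seq1, seq2)) / 2
    return (m / len1 + m / len2 + (m - t) / m) / 3


def jaro_winkler(s1: str, s2: str, p: float = 0.1, max_prefix: int = 4) -> float:
    sim = jaro(s1, s2)
    prefix = 0
    for a, b in zip(s1, s2):
        if a != b or prefix == max_prefix:
            break
        prefix += 1
    # Boost the Jaro score in proportion to the shared prefix (at most 4 chars)
    return sim + prefix * p * (1 - sim)


print(round(jaro_winkler("MARTHA", "MARHTA"), 3))   # 0.961
print(round(jaro_winkler("ROBERT", "ROBERTO"), 3))  # 0.971
print(round(jaro_winkler("ROBERT", "BOB"), 2))      # 0.5
```

Because "ROBERT" and "BOB" share no prefix, the Winkler bonus adds nothing and the score stays at the plain Jaro value.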

What Are Phonetic Fuzzy Matching Techniques?

Soundex

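Soundex encodes a string into a four-character code based on how it sounds in English. The first letter is retained, and subsequent consonants are mapped to digits (B/F/P/V = 1, C/G/J/K/Q/S/X/Z = 2, D/T = 3, L = 4, M/N = 5, R = 6). Vowels, H, W, and Y are dropped, adjacent letters with the same digit are coded once, and the result is padded with zeros or truncated to four characters. "Smith" and "Smyth" both encode to S530. Because the first letter is kept as a letter rather than a digit, "Catherine" (C365) and "Katherine" (K365) do not match, even though they sound identical.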

Soundex is binary: two strings either produce the same code (match) or different codes (no match), with no granular similarity score. This makes it useful as a blocking key (group records by Soundex code before applying finer-grained algorithms) or as a supplementary signal alongside character-based methods. Its main limitation is false positives: distinct names that sound alike, such as "Lee" and "Li", both encode to L000.
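The encoding rules and the blocking-key use case can be sketched together. This follows the American Soundex rules as summarized above (H and W do not separate letters with the same digit; vowels and Y do); the function names are hypothetical, and real implementations differ slightly in edge-case handling.

```python
from collections import defaultdict

# American Soundex digit groups; vowels, Y, H, and W have no digit
_SOUNDEX_CODES = {}
for letters, digit in (("BFPV", "1"), ("CGJKQSXZ", "2"), ("DT", "3"),
                       ("L", "4"), ("MN", "5"), ("R", "6")):
    for ch in letters:
        _SOUNDEX_CODES[ch] = digit


def soundex(name: str) -> str:
    letters = [c for c in name.upper() if c.isalpha()]
    if not letters:
        return ""
    first = letters[0]
    code = [first]                        # the first letter is kept as a letter
    prev = _SOUNDEX_CODES.get(first, "")  # so "Pfister" does not code F again
    for ch in letters[1:]:
        if ch in "HW":
            continue                      # H and W do not separate repeated digits
        digit = _SOUNDEX_CODES.get(ch, "")
        if digit and digit != prev:
            code.append(digit)
            if len(code) == 4:
                break
        prev = digit                      # a vowel or Y resets prev, so a repeat after it is kept
    return "".join(code).ljust(4, "0")


def soundex_blocks(names):
    """Group names by Soundex code; only names in the same block are compared further."""
    blocks = defaultdict(list)
    for name in names:
        blocks[soundex(name)].append(name)
    return dict(blocks)


print(soundex("Smith"), soundex("Smyth"))          # S530 S530
print(soundex("Catherine"), soundex("Katherine"))  # C365 K365
print(soundex_blocks(["Smith", "Smyth", "Lee", "Li", "Jones"]))
# {'S530': ['Smith', 'Smyth'], 'L000': ['Lee', 'Li'], 'J520': ['Jones']}
```

The blocking output shows both sides of the trade-off: "Smith"/"Smyth" land together as intended, "Lee"/"Li" land together as a potential false positive, and the Catherine/Katherine pair would be split across blocks.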

Metaphone and Double Metaphone

Metaphone improves on Soundex by using more sophisticated phonetic rules that account for English pronunciation patterns (silent letters, consonant clusters, vowel sounds). Double Metaphone extends this further by generating two encodings per string: a primary and an alternate. This captures cases where a name has two plausible pronunciations ("Schmidt" can sound like "Shmit" or "Smith" depending on the speaker).

Double Metaphone is widely used as the default phonetic algorithm in enterprise fuzzy matching tools because it handles a broader range of name origins (Germanic, Slavic, Romance, Asian-transliterated) than Soundex. It is still most effective for English-language data; multilingual matching requires language-specific phonetic models.

What Are Token-Based Fuzzy Matching Techniques?

Jaccard Similarity

Jaccard similarity measures the overlap between two sets of tokens (words or n-grams). The score is the size of the intersection divided by the size of the union. "John Robert Smith" and "Robert Smith John" have a Jaccard similarity of 1.0 because they contain the same token set, despite different ordering. This makes Jaccard ideal for fields where word order varies (company names, addresses with reordered components).
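A minimal token-set Jaccard sketch, assuming lowercase whitespace tokenization (real pipelines usually also strip punctuation and legal suffixes first):

```python
def jaccard(a: str, b: str) -> float:
    """|intersection| / |union| of the two strings' lowercase word sets."""
    tokens_a, tokens_b = set(a.lower().split()), set(b.lower().split())
    if not tokens_a and not tokens_b:
        return 1.0  # treat two empty values as identical
    return len(tokens_a & tokens_b) / len(tokens_a | tokens_b)


print(jaccard("John Robert Smith", "Robert Smith John"))      # 1.0
print(round(jaccard("Acme Holdings Inc", "Acme Inc"), 3))     # 0.667
```

The second example shows the flip side of set overlap: one extra word ("Holdings") removes a third of the score, regardless of how informative that word is.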

Cosine Similarity

Cosine similarity treats strings as vectors (using token frequency counts or TF-IDF weights) and measures the cosine of the angle between them. Like Jaccard, it is insensitive to word order, but unlike Jaccard, it accounts for token frequency. "IBM Corp" vs. "International Business Machines Corporation" scores poorly on character-based methods but can achieve high cosine similarity when token-level comparison is used with an abbreviation expansion dictionary.
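The effect of an abbreviation expansion dictionary on cosine similarity can be sketched with raw term counts. The `ABBREVIATIONS` table is hypothetical and deliberately tiny, and this sketch uses plain counts rather than TF-IDF weights.

```python
import math
from collections import Counter

# Hypothetical abbreviation dictionary; real deployments maintain much larger ones
ABBREVIATIONS = {
    "ibm": "international business machines",
    "corp": "corporation",
    "inc": "incorporated",
}


def token_counts(text: str, expand: bool) -> Counter:
    words = text.lower().replace(".", " ").split()
    if expand:
        # Replace each abbreviation with its expansion, then re-split into words
        words = " ".join(ABBREVIATIONS.get(w, w) for w in words).split()
    return Counter(words)


def cosine(a: str, b: str, expand: bool = True) -> float:
    va, vb = token_counts(a, expand), token_counts(b, expand)
    dot = sum(va[t] * vb[t] for t in va)  # Counter returns 0 for missing tokens
    norm = (math.sqrt(sum(c * c for c in va.values()))
            * math.sqrt(sum(c * c for c in vb.values())))
    return dot / norm if norm else 0.0


name_a, name_b = "IBM Corp", "International Business Machines Corporation"
print(cosine(name_a, name_b, expand=False))  # 0.0
print(round(cosine(name_a, name_b), 6))      # 1.0
```

Swapping the counts for TF-IDF weights would down-weight common tokens such as "corporation", which is why TF-IDF is usually preferred for company names.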

N-Gram Similarity

N-gram methods break strings into overlapping character sequences of length n (bigrams for n=2, trigrams for n=3) and measure the overlap. "NIGHT" produces bigrams [NI, IG, GH, HT] and "NACHT" produces [NA, AC, CH, HT]. Only one of the seven distinct bigrams, "HT", is shared, so the similarity score is low (1/7 ≈ 0.14 as a Jaccard score), reflecting that these strings are quite different despite a shared ending. N-gram similarity is useful as a catch-all fuzzy comparison when the nature of variations is unpredictable.
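A minimal bigram sketch, assuming Jaccard overlap of the n-gram sets as the similarity measure (Dice coefficient is a common alternative and would score NIGHT/NACHT at 0.25):

```python
def ngrams(text: str, n: int = 2) -> set:
    text = text.upper()
    return {text[i:i + n] for i in range(len(text) - n + 1)}


def ngram_similarity(a: str, b: str, n: int = 2) -> float:
    grams_a, grams_b = ngrams(a, n), ngrams(b, n)
    union = grams_a | grams_b
    if not union:
        # Both strings are shorter than n; fall back to a direct comparison
        return 1.0 if a.upper() == b.upper() else 0.0
    return len(grams_a & grams_b) / len(union)


print(sorted(ngrams("NIGHT")))                       # ['GH', 'HT', 'IG', 'NI']
print(round(ngram_similarity("NIGHT", "NACHT"), 3))  # 0.143
```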

Which Fuzzy Matching Technique Should You Use for Each Field Type?

| Field Type | Primary Algorithm | Secondary Algorithm | Why This Combination |
|---|---|---|---|
| Person Names | Jaro-Winkler | Double Metaphone | Catches typos + pronunciation variants. |
| Addresses | Levenshtein | Token-based / Cosine | Character edits + word reordering. |
| Company Names | Cosine / TF-IDF | Jaccard | Abbreviations, suffixes, word order. |
| Phone Numbers | Exact after normalization | Levenshtein (low threshold) | Normalization resolves most; Levenshtein catches digit errors. |
| Emails | Exact on domain; Levenshtein on local | None needed | Highly structured; domain must match. |
| Product Codes | Levenshtein (very low threshold) | N-gram | Minimal variation tolerated; higher edits = different product. |
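One way to encode per-field assignments like those in the table is a field-to-comparator map. This sketch is hypothetical: the field names, function names, and the choice to score phones with Levenshtein over normalized digits are assumptions, and it covers only the phone, email, and product-code rows. Names, addresses, and company names would plug in Jaro-Winkler, Levenshtein, or token-based comparators the same way.

```python
import re


def levenshtein(a: str, b: str) -> int:
    """Classic dynamic-programming edit distance (insert, delete, substitute)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + (ca != cb)))
        prev = curr
    return prev[-1]


def lev_sim(a: str, b: str) -> float:
    longest = max(len(a), len(b))
    return 1.0 if longest == 0 else 1.0 - levenshtein(a, b) / longest


def compare_phone(a: str, b: str) -> float:
    # Normalize to digits only: identical numbers then score exactly 1.0,
    # and a single mistyped digit still scores high
    return lev_sim(re.sub(r"\D", "", a), re.sub(r"\D", "", b))


def compare_email(a: str, b: str) -> float:
    local_a, _, domain_a = a.lower().partition("@")
    local_b, _, domain_b = b.lower().partition("@")
    if domain_a != domain_b:
        return 0.0  # the domain must match exactly
    return lev_sim(local_a, local_b)  # tolerate small edits in the local part


# Hypothetical field-to-comparator assignment (field names are illustrative)
FIELD_COMPARATORS = {
    "phone": compare_phone,
    "email": compare_email,
    "product_code": lambda a, b: lev_sim(a.upper(), b.upper()),
}


def compare_records(rec_a: dict, rec_b: dict) -> dict:
    """Score every configured field that is present in both records."""
    return {
        field: fn(rec_a[field], rec_b[field])
        for field, fn in FIELD_COMPARATORS.items()
        if field in rec_a and field in rec_b
    }


print(compare_records(
    {"phone": "(555) 123-4567", "email": "j.smith@acme.com"},
    {"phone": "555.123.4567", "email": "jsmith@acme.com"},
))
```

In this example the phone scores 1.0 once formatting is stripped, and the email scores 6/7 (about 0.86) because the local parts differ by a single character; each per-field score would then be compared against that field's own threshold.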

How Do You Tune Fuzzy Matching Thresholds?

The similarity threshold determines the boundary between matches and non-matches. Setting it requires balancing precision (avoiding false positives) against recall (catching true matches). There is no universal correct threshold; it depends on your data quality, match volume, and tolerance for manual review.

Best practice: create a labeled validation set of at least 500 record pairs (250 known matches, 250 known non-matches). Run fuzzy matching at multiple thresholds (0.80, 0.85, 0.90, 0.95) and measure precision and recall at each level. Plot the precision-recall curve to find the threshold that maximizes F1 score for your specific data. MatchLogic's threshold tuning interface shows this curve in real time, letting you adjust thresholds and see the immediate impact on match quality.
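The threshold sweep can be sketched in a few lines. The `toy_pairs` data below is invented purely to exercise the code and is far smaller than the 500-pair validation set recommended above; the function names are hypothetical.

```python
def evaluate(pairs, threshold):
    """pairs: (similarity_score, is_true_match) tuples from a labeled validation set."""
    tp = sum(1 for score, label in pairs if score >= threshold and label)
    fp = sum(1 for score, label in pairs if score >= threshold and not label)
    fn = sum(1 for score, label in pairs if score < threshold and label)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def best_threshold(pairs, thresholds=(0.80, 0.85, 0.90, 0.95)):
    """Pick the candidate threshold with the highest F1 score."""
    return max(thresholds, key=lambda t: evaluate(pairs, t)[2])


# Toy labeled pairs for illustration only
toy_pairs = [(0.97, True), (0.93, True), (0.89, True), (0.87, True),
             (0.84, False), (0.82, False), (0.81, True), (0.70, False)]

for t in (0.80, 0.85, 0.90, 0.95):
    p, r, f1 = evaluate(toy_pairs, t)
    print(f"threshold={t:.2f} precision={p:.2f} recall={r:.2f} F1={f1:.2f}")
print(best_threshold(toy_pairs))  # 0.85
```

On this toy data, 0.80 catches every true match but admits two false positives, while 0.90 and above miss most true matches; 0.85 balances the two and wins on F1.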

A practical observation from enterprise deployments: lowering the threshold from 0.90 to 0.85 typically increases recall by 10–15% (catching significantly more true matches) while only increasing false positives by 2–3%. This is often the highest-ROI threshold adjustment available. Below 0.80, false positive rates typically accelerate and the manual review burden becomes unsustainable.

Why Does Standardization Reduce the Need for Fuzzy Matching?

Every format variation that can be eliminated by standardization before comparison is a comparison that does not need fuzzy matching. When "123 North Main Street" and "123 N. Main St." are standardized to "123 N MAIN ST" before comparison, the match is exact. The fuzzy matching engine is not needed for that field, and the overall match confidence increases because one field produces a certain result instead of a probabilistic one.
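A toy standardizer shows how the two address variants above collapse to the same string. The abbreviation table is hypothetical and minimal; production standardizers rely on much larger reference tables and parse address components rather than mapping tokens one by one.

```python
import re

# Hypothetical abbreviation table for illustration only
ADDRESS_ABBREVIATIONS = {
    "NORTH": "N", "SOUTH": "S", "EAST": "E", "WEST": "W",
    "STREET": "ST", "AVENUE": "AVE", "ROAD": "RD", "BOULEVARD": "BLVD",
}


def standardize_address(raw: str) -> str:
    # Uppercase, turn punctuation into spaces, then abbreviate each token
    cleaned = re.sub(r"[^\w\s]", " ", raw.upper())
    return " ".join(ADDRESS_ABBREVIATIONS.get(tok, tok) for tok in cleaned.split())


a = standardize_address("123 North Main Street")
b = standardize_address("123 N. Main St.")
print(a)       # 123 N MAIN ST
print(a == b)  # True
```

After this step the address field can be compared with a plain equality check, leaving fuzzy comparison for the variations standardization cannot resolve.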

MatchLogic customer benchmarks show that standardizing data before fuzzy matching improves accuracy by 40–50%. The integrated pipeline (standardize first, then match) eliminates format noise and lets fuzzy algorithms focus on genuine data quality issues: actual typos, genuine name variants, and real differences that require similarity scoring. For data deduplication, where false positives are particularly costly, this preprocessing step is non-negotiable.

"Matched 1.8 million records across three systems with under 2% false positives. Finally have a single source of truth we actually trust."

1.8M records matched using hybrid fuzzy techniques

Where Are Fuzzy Matching Techniques Applied in Enterprise Scenarios?

Healthcare: Patient Name Matching

Patient name matching requires Jaro-Winkler for character-level name variants plus Double Metaphone for transliterated names (common in multilingual populations). A 500-bed hospital system matching 2 million patient records used this combination with multi-pass blocking (name + DOB, SSN fragment + ZIP) and reduced its duplicate rate from 11.2% to 0.8%. For enterprise fuzzy matching software selection in healthcare contexts, auditability of match decisions is the primary requirement.
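The multi-pass blocking described above can be sketched as follows: each pass groups records by a key, and the candidate pairs from all passes are unioned before the more expensive Jaro-Winkler and Double Metaphone comparisons run. The field names (`last`, `dob`, `ssn4`, `zip`) and the records are hypothetical.

```python
from collections import defaultdict
from itertools import combinations


def candidate_pairs(records, blocking_keys):
    """Union of record-index pairs that share a key in at least one blocking pass."""
    pairs = set()
    for key_fn in blocking_keys:
        blocks = defaultdict(list)
        for idx, rec in enumerate(records):
            blocks[key_fn(rec)].append(idx)
        for members in blocks.values():
            pairs.update(combinations(members, 2))  # every pair within a block
    return pairs


# Hypothetical patient records for illustration only
patients = [
    {"last": "SMITH", "dob": "1980-01-02", "ssn4": "1234", "zip": "02139"},
    {"last": "SMITH", "dob": "1980-01-02", "ssn4": "9876", "zip": "10001"},
    {"last": "SMYTH", "dob": "1980-01-02", "ssn4": "1234", "zip": "02139"},
    {"last": "JONES", "dob": "1975-05-05", "ssn4": "5555", "zip": "60601"},
]
passes = [
    lambda r: (r["last"], r["dob"]),  # pass 1: name + DOB
    lambda r: (r["ssn4"], r["zip"]),  # pass 2: SSN fragment + ZIP
]
print(sorted(candidate_pairs(patients, passes)))  # [(0, 1), (0, 2)]
```

Record 2 ("SMYTH") escapes the name + DOB pass because of the typo but is still paired with record 0 by the SSN fragment + ZIP pass, which is why more than one pass is run.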

Financial Services: Sanctions List Screening

KYC/AML screening matches customer names against sanctions lists (OFAC, EU Sanctions, PEP databases). This requires high recall: missing a true match against a sanctioned entity carries severe regulatory penalties. Financial institutions typically use Jaro-Winkler + Soundex with lower-than-normal thresholds (0.80 or below), accepting a higher false positive rate to minimize false negatives. The manual review burden is the cost of regulatory safety.

Retail: Product Catalog Deduplication

Product matching uses Levenshtein for SKU and model number comparisons (where single-character errors are common) plus cosine similarity for product descriptions (where word order and phrasing vary). A retailer with 500,000 SKUs across 12 regional catalogs used this combination to identify 15,000 duplicate products listed under different codes, reducing inventory management overhead by 18%.

Matching the Right Algorithm to the Right Data

Fuzzy matching is not a single technique; it is a family of algorithms, each optimized for different data types and error patterns. The most effective enterprise implementations assign algorithms per field type (Jaro-Winkler for names, Levenshtein for addresses, cosine for company names, phonetic for transliterated names) and apply them in parallel within a unified matching pipeline.

MatchLogic supports all five algorithm categories within a single platform, with per-field algorithm assignment, visual threshold tuning, and integrated standardization that eliminates format noise before fuzzy comparison begins. For organizations that need both accuracy and auditability, every algorithm's score contribution is logged and traceable for every match decision.

Frequently Asked Questions

What are the main categories of fuzzy matching techniques?

The five categories are edit-distance (Levenshtein, Damerau-Levenshtein), character-transposition (Jaro, Jaro-Winkler), phonetic (Soundex, Metaphone, Double Metaphone), token-based (Jaccard, cosine similarity, TF-IDF), and hybrid (Monge-Elkan, SoftTF-IDF). Enterprise tools apply multiple categories per comparison for maximum accuracy.

Which fuzzy matching technique is best for matching person names?

Jaro-Winkler is the primary algorithm for person names because its prefix bonus captures common name variants. Double Metaphone should be applied as a secondary technique to catch phonetic variants and transliterated names. For nickname resolution ("Bob" to "Robert"), reference dictionaries are needed alongside algorithmic comparison.

How do you choose a fuzzy matching threshold?

Create a labeled validation set of at least 500 record pairs. Run matching at multiple thresholds (0.80, 0.85, 0.90, 0.95) and measure precision and recall at each level. Choose the threshold that maximizes F1 score for your data. Lowering from 0.90 to 0.85 typically gains 10-15% recall with only 2-3% more false positives.

Does standardization reduce the need for fuzzy matching?

Yes. Every format variation eliminated by standardization before comparison converts a fuzzy match into an exact match. MatchLogic benchmarks show 40-50% accuracy improvement when data is standardized before fuzzy comparison. Standardization should always precede matching in the pipeline.
