Data Accuracy in Financial Services: Regulatory Pressure and Operational Risk

Data accuracy in financial services is the degree to which customer records, transaction data, and risk information are correct, complete, and free of duplicates across all systems within a financial institution. According to Gartner, organizations in the financial sector lose an average of $15 million per year due to poor data quality. For banks, insurers, and investment firms, inaccurate data does not just create operational inefficiency; it triggers regulatory fines, enables financial crime, distorts risk models, and erodes the trust that the entire financial system depends on.

This guide examines data accuracy through the five operational lenses where it has the greatest impact in financial services: KYC and anti-money laundering compliance, regulatory reporting, fraud detection, risk management, and post-merger data consolidation. Each section identifies the specific data quality failures that create exposure and the proven strategies for preventing them.

Post-merger integration in financial services surfaces many of the same data migration problems seen in other regulated industries — schema drift, duplicate records, and broken lineage.

Healthcare faces the same accuracy pressure through different regulation — see our companion guide on data quality in healthcare for EMPI and HIPAA-specific patterns.

How Does Data Accuracy Affect KYC and AML Compliance?

Know Your Customer (KYC) and Anti-Money Laundering (AML) programs are only as effective as the data they operate on. When customer records contain duplicate entries, inconsistent name spellings, outdated addresses, or missing identification fields, the entire compliance framework weakens.

A customer named "Mohammed Al-Rashid" at one branch and "M. Alrashid" at another is two records in the system but one person in reality. Without entity resolution to link these records, each identity is screened independently against sanctions lists and transaction monitoring thresholds. If Mohammed deposits $8,000 in cash at Branch A and $7,000 in cash at Branch B on the same business day, neither deposit on its own exceeds the $10,000 Currency Transaction Report (CTR) threshold, but the combined $15,000 should have triggered a report. Deliberately splitting deposits to stay under the threshold is structuring, a federal offense, and the bank's system cannot see the pattern because of a data quality failure.
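The gap can be illustrated with a minimal sketch. It assumes two same-day cash deposits and a hypothetical `entity_of` mapping produced by an entity resolution step; the record IDs, entity IDs, and function name are illustrative and not taken from any particular monitoring system.

```python
from collections import defaultdict

CTR_THRESHOLD = 10_000  # reporting threshold cited in the text

# Hypothetical same-day cash deposits: (record_id, branch, amount)
deposits = [
    ("REC-A-001", "Branch A", 8_000),
    ("REC-B-417", "Branch B", 7_000),
]

# Hypothetical entity resolution output: record_id -> resolved entity
entity_of = {"REC-A-001": "ENT-1", "REC-B-417": "ENT-1"}


def totals_over_threshold(deposits, key_of):
    """Sum deposits per key and return the keys whose total exceeds the threshold."""
    totals = defaultdict(int)
    for record_id, _branch, amount in deposits:
        totals[key_of(record_id)] += amount
    return {key: total for key, total in totals.items() if total > CTR_THRESHOLD}


# Monitoring each record on its own: nothing crosses the threshold.
flagged_by_record = totals_over_threshold(deposits, lambda rid: rid)
# Monitoring the resolved entity: the combined total crosses it.
flagged_by_entity = totals_over_threshold(deposits, lambda rid: entity_of[rid])

print(flagged_by_record)  # {}
print(flagged_by_entity)  # {'ENT-1': 15000}
```

The aggregation logic is identical in both calls. Only the key changes, which is why the quality of the identity link determines whether the report is filed.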

The cost of KYC data remediation is substantial. According to Thomson Reuters, the average financial institution spends $60 million per year on KYC compliance. When customer data is inaccurate, a significant portion of that budget goes to manual remediation rather than genuine risk assessment. Industry estimates put the per-record remediation cost at $25 to $50. For a mid-size bank with 5 million customer records and a 10% data error rate, that translates to $12.5 million to $25 million in avoidable rework.
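The rework estimate follows directly from the figures above. A short worked calculation, using only the numbers quoted in the text (the variable names are illustrative):

```python
customer_records = 5_000_000
error_rate_percent = 10
cost_per_record_low, cost_per_record_high = 25, 50  # USD per remediated record

records_needing_rework = customer_records * error_rate_percent // 100
rework_cost_low = records_needing_rework * cost_per_record_low
rework_cost_high = records_needing_rework * cost_per_record_high

print(f"{records_needing_rework:,} records")             # 500,000 records
print(f"${rework_cost_low:,} to ${rework_cost_high:,}")  # $12,500,000 to $25,000,000
```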

What Entity Resolution Does for KYC

Entity resolution connects all records belonging to the same customer, regardless of name variations, address changes, or identifier differences across systems. It uses probabilistic and fuzzy matching algorithms to determine that "Robert J. Smith" at "123 Main St, Apt 4B" and "Bob Smith" at "123 Main Street #4B" are the same person, with a quantified confidence score. Once resolved, the customer's complete activity is visible in a single view, enabling accurate sanctions screening, transaction monitoring, and risk scoring.
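As a rough illustration of how such a score can be produced, the sketch below normalizes names and addresses and then compares them with Python's standard `difflib.SequenceMatcher`. It is a deliberately simplified stand-in for a production matcher, not a probabilistic model: the nickname and abbreviation tables, the equal weighting, and the function names are all assumptions made for this example.

```python
import re
from difflib import SequenceMatcher

NICKNAMES = {"bob": "robert"}     # illustrative, far from exhaustive
ABBREVIATIONS = {"st": "street"}  # illustrative, far from exhaustive


def _tokens(text):
    """Lowercase, replace punctuation with spaces, and split into tokens."""
    return re.sub(r"[^a-z0-9 ]", " ", text.lower()).split()


def normalize_name(name):
    # Drop single-letter tokens (middle initials) and expand known nicknames.
    tokens = [t for t in _tokens(name) if len(t) > 1]
    return " ".join(NICKNAMES.get(t, t) for t in tokens)


def normalize_address(address):
    # Treat "#" as a unit marker, then expand known abbreviations.
    tokens = _tokens(address.replace("#", " apt "))
    return " ".join(ABBREVIATIONS.get(t, t) for t in tokens)


def match_confidence(a, b, name_weight=0.5):
    """Weighted average of name and address similarity, between 0 and 1."""
    name_score = SequenceMatcher(
        None, normalize_name(a["name"]), normalize_name(b["name"])
    ).ratio()
    address_score = SequenceMatcher(
        None, normalize_address(a["address"]), normalize_address(b["address"])
    ).ratio()
    return name_weight * name_score + (1 - name_weight) * address_score


robert = {"name": "Robert J. Smith", "address": "123 Main St, Apt 4B"}
bob = {"name": "Bob Smith", "address": "123 Main Street #4B"}
other = {"name": "Roberta Smyth", "address": "77 Oak Ave"}

score_same = match_confidence(robert, bob)
score_other = match_confidence(robert, other)
print(score_same)  # 1.0
print(score_other < score_same)  # True
```

Both example records normalize to the same strings, so the score is 1.0. Real data rarely lines up this cleanly, which is why production systems pair scores like this with review thresholds rather than treating them as a yes-or-no answer.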


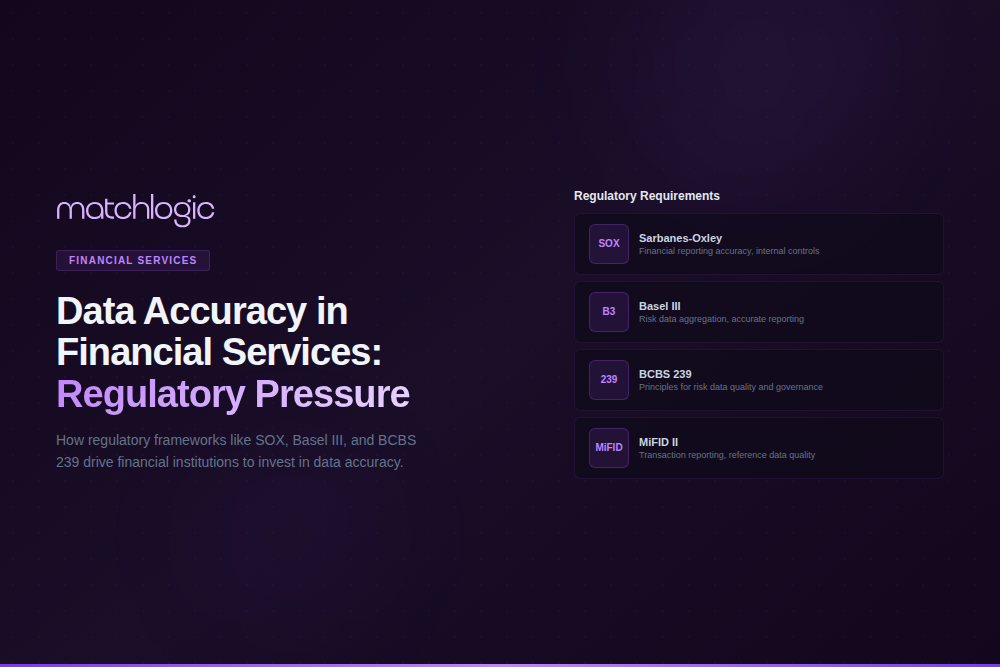

What Regulations Require Data Accuracy in Financial Services?

Financial services is among the most heavily regulated industries for data quality. Multiple overlapping frameworks impose specific requirements on the accuracy, completeness, timeliness, and lineage of financial data. Non-compliance carries consequences that range from monetary penalties to criminal prosecution and loss of banking licenses.

BCBS 239 deserves particular attention. Published in 2013 in response to the 2007-2008 financial crisis, it established 14 principles for risk data quality. As of 2024, only 2 of 31 global systemically important banks fully comply with all principles, according to PwC's analysis of the Basel Committee's progress report. The most common compliance gaps are in data accuracy (Principle 3) and data completeness (Principle 4), both of which require institutions to demonstrate that their risk data is free of material errors and includes all relevant exposures.

How Does Poor Data Accuracy Enable Financial Fraud?

Fraud detection systems depend on the ability to see a complete picture of each customer's activity across all accounts, channels, and products. When customer data is fragmented across multiple records, fraud detection systems see each fragment independently and fail to connect patterns that would be obvious in a unified view.

Consider a synthetic identity fraud scenario. A fraudster creates an identity using a real Social Security number (often belonging to a child or elderly person) combined with a fabricated name and address. The fraudster opens accounts at three different branches under slightly different name variations. Without entity resolution linking these accounts, the bank's fraud detection system treats each account as a separate, legitimate customer. The fraudster builds credit history on each account independently, then maxes out all credit lines simultaneously. The Federal Reserve estimates that synthetic identity fraud costs US lenders $6 billion per year.

The prevention requires entity resolution across all customer-facing systems. When every account opening is matched against the existing customer base using fuzzy matching algorithms (not just exact SSN matching), synthetic identities are flagged at the point of application. The match confidence score reveals that three "customers" share the same SSN but have different names, a pattern that triggers immediate review rather than automatic approval.
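The shared-SSN pattern described above can be sketched in a few lines. This example covers only the exact-identifier half of the check (a real deployment would combine it with fuzzy name and address matching), and the application records, the deliberately invalid SSN values, and the function name are hypothetical.

```python
from collections import defaultdict

# Hypothetical account applications; the SSNs are deliberately invalid placeholders.
applications = [
    {"branch": "Branch 1", "name": "Jordan Avery", "ssn": "000-00-0001"},
    {"branch": "Branch 2", "name": "Jordon Averey", "ssn": "000-00-0001"},
    {"branch": "Branch 3", "name": "J. Avary", "ssn": "000-00-0001"},
    {"branch": "Branch 1", "name": "Priya Nair", "ssn": "000-00-0002"},
]


def flag_shared_ssn(applications):
    """Return SSNs that appear under more than one distinct name."""
    names_by_ssn = defaultdict(set)
    for app in applications:
        names_by_ssn[app["ssn"]].add(app["name"].strip().lower())
    return {
        ssn: sorted(names)
        for ssn, names in names_by_ssn.items()
        if len(names) > 1
    }


flagged = flag_shared_ssn(applications)
print(flagged)
# {'000-00-0001': ['j. avary', 'jordan avery', 'jordon averey']}
```

A flag here is a trigger for review, not proof of fraud: legitimate name changes and data entry errors produce the same pattern.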

What Is the Impact of Data Accuracy on Risk Management?

Risk models are mathematical constructs built on data. When the underlying data contains errors, the model's outputs are wrong in ways that may not be apparent until a stress event exposes the gap. The 2007-2008 financial crisis demonstrated this at a systemic level: risk models underestimated exposure because the data they consumed was incomplete, inconsistent, and siloed across business units.

A credit risk model that cannot link a borrower's personal loan, mortgage, and credit card accounts because they are stored under different customer identifiers will underestimate that borrower's total exposure. Multiply this across thousands of borrowers and the bank's aggregate risk calculation is materially wrong. The model shows sufficient capital reserves when the actual exposure requires significantly more.
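The effect on exposure can be shown with a small sketch. It assumes a matching step has already produced pairs of account records believed to belong to the same borrower, and it uses a union-find structure to group them transitively; the account IDs, balances, and function name are hypothetical.

```python
from collections import defaultdict

# Hypothetical outstanding balances, keyed by the ID each source system uses.
balances = {
    "LOAN-881": 20_000,   # personal loan
    "MTG-204": 310_000,   # mortgage
    "CARD-557": 12_000,   # credit card
    "LOAN-990": 15_000,   # a different borrower
}

# Hypothetical output of a matching step: pairs judged to be the same borrower.
matched_pairs = [("LOAN-881", "MTG-204"), ("MTG-204", "CARD-557")]


def resolve_entities(record_ids, matched_pairs):
    """Group records into entities with union-find so that links are transitive."""
    parent = {rid: rid for rid in record_ids}

    def find(rid):
        while parent[rid] != rid:
            parent[rid] = parent[parent[rid]]  # path halving
            rid = parent[rid]
        return rid

    for a, b in matched_pairs:
        parent[find(a)] = find(b)

    return {rid: find(rid) for rid in record_ids}


entity_of = resolve_entities(balances, matched_pairs)

exposure_by_entity = defaultdict(int)
for record_id, balance in balances.items():
    exposure_by_entity[entity_of[record_id]] += balance

largest_siloed = max(balances.values())
largest_resolved = max(exposure_by_entity.values())
print(largest_siloed)    # 310000
print(largest_resolved)  # 342000
```

In the siloed view the largest single exposure is the mortgage alone. Once the three accounts are linked, the same borrower's exposure is 342,000, and that difference is what the aggregate calculation misses.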

BCBS 239 Principle 3 (Accuracy and Integrity) requires that risk data be aggregated on a largely automated basis to minimize the probability of errors. Principle 4 (Completeness) requires that a bank capture and aggregate all material risk data across the banking group. For institutions with legacy systems that store customer data in silos without unified identifiers, meeting these requirements demands an entity resolution layer that connects records across systems and produces a single view of each counterparty's exposure.

How Does Data Accuracy Affect Post-Merger Integration in Financial Services?

Bank mergers and acquisitions create the most complex data accuracy challenges in financial services. Two institutions, each with their own customer databases, account numbering systems, product codes, and data quality standards, must be consolidated into a single operating environment. The combined customer database inevitably contains massive overlap: the same customers who banked at Institution A also banked at Institution B.

A regional bank merger involving 2.8 million customer records from the acquiring institution and 1.9 million from the target found, after entity resolution, that 340,000 customers existed in both databases. Without deduplication, those 340,000 customers would have received duplicate welcome packages, duplicate regulatory disclosures, and duplicate account statements. More critically, their combined deposit and lending relationships would have been invisible to relationship managers, risk officers, and the compliance team monitoring concentration risk.

Pre-merger data quality assessment should begin during due diligence, not after close. Profiling the target institution's customer data reveals the baseline duplicate rate, completeness gaps, and format inconsistencies that will need resolution. This assessment directly informs integration cost estimates and timelines. Institutions that defer data quality work until post-close consistently underestimate both by 40% or more.

The Financial Impact of Data Inaccuracy: Cost by Use Case

How to Build a Data Accuracy Strategy for Financial Services

A data accuracy strategy for financial services must address three layers simultaneously: the data itself (profiling, cleansing, deduplication), the processes that produce and consume data (data entry, integration, reporting), and the governance structure that enforces standards (policies, ownership, metrics, audit).

Profile Before You Fix

Run automated data profiling across all customer-facing systems: core banking, CRM, lending, wealth management, insurance, and any ancillary databases. Measure completeness (percentage of records with all required fields populated), uniqueness (duplicate detection rate), consistency (format variations across the same field), and validity (values outside acceptable ranges). This baseline tells you where the problems are and how severe they are before you commit remediation resources.
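A minimal profiling pass over a handful of records might look like the sketch below. The field names, sample records, duplicate key (name plus date of birth), and the fixed `as_of` date are assumptions chosen to keep the example small; a real profile would run per system and per field.

```python
import re
from datetime import date

REQUIRED_FIELDS = ("name", "dob", "phone")

# Hypothetical customer records from one source system.
records = [
    {"name": "Ana Ruiz", "dob": "1984-03-12", "phone": "555-0100"},
    {"name": "Ana Ruiz", "dob": "1984-03-12", "phone": "(555) 0100"},
    {"name": "Li Wei", "dob": "", "phone": "555-0101"},
    {"name": "Sam Obi", "dob": "2091-01-01", "phone": "555-0102"},
]


def profile(records, required, as_of):
    total = len(records)
    # Completeness: share of records with every required field populated.
    complete = sum(all(r.get(f) for f in required) for r in records)
    # Uniqueness: share of records that repeat an existing (name, dob) key.
    distinct = len({(r["name"].strip().lower(), r["dob"]) for r in records})
    # Consistency: number of distinct digit patterns used for phone numbers.
    phone_formats = {re.sub(r"\d", "9", r["phone"]) for r in records if r.get("phone")}
    # Validity: populated birth dates that fail to parse or lie in the future.
    invalid_dob = 0
    for r in records:
        if not r.get("dob"):
            continue  # missing values are counted under completeness
        try:
            if date.fromisoformat(r["dob"]) > as_of:
                invalid_dob += 1
        except ValueError:
            invalid_dob += 1
    return {
        "completeness": complete / total,
        "duplicate_rate": 1 - distinct / total,
        "phone_formats": len(phone_formats),
        "invalid_dob": invalid_dob,
    }


report = profile(records, REQUIRED_FIELDS, as_of=date(2025, 1, 1))
print(report)
# {'completeness': 0.75, 'duplicate_rate': 0.25, 'phone_formats': 2, 'invalid_dob': 1}
```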

Deduplicate Across Systems, Not Within Them

Most data quality efforts focus on deduplicating within a single system: cleaning the CRM, or cleaning the core banking platform. This misses the cross-system duplicates that cause the most damage for KYC, AML, and risk management. A customer may have a clean, deduplicated record in the CRM and a separate clean record in the lending system, but those two records are not linked. Entity resolution across systems is the step that creates the unified customer view required for compliance and risk management. MatchLogic's on-premise deployment model allows financial institutions to run entity resolution across all systems without moving sensitive customer data outside their controlled infrastructure.

Embed Quality at the Point of Entry

Cleaning data after it enters the system is reactive and expensive. Embedding validation rules, standardization, and duplicate detection at the point of data entry (account opening, loan application, trade capture) prevents quality problems from forming. Real-time matching against the existing customer base during onboarding catches duplicates before they become compliance gaps. This is where data quality transitions from a periodic cleanup project to a continuous operational capability.
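One way to picture a point-of-entry check is a function that runs before a new record is written. The sketch below is an assumption-laden illustration: the required fields, the 0.85 similarity threshold, the rule that candidates must share a date of birth, and the three outcomes are all hypothetical choices, and the similarity measure is Python's standard `difflib.SequenceMatcher` rather than a production matching engine.

```python
from difflib import SequenceMatcher

REQUIRED_FIELDS = ("name", "dob", "id_number")

# Hypothetical existing customer base.
existing_customers = [
    {"customer_id": "C-1001", "name": "John Smith", "dob": "1979-06-30"},
    {"customer_id": "C-1002", "name": "Maria Lopez", "dob": "1990-11-02"},
]


def check_application(application, existing_customers, threshold=0.85):
    """Validate required fields, then look for likely duplicates before onboarding."""
    missing = [f for f in REQUIRED_FIELDS if not application.get(f)]
    if missing:
        return "reject", missing

    name = application["name"].strip().lower()
    candidates = [
        c["customer_id"]
        for c in existing_customers
        if c["dob"] == application["dob"]
        and SequenceMatcher(None, name, c["name"].strip().lower()).ratio() >= threshold
    ]
    if candidates:
        return "review", candidates
    return "accept", []


incomplete = check_application(
    {"name": "Jon Smith", "dob": "1979-06-30", "id_number": ""}, existing_customers
)
near_duplicate = check_application(
    {"name": "Jon Smith", "dob": "1979-06-30", "id_number": "ID-77"}, existing_customers
)
new_customer = check_application(
    {"name": "Priya Nair", "dob": "1988-02-14", "id_number": "ID-78"}, existing_customers
)

print(incomplete)      # ('reject', ['id_number'])
print(near_duplicate)  # ('review', ['C-1001'])
print(new_customer)    # ('accept', [])
```

Routing near-matches to review instead of rejecting them outright keeps onboarding moving while still preventing a second record from being created silently.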

Data Accuracy Is the Foundation of Financial System Integrity

Every function in a financial institution depends on accurate data. Compliance teams need it to screen customers and report suspicious activity. Risk managers need it to calculate exposure and allocate capital. Fraud teams need it to detect synthetic identities and anomalous behavior. Regulators need it to assess institutional stability and protect the financial system.

The institutions that invest in entity resolution, data profiling, and continuous quality monitoring build a foundation that supports compliance, reduces operational risk, and creates the data infrastructure required for AI-driven financial services. The institutions that do not will continue to absorb the compounding costs of remediation, fines, and undetected fraud.

Frequently Asked Questions

What is BCBS 239 and why does it matter for data quality?

BCBS 239 is a set of 14 principles published by the Basel Committee on Banking Supervision in 2013 to strengthen banks' risk data aggregation and reporting. It requires that risk data be accurate, complete, timely, and adaptable. As of 2024, only 2 of 31 global systemically important banks fully comply with all principles, with data accuracy and completeness being the most common gaps.

How much does poor data quality cost financial institutions?

According to Gartner, financial sector organizations lose an average of $15 million per year to poor data quality. The costs include KYC remediation ($25-$50 per record), AML false positive investigations ($15-$50 per alert), regulatory fines (which can exceed $500,000 per violation), and fraud losses from undetected synthetic identities.

What is the connection between duplicate records and financial crime?

Duplicate customer records allow individuals to maintain multiple identities within the same institution, enabling them to structure transactions below reporting thresholds (a federal offense), bypass sanctions screening, and build fraudulent credit histories. Entity resolution that links all records belonging to the same individual is the primary technical defense against these risks.

How does entity resolution support AML compliance?

Entity resolution creates a unified view of each customer by linking records across all systems, regardless of name variations, address changes, or identifier differences. This unified view enables accurate transaction monitoring (all activity attributed to the correct person), complete sanctions screening (no identity fragments slip through), and reliable suspicious activity detection across the customer's full relationship.

Should financial institutions process data quality on-premise or in the cloud?

The answer depends on regulatory requirements and risk tolerance. Institutions subject to cross-border data transfer restrictions (GDPR, data localization laws, certain BCBS 239 interpretations) may need on-premise processing to maintain compliance. On-premise data quality platforms like MatchLogic allow institutions to run profiling, matching, and deduplication without transmitting sensitive customer data to external environments.

What SOX Section 404 requirements relate to data accuracy?

SOX Section 404 requires management to assess and report on the effectiveness of internal controls over financial reporting, and external auditors must attest to that assessment. Data accuracy is a foundational internal control: if the underlying data feeding financial reports contains errors, duplicates, or inconsistencies, the resulting financial statements are unreliable and the company may face restatement requirements, personal liability for executives, and fines.
