Data Standardization Tools: Selection Criteria for Enterprise Environments

Key Takeaways

- ✓ Data standardization tools transform inconsistent field values into uniform formats, directly improving downstream matching accuracy by 15% to 25%.

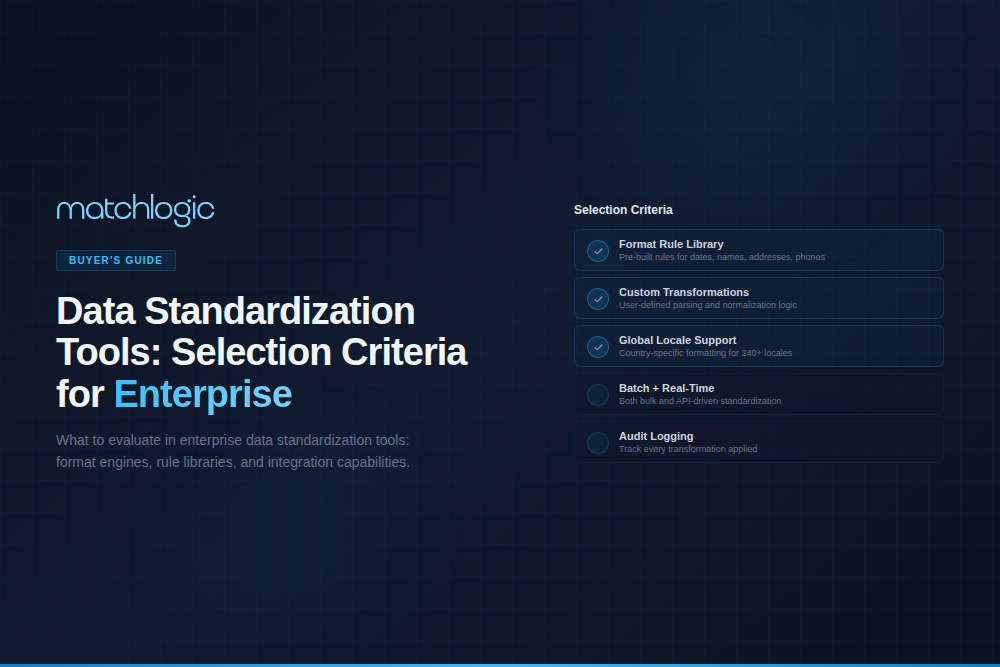

- ✓ Evaluate tools across eight criteria: field-type coverage, rule transparency, automation, scalability, deployment, integration, auditability, and total cost.

- ✓ Standardization-first platforms outperform general-purpose ETL tools for name, address, and code normalization because they include domain-specific reference libraries.

- ✓ On-premise deployment is non-negotiable for regulated industries where data residency and processing sovereignty are compliance requirements.

- ✓ According to Gartner, poor data quality costs organizations an average of $12.9 million per year; standardization can recover a significant share of those losses.

Data standardization tools are software platforms that transform inconsistent data values into uniform formats by applying parsing, normalization, and validation rules across fields like names, addresses, dates, phone numbers, and industry codes. These tools automate the process of converting variations ("St." to "Street," "Bob" to "Robert," "CA" to "California") into canonical forms that downstream systems can process reliably. For enterprises managing millions of records across dozens of source systems, standardization tools are the prerequisite to accurate matching, deduplication, and analytics.

According to Gartner, poor data quality costs organizations an average of $12.9 million per year. A significant portion of that cost traces back to unstandardized data: records that fail to match because "Robert Smith" and "Bob Smith" are treated as different people, or because "123 Main St." and "123 Main Street" are stored as separate addresses. The right standardization tool eliminates these inconsistencies before they cascade into duplicate records, failed integrations, and inaccurate reporting.

What Do Data Standardization Tools Actually Do?

Data standardization tools perform four core functions: parsing, normalization, validation, and enrichment. Parsing breaks compound fields into discrete components. A single "name" field containing "Dr. Robert J. Smith III" becomes title ("Dr."), first name ("Robert"), middle initial ("J."), last name ("Smith"), and suffix ("III"). A concatenated address field becomes street number, street name, street type, unit, city, state, and postal code.
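
The name example above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular product parses names: the `TITLES` and `SUFFIXES` sets and the `parse_name` function are hypothetical, and the logic assumes Western name order with space-separated tokens.

```python
# Hypothetical, deliberately tiny reference sets; production tools ship far larger ones.
TITLES = {"dr", "mr", "mrs", "ms", "prof"}
SUFFIXES = {"jr", "sr", "ii", "iii", "iv"}


def parse_name(raw: str) -> dict:
    """Split a Western-order personal name into labeled components."""
    tokens = raw.split()
    parts = {"title": "", "first": "", "middle": "", "last": "", "suffix": ""}

    # A leading token is a title if it appears in the title set (ignoring the period).
    if tokens and tokens[0].rstrip(".").lower() in TITLES:
        parts["title"] = tokens.pop(0)
    # A trailing token is a suffix if it appears in the suffix set.
    if tokens and tokens[-1].rstrip(".").lower() in SUFFIXES:
        parts["suffix"] = tokens.pop()
    # Of what remains: first token is the first name, last token is the last name,
    # and anything in between is treated as middle name(s) or initial(s).
    if tokens:
        parts["first"] = tokens.pop(0)
    if tokens:
        parts["last"] = tokens.pop()
    parts["middle"] = " ".join(tokens)
    return parts


parsed = parse_name("Dr. Robert J. Smith III")
```

Here `parsed` holds the five components listed above. The sketch would mis-handle inputs such as "Smith, Robert" or multi-word surnames, which is exactly the kind of case that domain-specific parsers and reference libraries exist to cover.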

Normalization converts parsed values into canonical forms using reference libraries and rule sets. Nicknames map to legal names ("Bob" to "Robert," "Liz" to "Elizabeth"). Abbreviations expand ("Corp." to "Corporation," "LLC" to "Limited Liability Company"). Date formats convert to ISO 8601 ("March 15, 2026" and "3/15/26" both become "2026-03-15"). Phone numbers normalize to E.164 format.
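
As a rough sketch of these normalization steps, the Python below maps nicknames, converts dates to ISO 8601, and formats phone numbers as E.164. The `NICKNAMES` table, the `DATE_FORMATS` list, and all function names are illustrative assumptions. The date logic assumes U.S. month-first input, and the phone logic assumes 10-digit North American numbers.

```python
from datetime import datetime

# Hypothetical two-entry nickname table; real libraries hold thousands of entries.
NICKNAMES = {"bob": "Robert", "liz": "Elizabeth"}

# Accepted input formats, tried in order. Assumes U.S. month-first conventions.
DATE_FORMATS = ("%B %d, %Y", "%m/%d/%y", "%m/%d/%Y", "%Y-%m-%d")


def normalize_first_name(name: str) -> str:
    """Map a known nickname to its legal form; leave unknown names unchanged."""
    cleaned = name.strip()
    return NICKNAMES.get(cleaned.lower(), cleaned)


def normalize_date(raw: str) -> str:
    """Return the date as an ISO 8601 string (YYYY-MM-DD)."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date format: {raw!r}")


def normalize_us_phone(raw: str) -> str:
    """Return a North American number in E.164 form (+1 followed by 10 digits)."""
    digits = "".join(ch for ch in raw if ch.isdigit())
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]
    if len(digits) != 10:
        raise ValueError(f"expected 10 digits, got {len(digits)}: {raw!r}")
    return "+1" + digits
```

Raising an error on unrecognized input, rather than passing the value through, is a deliberate choice in this sketch: it routes unparseable values to an exception queue instead of silently leaving them non-standard.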

Validation checks standardized values against authoritative reference data. Address validation compares results against USPS CASS-certified databases or international postal files. Email validation confirms domain existence and mailbox format. Industry code validation cross-references SIC, NAICS, or ISIC codes against current classification tables. Enrichment adds missing data elements: appending ZIP+4 codes, geocoordinates, or firmographic attributes from third-party sources.

Why Does Standardization Matter Before Matching?

Matching algorithms compare field values character by character, token by token, or phonetically. When the same real-world value appears in different formats across source systems, matching accuracy drops. Studies cited by Peter Christen in "Data Matching" (Springer, 2012) found that data standardization alone improved match recall by 10% to 20% in cross-system record linkage projects.

Consider a practical example. Two ERP systems contain records for the same supplier. System A stores "Johnson & Johnson Inc., 1 Johnson & Johnson Plaza, New Brunswick, NJ 08901." System B stores "J&J, 1 J&J Plz, New Brunswick, New Jersey, 08901-1234." Without standardization, a fuzzy matching algorithm running Jaro-Winkler on the company name field returns a similarity score of 0.62, well below most match thresholds. After standardization expands abbreviations, normalizes punctuation, and parses addresses into components, the same algorithm returns 0.94.
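
A minimal pure-Python sketch of this effect follows. The `jaro` and `jaro_winkler` functions implement the standard definitions with a prefix scale of 0.1 and a four-character prefix cap. The `ALIASES` table, the `LEGAL_SUFFIXES` set, and `standardize_company` are hypothetical stand-ins for a real reference library. Exact scores depend on the implementation and on the strings compared, so this sketch is not expected to reproduce the figures quoted above.

```python
def jaro(s1: str, s2: str) -> float:
    """Jaro similarity between two strings, in the range 0.0 to 1.0."""
    if s1 == s2:
        return 1.0
    len1, len2 = len(s1), len(s2)
    if len1 == 0 or len2 == 0:
        return 0.0
    # Characters match only if equal and no farther apart than this window.
    window = max(max(len1, len2) // 2 - 1, 0)
    matched1 = [False] * len1
    matched2 = [False] * len2
    matches = 0
    for i, ch in enumerate(s1):
        for j in range(max(0, i - window), min(len2, i + window + 1)):
            if not matched2[j] and s2[j] == ch:
                matched1[i] = matched2[j] = True
                matches += 1
                break
    if matches == 0:
        return 0.0
    # Count matched characters that appear in a different order, then halve.
    k = 0
    out_of_order = 0
    for i in range(len1):
        if matched1[i]:
            while not matched2[k]:
                k += 1
            if s1[i] != s2[k]:
                out_of_order += 1
            k += 1
    transpositions = out_of_order // 2
    return (matches / len1 + matches / len2 + (matches - transpositions) / matches) / 3


def jaro_winkler(s1: str, s2: str, prefix_scale: float = 0.1) -> float:
    """Jaro similarity boosted for a shared prefix of up to four characters."""
    base = jaro(s1, s2)
    prefix = 0
    for a, b in zip(s1[:4], s2[:4]):
        if a != b:
            break
        prefix += 1
    return base + prefix * prefix_scale * (1 - base)


# Hypothetical reference data for illustration only.
ALIASES = {"j&j": "johnson & johnson"}
LEGAL_SUFFIXES = {"inc", "corp", "llc", "ltd", "co"}


def standardize_company(raw: str) -> str:
    """Lowercase, drop punctuation and legal suffixes, then expand known aliases."""
    text = raw.lower().replace(",", " ").replace(".", " ")
    name = " ".join(t for t in text.split() if t not in LEGAL_SUFFIXES)
    return ALIASES.get(name, name)


raw_score = jaro_winkler("Johnson & Johnson Inc.", "J&J")
std_score = jaro_winkler(
    standardize_company("Johnson & Johnson Inc."), standardize_company("J&J")
)
```

In this sketch the standardized names become identical, so `std_score` is 1.0 while `raw_score` is substantially lower. As a sanity check on the implementation, the textbook pair "MARTHA" and "MARHTA" scores about 0.961.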

This is not a hypothetical improvement. Every enterprise data matching project that skips standardization pays the cost in false negatives (missed matches), false positives (incorrect matches caused by noise), and hours of manual review. Standardization is not a separate project from matching; it is the first phase of every matching pipeline.

What Are the Key Evaluation Criteria for Data Standardization Tools?

Enterprise tool evaluation requires more than a feature checklist. The following eight criteria separate tools built for enterprise-scale standardization from lightweight utilities that work on small datasets but fail at production volumes.

Evaluation Criteria Comparison Table

| Criterion | What to Evaluate | Green Flag | Red Flag |
|---|---|---|---|
| 1. Field-Type Coverage | Does the tool handle names, addresses, dates, phone numbers, emails, and industry codes with domain-specific logic? | Built-in parsers for 6+ field types with configurable reference libraries | Generic regex-only approach with no domain-specific parsing |
| 2. Rule Transparency | Can you inspect, modify, and audit every transformation rule applied to your data? | Visual rule editor with before/after previews and full audit trail | Black-box AI transformations with no visibility into logic |
| 3. Automation and Scheduling | Can standardization run automatically on new records as they enter your systems? | Real-time API and batch scheduling with event-driven triggers | Manual-only execution requiring user intervention for every run |
| 4. Scalability | Performance at 1M, 10M, and 100M+ records without degradation | Parallel processing with linear throughput scaling; benchmarks available | Single-threaded processing that slows exponentially beyond 500K records |
| 5. Deployment Model | On-premise, cloud, or hybrid deployment to meet data residency requirements | Full on-premise option with no cloud dependency for regulated industries | Cloud-only with no on-premise or air-gapped deployment option |
| 6. Integration Depth | Native connectors to CRMs, ERPs, data warehouses, and data lakes | Pre-built connectors for Salesforce, SAP, Oracle, Snowflake, plus REST API | CSV import/export only with no system-level integration |
| 7. Auditability | Complete transformation lineage: original value, rule applied, result, timestamp, user | Field-level audit log exportable for compliance reporting (SOX, HIPAA, GDPR) | No record of what changed, when, or why |
| 8. Total Cost of Ownership | License, implementation, training, maintenance, and infrastructure costs over 3 years | Transparent pricing with predictable annual cost; no per-record fees at scale | Per-record pricing that escalates unpredictably as data volumes grow |

How Does Field-Type Coverage Affect Standardization Quality?

The most common failure in enterprise standardization projects is applying generic text transformation to fields that require domain-specific logic. A regex pattern that strips special characters works fine for phone numbers but destroys valid data in company names (removing "&" from "Johnson & Johnson") or addresses (removing "#" from apartment numbers).
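
The failure mode is easy to reproduce. The sketch below contrasts a single generic pattern with per-field patterns. The `FIELD_PATTERNS` table and both function names are illustrative assumptions, and the character sets are simplified far below what a production parser would use.

```python
import re


def generic_clean(value: str) -> str:
    """The naive approach: drop everything except ASCII letters, digits, and spaces."""
    return re.sub(r"[^A-Za-z0-9 ]", "", value)


# Hypothetical per-field patterns: each lists the characters to REMOVE for that field.
FIELD_PATTERNS = {
    "phone": re.compile(r"\D"),                # keep digits only
    "company": re.compile(r"[^\w\s&'.,-]"),    # keep "&", apostrophes, basic punctuation
    "address": re.compile(r"[^\w\s#.,/-]"),    # keep "#" and "/" for unit numbers
}


def field_aware_clean(value: str, field_type: str) -> str:
    """Apply the removal pattern registered for the given field type."""
    return FIELD_PATTERNS[field_type].sub("", value).strip()


damaged_company = generic_clean("Johnson & Johnson")       # "&" is lost
damaged_address = generic_clean("123 Main St. #4B")        # "#" and "." are lost
kept_company = field_aware_clean("Johnson & Johnson", "company")
kept_address = field_aware_clean("123 Main St. #4B", "address")
clean_phone = field_aware_clean("(732) 555-0100", "phone")
```

The generic pattern is harmless for the phone number but silently corrupts the other two fields, while the per-field patterns leave valid company and address characters intact.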

Enterprise-grade tools maintain separate parsers and reference libraries for each field type. Name standardization requires nickname dictionaries, cultural name pattern recognition (Eastern vs. Western name order), and title/suffix handling. Address standardization requires postal authority reference files (USPS for the U.S., Royal Mail PAF for the UK, Canada Post NDC). Date standardization must handle dozens of regional formats and detect ambiguous values (is "01/02/2026" January 2 or February 1?).
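
Ambiguity detection for slash-separated dates can be sketched as follows. The function name and the returned labels are hypothetical. The check looks only at whether each of the first two numbers could be a month, and does not validate day-of-month limits such as February 30.

```python
import re


def classify_slash_date(raw: str) -> str:
    """Classify an NN/NN/YYYY date by which field order it can represent."""
    match = re.fullmatch(r"(\d{1,2})/(\d{1,2})/(\d{4})", raw.strip())
    if match is None:
        return "unrecognized"
    first, second = int(match.group(1)), int(match.group(2))
    if not (1 <= first <= 31 and 1 <= second <= 31):
        return "invalid"
    if first > 12 and second > 12:
        return "invalid"          # neither number can be a month
    if first > 12:
        return "day-first"        # e.g. 15/03/2026 can only be 15 March
    if second > 12:
        return "month-first"      # e.g. 03/15/2026 can only be March 15
    if first == second:
        return "unambiguous"      # both readings give the same date
    return "ambiguous"            # e.g. 01/02/2026 needs source-level context
```

A value classified as "ambiguous" cannot be resolved from the string alone. One common approach is to infer the convention per source system from its unambiguous rows, and to route the remainder to an exception queue.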

Ask vendors to demonstrate their tool against a test file containing 10,000 records with mixed name formats (including hyphenated, multi-word, and non-Latin names), international addresses (at least 5 countries), and dates in 4+ formats. The pass rate on this test reveals more than any product demo.

Why Is Rule Transparency Critical for Regulated Industries?

In healthcare, HIPAA requires organizations to demonstrate how patient data is processed and transformed. In financial services, SOX Section 404 mandates internal controls over data that feeds financial reporting. In any GDPR-regulated context, Article 5(1)(d) requires data accuracy and the ability to prove how accuracy is maintained. These regulations make black-box standardization a compliance liability.

Transparent standardization means every transformation is visible, reversible, and auditable. When an auditor asks why "Bob" was changed to "Robert" (or vice versa), the system should produce the exact rule, the timestamp, and the user or process that triggered it. MatchLogic's on-premise architecture gives enterprises full control over rule configuration and transformation logs without sending data to external servers, a requirement in industries where regulation mandates data residency.
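
To make "visible, reversible, and auditable" concrete, here is a minimal sketch of a field-level audit entry. The `AuditEntry` fields, `apply_rule`, and `revert` are hypothetical and do not describe MatchLogic's or any other vendor's log format. Records are assumed to be plain dictionaries with an `id` key.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable


@dataclass(frozen=True)
class AuditEntry:
    """One field-level change: what changed, by which rule, when, and by whom."""
    record_id: str
    field: str
    original: str
    result: str
    rule_id: str
    actor: str
    timestamp: str


def apply_rule(record: dict, field: str, rule_id: str,
               transform: Callable[[str], str], actor: str,
               log: list) -> dict:
    """Apply a transformation to one field and log it if the value changed."""
    original = record[field]
    result = transform(original)
    if result != original:
        log.append(AuditEntry(
            record_id=record["id"], field=field, original=original,
            result=result, rule_id=rule_id, actor=actor,
            timestamp=datetime.now(timezone.utc).isoformat(),
        ))
    # Return a new record so the input is never mutated in place.
    return {**record, field: result}


def revert(record: dict, entry: AuditEntry) -> dict:
    """Undo a logged change by restoring the original value."""
    return {**record, entry.field: entry.original}


audit_log: list = []
source = {"id": "42", "first_name": "Bob"}
updated = apply_rule(source, "first_name", "nickname-001",
                     lambda v: {"Bob": "Robert"}.get(v, v),
                     "nightly-batch", audit_log)
restored = revert(updated, audit_log[0])
```

Storing the original value alongside the result is what makes a change reversible. Storing the rule identifier and actor is what lets an auditor trace why it happened.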

What Are the Main Categories of Data Standardization Tools?

Data standardization tools fall into four categories, each with different strengths and limitations. Understanding where each category excels (and where it fails) prevents the most expensive mistake in tool selection: choosing a platform that solves the wrong problem.

Category 1: Dedicated Data Quality Platforms

Platforms like Informatica Data Quality, Trillium, and MatchLogic treat standardization as a core capability alongside profiling, matching, and deduplication. They include domain-specific parsers, reference libraries, and configurable rule engines. These tools are purpose-built for enterprises that need standardization as part of a broader data quality program. Their strength is depth: field-type-specific logic, postal authority integration, and transformation audit trails. Their limitation is cost and implementation complexity, which typically makes them a poor fit for teams standardizing a single spreadsheet.

Category 2: ETL and Data Integration Tools

Tools like Talend, Informatica PowerCenter, and Azure Data Factory include standardization as a transformation step within data pipelines. They are strong when standardization is embedded in a larger data movement workflow. Their limitation is that standardization logic is typically generic: string manipulation functions, lookup tables, and regex patterns rather than domain-specific parsers. Teams often build custom standardization logic in these tools, which works until the developer who built it leaves the organization.

Category 3: Standalone Cleansing Utilities

Tools like OpenRefine, Trifacta (now Alteryx Designer Cloud), and various open-source libraries (Python's usaddress, nameparser) handle specific standardization tasks well at small scale. They are excellent for ad hoc projects and data exploration. They typically lack scheduling, API access, audit trails, and the scalability needed for enterprise production workloads.

Category 4: CRM and ERP Native Tools

Salesforce, HubSpot, and SAP include built-in field validation and formatting rules. These prevent some standardization issues at the point of entry but cannot standardize data that already exists in the system or data imported from external sources. They are a first line of defense, not a standardization platform.

How Should Enterprises Choose Between Tool Categories?

The right tool category depends on three factors: the scope of your standardization needs, the regulatory environment, and your team's technical capacity.

If standardization is a one-time project (cleaning a dataset before a migration), a standalone utility or ETL tool is sufficient. If standardization is an ongoing operational requirement (maintaining data quality across multiple source systems feeding a master data repository), a dedicated data quality platform pays for itself within one to two budget cycles through reduced manual cleanup and improved matching accuracy.

If your industry requires audit trails, transformation lineage, and data residency (healthcare, financial services, government, defense), the tool must support on-premise deployment with field-level audit logging. Cloud-only tools that process data through external servers are often disqualified by procurement and compliance teams before evaluation begins.

If your data engineering team has fewer than three dedicated data quality professionals, prioritize tools with visual rule builders over platforms that require custom coding. According to a TDWI report on data quality practices, organizations that rely on hand-coded standardization rules face a 50% risk of losing, within five years, the core team member who built and understood the rules.

Enterprise Scenario: Standardization Before ERP Consolidation

A mid-market industrial distributor with 1,200 employees operates three ERP systems acquired through mergers: SAP (North America), Oracle (Europe), and a legacy AS/400 system (Asia-Pacific). The company plans to consolidate onto a single SAP S/4HANA instance. The combined vendor master contains 340,000 supplier records across the three systems.

Before standardization, a preliminary matching run using Jaro-Winkler string similarity identifies 12,400 potential duplicate pairs. The data quality team reviews a sample of 500 pairs and finds that 38% are false negatives (true duplicates the algorithm missed because of formatting differences) and 14% are false positives (non-duplicates flagged because unstandardized abbreviations created misleading similarity scores). The estimated total duplicate rate is 22% to 28%, representing 74,800 to 95,200 records.

After standardization, company name normalization (expanding abbreviations, removing legal suffixes, normalizing punctuation) increases match recall by 19%. Address standardization (parsing into components, normalizing street types, validating against postal reference files across 23 countries) increases address-field match accuracy from 71% to 93%. The second matching run identifies 31,200 duplicate pairs with a false positive rate under 3%, reducing the manual review queue from an estimated 4,200 hours to 680 hours.

The standardization phase took six weeks and cost $185,000 (software licensing, configuration, and staff time). The estimated savings from reduced manual review, prevented duplicate payments, and avoided data migration rework exceeded $1.4 million in the first year.
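
The scenario's figures can be checked with a few lines of arithmetic. The variable names are illustrative. The $1.4 million savings figure is stated above as a lower bound, so the return multiple computed here is also a lower bound.

```python
records = 340_000

# Estimated duplicate range: 22% to 28% of all supplier records.
duplicates_low = records * 22 // 100
duplicates_high = records * 28 // 100

# Manual review queue before and after standardization, in hours.
review_hours_before = 4_200
review_hours_after = 680
review_hours_saved = review_hours_before - review_hours_after
review_reduction = review_hours_saved / review_hours_before

# First-year net return relative to the cost of the standardization phase.
cost = 185_000
savings_floor = 1_400_000
net_return_multiple = (savings_floor - cost) / cost

print(f"Duplicates: {duplicates_low:,} to {duplicates_high:,} records")
print(f"Review hours saved: {review_hours_saved:,} ({review_reduction:.0%} reduction)")
print(f"Net first-year return: at least {net_return_multiple:.2f}x the cost")
```

The duplicate range matches the 74,800 to 95,200 records quoted in the scenario. The review queue shrinks by roughly 84%, and the net first-year return is at least about 6.6 times the $185,000 spent.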

How Do You Measure Whether Standardization Is Working?

Effective standardization programs track three categories of metrics: input quality, transformation rates, and downstream impact. Input quality metrics measure the percentage of records arriving in non-standard formats before processing. Transformation rate metrics track how many records required changes and what types of changes were applied. Downstream impact metrics measure the effect on matching accuracy, duplicate detection rates, and manual review volumes.

The most telling metric is the pre-standardization vs. post-standardization match rate. Run the same matching algorithm against the same dataset before and after standardization. The delta reveals the exact value standardization adds. In practice, organizations that measure this consistently find a 15% to 25% improvement in match recall, with name standardization contributing the largest share. If the delta is less than 5%, either the data was already clean or the standardization rules are too conservative.
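
A sketch of the before/after measurement, assuming a labeled set of known duplicate pairs is available as ground truth. The function names and the sample pairs are hypothetical. The sketch reports the delta both in percentage points and as a relative change, because a figure like "15% improvement" can mean either, and the two differ substantially.

```python
def canonical(pairs):
    """Treat (a, b) and (b, a) as the same pair."""
    return {tuple(sorted(pair)) for pair in pairs}


def recall(predicted_pairs, true_pairs) -> float:
    """Share of known duplicate pairs that the matcher found."""
    truth = canonical(true_pairs)
    if not truth:
        raise ValueError("ground truth must contain at least one pair")
    return len(canonical(predicted_pairs) & truth) / len(truth)


# Hypothetical record-ID pairs for illustration.
known_duplicates = [(1, 2), (3, 4), (5, 6), (7, 8)]
matches_before = [(2, 1), (3, 4), (9, 10)]        # run on raw data
matches_after = [(1, 2), (3, 4), (5, 6)]          # same algorithm, standardized data

recall_before = recall(matches_before, known_duplicates)
recall_after = recall(matches_after, known_duplicates)
delta_points = recall_after - recall_before
delta_relative = delta_points / recall_before
```

With this toy data, recall rises from 0.50 to 0.75: a gain of 25 percentage points, or 50% in relative terms. Stating which of the two is being reported avoids confusion when comparing results across projects or vendors.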

Track standardization exceptions separately. These are records the tool flags but cannot transform automatically, typically because the input is ambiguous (is "Alex" short for "Alexander" or "Alexandra"?), the field contains multiple values concatenated together, or the value does not appear in any reference library. A healthy exception rate is 2% to 5%. Above 10% suggests the rule set needs tuning or the source data requires upstream fixes before standardization can be effective.
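
The thresholds above translate into a simple monitoring check. The function names and labels are hypothetical. The text defines only the 2% to 5% healthy band and the above-10% warning level, so the "review" label for everything else is an assumption of this sketch.

```python
def exception_rate(total_records: int, exceptions: int) -> float:
    """Fraction of processed records flagged but not transformed automatically."""
    if total_records <= 0:
        raise ValueError("total_records must be positive")
    return exceptions / total_records


def assess_exception_rate(rate: float) -> str:
    """Map an exception rate onto the bands described in the text."""
    if rate > 0.10:
        return "tune rules or fix source data"
    if 0.02 <= rate <= 0.05:
        return "healthy"
    # Assumption: rates outside both stated bands get a neutral label.
    return "review"


status = assess_exception_rate(exception_rate(total_records=10_000, exceptions=300))
```

Here 300 exceptions in 10,000 records is a 3% rate, which falls in the healthy band. Tracking the rate per source system and per field type, rather than only in aggregate, makes it easier to tell a rule-set problem from an upstream data problem.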

What Are the Most Common Mistakes in Standardization Tool Selection?

The first mistake is treating standardization as an ETL step rather than a data quality discipline. ETL tools move data; standardization tools understand data. Conflating the two leads to generic string manipulation that mishandles domain-specific fields.

The second mistake is selecting a tool based on a demo against clean sample data. Every vendor demo works perfectly against curated datasets. Require vendors to process a representative sample of your actual production data, including records with missing fields, non-Latin characters, concatenated fields, and formatting inconsistencies. The difference between 95% accuracy on clean data and 72% accuracy on real data is the difference between a successful project and a failed one.

The third mistake is ignoring ongoing maintenance costs. Standardization rules require updates as postal codes change, company names evolve, and new data sources introduce unfamiliar formats. A tool that costs $50,000 in Year 1 but requires $30,000 in each of Years 2 and 3 for rule maintenance and reference data updates has a three-year TCO of $110,000, not $50,000. Ask vendors about the frequency and cost of reference data updates before signing.
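
The TCO arithmetic can be written as a one-line function. The function name is hypothetical, and it assumes maintenance is billed from Year 2 onward, which is the reading that produces the $110,000 figure.

```python
def multi_year_tco(year_one_cost: int, annual_maintenance: int, years: int = 3) -> int:
    """Year-one cost plus maintenance for each subsequent year."""
    if years < 1:
        raise ValueError("years must be at least 1")
    return year_one_cost + annual_maintenance * (years - 1)


tco = multi_year_tco(year_one_cost=50_000, annual_maintenance=30_000)
```

If a vendor also charges maintenance in Year 1, the same inputs give $140,000 over three years, so it is worth confirming when maintenance billing starts.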

The fourth mistake is evaluating tools in isolation from the rest of the data quality pipeline. Standardization feeds matching, matching feeds deduplication, deduplication feeds your golden record. A tool that standardizes data brilliantly but exports results in a format your matching engine cannot consume creates an integration gap that erodes every efficiency gain. Evaluate the full pipeline: standardization, profiling, matching, and merging as connected steps, not independent software purchases.

Frequently Asked Questions

What is the difference between data standardization and data normalization?

Data standardization transforms field values into uniform formats (converting "St." to "Street" or "Bob" to "Robert"). Data normalization restructures database schemas to reduce redundancy, typically through techniques like first, second, and third normal form. Standardization operates on values within fields; normalization operates on the relationships between tables. Both improve data quality but address different problems.

How long does an enterprise data standardization project typically take?

Initial implementation of a standardization tool takes 4 to 12 weeks for most enterprises, depending on the number of source systems, field types, and rule complexity. A single-source project with straightforward name and address standardization can go live in 4 weeks. Multi-source projects spanning 10+ systems with international address formats and custom business rules typically require 8 to 12 weeks.

Can data standardization tools handle international data?

Enterprise-grade tools include reference libraries for international address formats, name patterns (Eastern vs. Western name order, patronymic naming conventions), and date/number formats. Ask vendors specifically about coverage for your target geographies. Tools that only support USPS-based address standardization will fail on European, Asian, or Latin American address formats. ISO 8000 provides a framework for international data quality standards.

Do data standardization tools replace the need for data governance?

No. Standardization tools enforce rules; governance defines which rules to enforce, who owns the data, and how exceptions are handled. According to DAMA-DMBOK, data governance is the organizational framework that authorizes the policies standardization tools implement. Tools without governance produce consistent but potentially incorrect data. Governance without tools produces correct policies that nobody follows.

What is the ROI of investing in data standardization tools?

ROI depends on current data quality levels and downstream use cases. Organizations using standardization as a prerequisite to matching and deduplication typically see a 30% to 50% reduction in manual review time and a 15% to 25% improvement in match accuracy, according to TDWI research on data quality practices. For a team spending 2,000 hours per year on manual data cleanup at $75/hour loaded cost, a 40% reduction represents $60,000 in annual savings from labor alone, before counting avoided duplicate payments, improved marketing response rates, and reduced compliance risk.

Should standardization happen before or after data migration?

Before. Standardizing data after migration means migrating dirty data into a clean system, then cleaning it in place, which is more complex and risky than cleaning at the source. Pre-migration standardization also reveals data quality issues that may require schema changes in the target system, changes that are far cheaper to implement before go-live than after. Every major ERP implementation methodology (SAP Activate, Oracle Unified Method) includes data quality as a pre-migration workstream.
