Entity Matching Software: How Algorithms Identify the Same Real-World Object

Key Takeaways

- Entity matching is the comparison stage within entity resolution: the algorithms that determine whether two records describe the same real-world person, organization, or object.

- No single algorithm handles all data types. Effective entity matching software combines string similarity, phonetic, token-based, and probabilistic methods in a multi-layer pipeline.

- Algorithm selection should be driven by field type: Jaro-Winkler for short names, token-based TF-IDF for addresses, exact or tolerance matching for dates and numeric IDs.

- The precision/recall trade-off is a business decision, not a technical one: fraud detection demands high recall (catch everything), while CRM deduplication prioritizes high precision (avoid false merges).

- Transparent matching logic (seeing which algorithm fired on which field) is a regulatory requirement in healthcare, financial services, and government.

Entity matching software uses algorithms to compare records from one or more databases and determine whether they refer to the same real-world entity: a person, organization, product, or location. Matching is the core comparison step within the broader entity resolution process, which also includes data preparation, blocking, clustering, and golden record creation. The accuracy of the matching stage determines the quality of everything downstream: if the algorithms cannot detect that “Robert J. Smith at 123 Main St.” and “Bob Smith at 123 Main Street, Apt. 2” are the same person, the resulting customer profiles, compliance reports, and analytics will be built on fragmented data. This guide explains how the matching algorithms work, which algorithms fit which data types, and how enterprise data teams should evaluate entity matching capabilities.

Entity matching is one capability inside a broader entity resolution software platform, which also handles clustering, canonicalization, and survivorship.

How Is Entity Matching Different from Entity Resolution?

Entity matching is one stage in a multi-step pipeline. Entity resolution is the full process. Understanding this distinction clarifies what you are evaluating when you assess entity matching software.

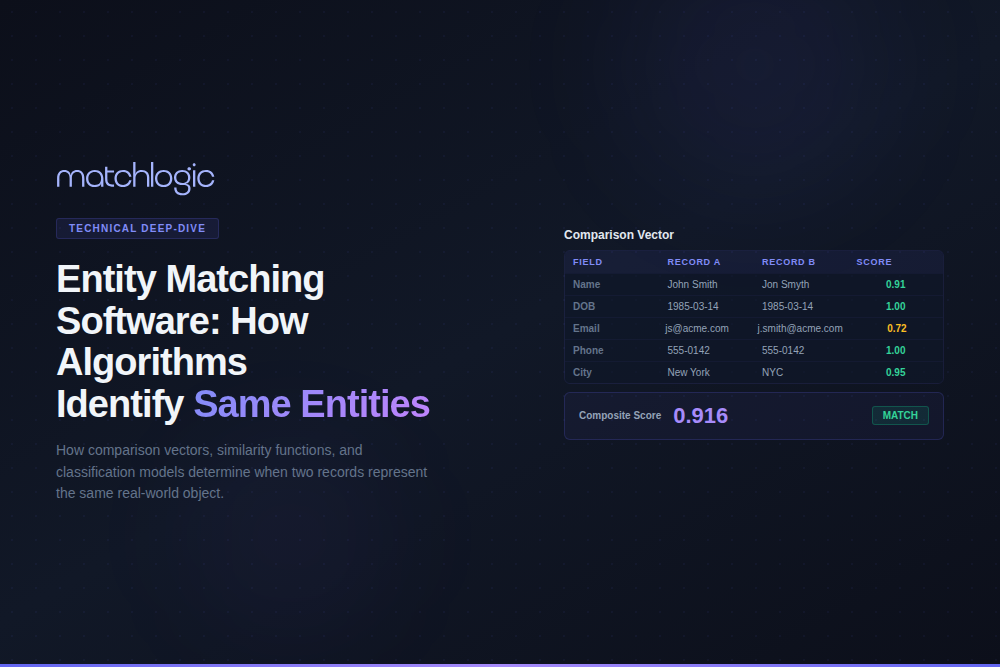

Matching takes two records and produces a similarity score or a match/non-match classification. It answers a narrow question: “How likely is it that Record A and Record B describe the same entity?” The matching stage does not decide what to do with that answer. It does not group records into entity clusters, resolve conflicting attribute values, or produce golden records.

Entity resolution wraps matching in a larger process: data ingestion, standardization, blocking (to reduce the comparison space), the matching stage itself, classification against thresholds, transitive clustering, survivorship rules, and golden record persistence. When vendors say “entity matching software,” they typically mean the matching algorithms embedded within their entity resolution platform, not a standalone comparison tool.

What Algorithms Does Entity Matching Software Use?

Entity matching algorithms fall into five categories. Production-grade entity matching software combines algorithms from multiple categories in a layered pipeline, because no single algorithm handles every data type and quality scenario effectively.

String Similarity Algorithms

String similarity algorithms measure the character-level distance between two text values. Levenshtein distance counts the minimum number of single-character insertions, deletions, or substitutions needed to transform one string into the other. “Smith” and “Smyth” have a Levenshtein distance of 1. Jaro-Winkler similarity is optimized for short strings (particularly names) and gives higher scores when the compared strings share a common prefix, reflecting the empirical observation that first-character errors are rarer than mid-string or end-of-string errors. Damerau-Levenshtein extends Levenshtein by also counting a transposition of two adjacent characters as a single edit (“recieve” vs. “receive”).
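The two edit distances described above can be sketched in a few lines of Python. This is a minimal illustration rather than an optimized implementation: the function names are our own, and the second function implements the optimal string alignment variant of Damerau-Levenshtein (adjacent transpositions only), which is an assumption about the variant intended.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of insertions, deletions, or substitutions."""
    prev = list(range(len(b) + 1))  # distances from "" to each prefix of b
    for i, ca in enumerate(a, 1):
        curr = [i]
        for j, cb in enumerate(b, 1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def osa_distance(a: str, b: str) -> int:
    """Levenshtein plus adjacent transpositions counted as one edit
    (optimal string alignment variant; an illustrative choice)."""
    rows, cols = len(a) + 1, len(b) + 1
    d = [[0] * cols for _ in range(rows)]
    for i in range(rows):
        d[i][0] = i
    for j in range(cols):
        d[0][j] = j
    for i in range(1, rows):
        for j in range(1, cols):
            cost = 0 if a[i - 1] == b[j - 1] else 1
            d[i][j] = min(d[i - 1][j] + 1,
                          d[i][j - 1] + 1,
                          d[i - 1][j - 1] + cost)
            # Two adjacent characters swapped: count as a single edit.
            if (i > 1 and j > 1
                    and a[i - 1] == b[j - 2] and a[i - 2] == b[j - 1]):
                d[i][j] = min(d[i][j], d[i - 2][j - 2] + 1)
    return d[-1][-1]


typo_distance = levenshtein("Smith", "Smyth")
swap_plain = levenshtein("recieve", "receive")
swap_osa = osa_distance("recieve", "receive")
```

With plain Levenshtein the swapped "ie" costs two substitutions, while the transposition-aware version counts it as one edit.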

String similarity works well for detecting typos, minor spelling variations, and abbreviation differences. It struggles with semantic equivalences (“Robert” vs. “Bob”), reordered tokens (“Smith, John” vs. “John Smith”), and strings of very different lengths where the edit distance is high even if the core content matches.
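A rough sketch of Jaro-Winkler similarity follows, assuming the conventional prefix scale of 0.1 and a maximum prefix length of 4 (neither value comes from this guide). It also illustrates the limitation just described: a nickname pair scores well below a typo pair.

```python
def jaro(s1: str, s2: str) -> float:
    """Jaro similarity in [0, 1]; 1.0 means identical strings."""
    if s1 == s2:
        return 1.0
    len1, len2 = len(s1), len(s2)
    if len1 == 0 or len2 == 0:
        return 0.0
    # Characters only count as matching if they are within this window.
    window = max(max(len1, len2) // 2 - 1, 0)
    matched1 = [False] * len1
    matched2 = [False] * len2
    matches = 0
    for i, ch in enumerate(s1):
        lo = max(0, i - window)
        hi = min(len2, i + window + 1)
        for j in range(lo, hi):
            if not matched2[j] and s2[j] == ch:
                matched1[i] = matched2[j] = True
                matches += 1
                break
    if matches == 0:
        return 0.0
    # Matched characters that appear in a different order are transpositions.
    seq1 = [c for c, m in zip(s1, matched1) if m]
    seq2 = [c for c, m in zip(s2, matched2) if m]
    transpositions = sum(a != b for a, b in zip(seq1, seq2)) // 2
    return (matches / len1
            + matches / len2
            + (matches - transpositions) / matches) / 3


def jaro_winkler(s1: str, s2: str,
                 prefix_scale: float = 0.1, max_prefix: int = 4) -> float:
    """Jaro similarity boosted by the length of the shared prefix."""
    sim = jaro(s1, s2)
    prefix = 0
    for a, b in zip(s1, s2):
        if a != b or prefix == max_prefix:
            break
        prefix += 1
    return sim + prefix * prefix_scale * (1.0 - sim)


typo_score = jaro_winkler("Smith", "Smyth")      # shared prefix "Sm"
nickname_score = jaro_winkler("Robert", "Bob")   # no shared prefix
```

The comparison is case-sensitive as written; in practice, names would usually be normalized before scoring.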

Phonetic Algorithms

Phonetic algorithms encode strings based on how they sound rather than how they are spelled. Soundex, patented in 1918 and later used to index U.S. Census records, encodes names into a letter-plus-three-digits code (“Smith” and “Smythe” both encode as S530). Metaphone and Double Metaphone improve on Soundex by handling a wider range of English phonetic rules and producing multiple encodings for names with ambiguous pronunciation. NYSIIS (New York State Identification and Intelligence System) is optimized for names common in New York’s diverse population.

Phonetic algorithms are most useful for person name matching where spelling variation is high but pronunciation is consistent. They produce false positives for names that sound alike but refer to different people (a common challenge with surnames like “Smith” and “Schmidt” in multilingual datasets). They are not applicable to non-name fields like addresses, dates, or numeric identifiers.
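To make the encoding concrete, here is a small sketch of American Soundex. The function name and the handling of empty input are our own choices. It also shows the false-positive risk mentioned above, since “Smith” and “Schmidt” receive the same code.

```python
# Consonant groups used by American Soundex.
_GROUPS = {
    "1": "BFPV",
    "2": "CGJKQSXZ",
    "3": "DT",
    "4": "L",
    "5": "MN",
    "6": "R",
}
_CODES = {ch: digit for digit, letters in _GROUPS.items() for ch in letters}


def soundex(name: str) -> str:
    """Return the four-character Soundex code, or "" for empty input."""
    letters = [c for c in name.upper() if c.isalpha()]
    if not letters:
        return ""
    first = letters[0]
    digits = []
    prev = _CODES.get(first, "")
    for ch in letters[1:]:
        if ch in "HW":
            continue  # H and W do not separate letters with the same code
        code = _CODES.get(ch, "")
        if code and code != prev:
            digits.append(code)
        prev = code  # vowels reset prev, so repeated codes can recur
    # Pad with zeros and truncate to letter + three digits.
    return (first + "".join(digits) + "000")[:4]


codes = {name: soundex(name) for name in ("Smith", "Smythe", "Schmidt")}
```

All three names map to S530, which is useful for catching spelling variants and equally a reason to treat a phonetic hit as supporting evidence rather than a match on its own.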

Token-Based Algorithms

Token-based algorithms split strings into words (tokens) and compare the sets rather than the character sequences. Jaccard similarity calculates the size of the intersection divided by the size of the union of two token sets. Cosine similarity using TF-IDF (Term Frequency-Inverse Document Frequency) weights each token by how informative it is: a matching city name like “Springfield” (common) contributes less to the similarity score than a matching suite number like “Suite 4200” (rare).

Token-based algorithms excel at address and company name matching, where word order varies (“123 Main Street, Suite 200” vs. “Suite 200, 123 Main St”) and some tokens are informative while others (like “Inc.” or “LLC”) are noise. They handle long strings better than character-level algorithms because they are order-independent and can be weighted by token significance.
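The sketch below shows both token-based measures on the address example. It assumes a simple lowercase alphanumeric tokenizer and a smoothed IDF formula; both are illustrative choices, and the tiny corpus is invented for the example.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list:
    """Lowercase and split on anything that is not a letter or digit."""
    return re.findall(r"[a-z0-9]+", text.lower())


def jaccard(a: str, b: str) -> float:
    """Size of the token intersection divided by the size of the union."""
    sa, sb = set(tokenize(a)), set(tokenize(b))
    if not sa and not sb:
        return 1.0
    return len(sa & sb) / len(sa | sb)


def idf_table(corpus: list) -> dict:
    """Smoothed inverse document frequency: rarer tokens get larger weights."""
    n = len(corpus)
    df = Counter()
    for doc in corpus:
        df.update(set(tokenize(doc)))
    return {tok: math.log((1 + n) / (1 + count)) + 1.0
            for tok, count in df.items()}


def tfidf_vector(text: str, idf: dict) -> dict:
    """Sparse TF-IDF vector; tokens unseen in the corpus are treated as rare."""
    default = max(idf.values(), default=1.0)
    tf = Counter(tokenize(text))
    return {tok: count * idf.get(tok, default) for tok, count in tf.items()}


def cosine(u: dict, v: dict) -> float:
    dot = sum(w * v.get(tok, 0.0) for tok, w in u.items())
    norm_u = math.sqrt(sum(w * w for w in u.values()))
    norm_v = math.sqrt(sum(w * w for w in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


# Invented mini-corpus: "springfield" is common, "4200" is rare.
corpus = [
    "100 Oak Ave Springfield",
    "200 Elm St Springfield",
    "Suite 4200 Pine Rd Springfield",
]
idf = idf_table(corpus)

a = "123 Main Street, Suite 200"
b = "Suite 200, 123 Main St"
token_overlap = jaccard(a, b)
weighted_similarity = cosine(tfidf_vector(a, idf), tfidf_vector(b, idf))
```

Note that “Street” and “St” are still different tokens here, so standardizing abbreviations before tokenizing would raise both scores.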

Probabilistic Matching

Probabilistic matching, grounded in the Fellegi-Sunter model (1969), assigns weights to each field comparison based on the field’s discriminating power. Agreement on a rare last name (“Wojciechowski”) carries more weight than agreement on a common first name (“John”). The model calculates a log-likelihood ratio for each field comparison, and the sum of these ratios across all fields determines the composite match score.

Probabilistic matching is the dominant approach in government, healthcare, and public sector entity matching, where the Fellegi-Sunter model’s mathematical rigor provides auditable decision logic. The model can be estimated without labeled training data using the Expectation-Maximization algorithm, which is a significant advantage for organizations that cannot afford to manually label thousands of record pairs before starting.
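As a simplified sketch of Fellegi-Sunter scoring, the snippet below computes a base-2 log-likelihood ratio per field and sums them. The m and u values are hypothetical numbers chosen for illustration, the comparisons are reduced to agree/disagree/missing (real systems often use graded agreement levels), and estimating m and u with Expectation-Maximization is not shown.

```python
import math


def field_weight(agrees, m: float, u: float) -> float:
    """Log2 likelihood ratio for one field comparison.

    agrees: True (values agree), False (disagree), or None (value missing).
    m: probability the field agrees when the records truly match.
    u: probability the field agrees by chance when they do not match.
    """
    if agrees is None:
        return 0.0  # missing data neither supports nor opposes the match
    if agrees:
        return math.log2(m / u)
    return math.log2((1 - m) / (1 - u))


def composite_score(comparisons: dict, params: dict) -> float:
    """Sum of field weights; assumes fields are conditionally independent."""
    return sum(field_weight(comparisons.get(name), m, u)
               for name, (m, u) in params.items())


# Hypothetical (m, u) pairs: a rare surname agrees by chance far less often
# than a common first name, so its agreement weight is much larger.
params = {
    "last_name_rare": (0.95, 0.0001),
    "first_name_common": (0.90, 0.05),
    "phone": (0.85, 0.001),
}
comparisons = {"last_name_rare": True, "first_name_common": True, "phone": None}
score = composite_score(comparisons, params)
```

Agreement adds a positive weight and disagreement a negative one, and the size of each depends on how unlikely chance agreement is for that field.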

Machine Learning-Based Matching

ML-based matching trains a binary classifier (match vs. non-match) on labeled record pairs. Supervised approaches (random forests, gradient boosting, neural networks) require labeled training data but can learn complex, non-linear patterns across fields that rule-based and probabilistic methods miss. Active learning reduces the labeling burden by iteratively selecting the most informative record pairs for human review, training the model on a minimal labeled set.

The trade-off is explainability. A gradient-boosted classifier that returns a 0.87 match probability without revealing which features drove the decision creates compliance challenges in regulated industries. Enterprise entity matching software that uses ML should provide feature importance scores or SHAP values that auditors can review. Without explainability, ML-based matching is best suited for use cases where accuracy is the primary concern and regulatory explainability requirements are minimal.
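One common way to pick “the most informative record pairs” in active learning is uncertainty sampling: send reviewers the pairs whose predicted match probability is closest to 0.5. The sketch below assumes that strategy and a hypothetical list of (pair_id, probability) tuples; it does not describe any particular product's selection logic.

```python
def select_for_review(scored_pairs, k: int):
    """Return the ids of the k pairs the current model is least sure about.

    scored_pairs: iterable of (pair_id, match_probability) tuples.
    """
    ranked = sorted(scored_pairs, key=lambda item: abs(item[1] - 0.5))
    return [pair_id for pair_id, _ in ranked[:k]]


# Hypothetical model outputs for four candidate pairs.
scored = [("a", 0.97), ("b", 0.52), ("c", 0.10), ("d", 0.45)]
to_label = select_for_review(scored, k=2)
```

After reviewers label the selected pairs, the classifier is retrained and the selection step is repeated until accuracy on a held-out sample stops improving.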

| Algorithm | Best Field Types | Strengths | Weaknesses | Complexity |
|---|---|---|---|---|
| Jaro-Winkler | Short person names, first/last name fields. | Fast. Prefix-weighted. Handles transposition errors well. | Poor for long strings. Cannot handle reordering or semantic equivalence. | O(m×n) worst case, but very cheap in practice on short strings. |
| Levenshtein | Short to medium text fields with typo/spelling variation. | Intuitive (counts edits). Well-understood. Widely implemented. | Expensive for long strings. Does not handle reordering. | O(m×n) where m, n are string lengths. |
| Double Metaphone | Person names, especially multilingual datasets. | Sound-based. Catches phonetic variations. Two encodings for ambiguous names. | High false positive rate for common phonetic groups. Not applicable to non-name fields. | O(n) per encoding. Low cost. |
| TF-IDF Cosine | Addresses, company names, product descriptions. | Order-independent. Weights informative tokens higher. Handles variable-length strings. | Requires tokenization and IDF pre-computation. Less effective for short strings. | O(n) in the number of tokens per comparison, after IDF indexing. |
| Fellegi-Sunter | Multi-field composite matching across all field types. | Mathematically rigorous. Auditable. Can be estimated unsupervised (EM algorithm). | Assumes field independence (often violated). Requires m/u probability estimation. | Depends on number of fields. Moderate cost per pair. |
| ML Classifier | Complex, high-volume datasets where patterns are non-linear. | Can learn interactions between fields. Highest accuracy ceiling. | Requires labeled data. Black-box unless explainability layer added. Overfitting risk. | Training: O(n log n) to O(n²). Inference: O(1) per pair. |

How Do Entity Matching Algorithms Combine in a Production Pipeline?

Enterprise entity matching software does not run a single algorithm against all fields. It runs different algorithms on different fields and combines the results. A typical multi-layer matching pipeline works as follows.

Layer 1 (field-specific comparison): Jaro-Winkler on first name (score: 0.92), Jaro-Winkler on last name (score: 0.88), Double Metaphone on last name (result: same phonetic code), TF-IDF cosine on full address (score: 0.85), exact match on date of birth (result: match), exact match on phone number (result: no data in Record B).

Layer 2 (composite scoring): The Fellegi-Sunter model calculates match weights for each field based on the field’s m-probability and u-probability. The rare last name “Wojciechowski” with a 0.88 Jaro-Winkler score contributes a much higher weight than the common first name “John” with a 0.92 score, because the u-probability (agreement by chance) is vastly lower for the rare surname. Missing data fields (phone number) are handled by assigning a neutral weight that neither supports nor opposes the match.

Layer 3 (classification): The composite score is compared against upper and lower thresholds. Above the upper threshold: auto-match. Below the lower threshold: auto-reject. Between the thresholds: route to manual review queue. The threshold values are set by the business based on their tolerance for false positives vs. false negatives.
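The classification layer reduces to a two-threshold rule, sketched below. The label strings and the boundary handling (a score exactly at the upper threshold auto-matches, a score exactly at the lower threshold goes to review) are assumptions for illustration.

```python
def classify(score: float, lower: float, upper: float) -> str:
    """Map a composite score to one of three outcomes."""
    if lower > upper:
        raise ValueError("lower threshold must not exceed upper threshold")
    if score >= upper:
        return "auto-match"
    if score < lower:
        return "auto-reject"
    return "manual-review"


# Hypothetical thresholds on a log-likelihood-style composite score.
decisions = [classify(s, lower=0.0, upper=10.0) for s in (12.5, 4.0, -3.2)]
```

Narrowing the gap between the two thresholds shrinks the manual review queue at the cost of more automated errors on borderline pairs.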

How Should You Balance Precision and Recall in Entity Matching?

The precision/recall trade-off is the single most important configuration decision in entity matching. Precision measures the percentage of predicted matches that are actually correct (avoiding false positives). Recall measures the percentage of true matches that the system successfully identifies (avoiding false negatives). Increasing one typically decreases the other.
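These definitions translate directly into code. The sketch below computes precision, recall, and F1 from counts of true positives, false positives, and false negatives; the counts used are invented for illustration.

```python
def precision_recall_f1(tp: int, fp: int, fn: int):
    """Return (precision, recall, f1) from match-decision counts.

    tp: predicted matches that are real matches
    fp: predicted matches that are actually different entities
    fn: real matches the system failed to link
    """
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Invented counts: 90 correct links, 10 false merges, 30 missed links.
precision, recall, f1 = precision_recall_f1(tp=90, fp=10, fn=30)
```

Here precision is 0.90 and recall is 0.75: most predicted matches are right, but a quarter of the real matches are being missed.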

This trade-off is not a technical optimization problem. It is a business decision. In CRM deduplication, false positives (incorrectly merging two different customers into one record) destroy customer data and can trigger downstream errors in billing, communications, and reporting. High precision is critical. In fraud detection, false negatives (failing to link a fraudulent entity’s records across systems) allow bad actors to operate undetected. High recall is critical, even at the cost of more false positives entering the manual review queue.

Enterprise entity matching software should allow data engineers to set precision/recall trade-offs per entity type and per use case. Customer deduplication might require 98% precision with 90% recall, while sanctions screening might require 99.5% recall even if precision drops to 85%. MatchLogic’s configurable thresholds and field-level weight adjustments allow these trade-offs to be tuned per matching job.
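One way to turn a target such as “at least 98% precision” into a threshold is to sweep candidate thresholds over a labeled sample and keep the lowest one that meets the target, since lower thresholds give higher recall. The sketch below assumes a list of (score, is_match) pairs from such a sample; the data and function names are illustrative and do not describe MatchLogic's tuning procedure.

```python
def precision_recall_at(threshold: float, scored_pairs):
    """Precision and recall when every pair scoring >= threshold is a match."""
    tp = sum(1 for s, is_match in scored_pairs if s >= threshold and is_match)
    fp = sum(1 for s, is_match in scored_pairs if s >= threshold and not is_match)
    fn = sum(1 for s, is_match in scored_pairs if s < threshold and is_match)
    precision = tp / (tp + fp) if (tp + fp) else 1.0  # no predictions made
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


def lowest_threshold_meeting_precision(scored_pairs, min_precision: float):
    """Lowest observed score usable as a threshold that still meets the
    precision target, or None if no threshold does. Recall never increases
    as the threshold rises, so the lowest qualifying threshold also has the
    highest recall."""
    for threshold in sorted({s for s, _ in scored_pairs}):
        precision, _ = precision_recall_at(threshold, scored_pairs)
        if precision >= min_precision:
            return threshold
    return None


# Invented labeled sample: (composite score, reviewer says it is a match).
sample = [(0.95, True), (0.90, True), (0.80, False),
          (0.75, True), (0.60, False), (0.40, False)]

dedup_threshold = lowest_threshold_meeting_precision(sample, min_precision=0.98)
lenient_threshold = lowest_threshold_meeting_precision(sample, min_precision=0.70)
```

A recall-first use case such as sanctions screening would run the mirror-image search: the highest threshold that still meets a minimum recall.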

What Does Algorithm Selection Look Like for a Real Data Challenge?

Consider a national retail chain with 8.5 million customer records across its e-commerce platform, in-store POS system, and loyalty program database. The data team needs to create a unified customer view for a personalization initiative. The three systems store customer data with different conventions: the e-commerce platform captures full legal names, the POS system stores names as entered by cashiers (often abbreviated or misspelled), and the loyalty system uses the name printed on the member’s credit card.

The data team configures their entity matching pipeline as follows. For name matching: Jaro-Winkler on first name (threshold: 0.82) combined with Double Metaphone as a secondary check for phonetic equivalence. This catches “Michael” vs. “Micheal” (typo) and “Katherine” vs. “Catherine” (phonetic variant). For address matching: TF-IDF cosine similarity on the concatenated address field (threshold: 0.78), which handles “123 Main Street, Apt 4” vs. “Apt. 4, 123 Main St.” without requiring identical word order. For email matching: exact match after lowercasing and whitespace stripping. For phone matching: exact match after normalizing to 10-digit format.
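The email and phone rules in this configuration depend on normalization before the exact comparison. A minimal sketch follows, assuming North American numbers where a leading country code of 1 can be dropped; that assumption and the function names are ours, not part of the case described.

```python
import re


def normalize_email(raw: str) -> str:
    """Lowercase and strip surrounding whitespace."""
    return raw.strip().lower()


def normalize_phone(raw: str):
    """Reduce a phone number to 10 digits, or None if that is not possible."""
    digits = re.sub(r"\D", "", raw)
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]  # drop the country code (assumed North American)
    return digits if len(digits) == 10 else None


def exact_match(a, b):
    """True/False when both values are present, None when either is missing."""
    if not a or not b:
        return None
    return a == b


email_agrees = exact_match(normalize_email("  Jane.Doe@Example.COM "),
                           normalize_email("jane.doe@example.com"))
phone_agrees = exact_match(normalize_phone("(555) 123-4567"),
                           normalize_phone("+1 555.123.4567"))
```

Returning None for missing values lets the scoring layer treat the field as neutral instead of counting it as a disagreement.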

The composite scoring layer uses Fellegi-Sunter weights calibrated against a labeled sample of 800 record pairs reviewed by the data stewardship team. The upper auto-match threshold captures 72% of true matches with 99.1% precision. The manual review zone captures an additional 18% of true matches. The remaining 10% of true matches fall below the lower threshold and are left as distinct customers, so they count as missed matches (false negatives). The overall F1 score across the complete pipeline is 0.94, compared to 0.71 when the team previously attempted exact matching on name + email alone.

What Should You Look For in Entity Matching Software?

When evaluating entity matching capabilities within an entity resolution platform, focus on five factors.

Algorithm breadth: does the platform support string similarity, phonetic, token-based, probabilistic, and ML-based methods? Platforms limited to one or two algorithm types will underperform on data types those algorithms are not designed for.

Field-level configurability: can you assign different algorithms and thresholds to different fields? Name fields, address fields, date fields, and numeric identifiers all require different treatment. A platform that applies the same algorithm across all fields sacrifices accuracy.

Transparency: can you see which algorithm fired on which field, what score it produced, and how those scores combined into the composite match decision? This is not optional for regulated industries. MatchLogic provides field-level match explanations showing every algorithm’s contribution to each decision.

Threshold configurability: can you set different precision/recall trade-offs per entity type and per matching job? And can you adjust thresholds without re-running the entire matching process from scratch?

Performance at scale: how does matching speed degrade as record counts increase from 1 million to 10 million to 100 million? The answer depends on the platform’s blocking strategy, parallelization support, and whether it can leverage distributed compute frameworks.

Frequently Asked Questions

What is entity matching software?

Entity matching software uses algorithms to compare records from one or more databases and determine whether they refer to the same real-world entity. It is the comparison stage within the broader entity resolution process. The software applies string similarity, phonetic, token-based, probabilistic, and ML-based algorithms to calculate match scores, which are then classified against configurable thresholds to produce match, non-match, or possible-match decisions.

What is the difference between entity matching and fuzzy matching?

Fuzzy matching refers to the specific algorithms (Jaro-Winkler, Levenshtein, Soundex, etc.) that compare strings for approximate similarity. Entity matching is the broader process of applying fuzzy matching along with other techniques (probabilistic scoring, ML classification, token-based comparison) across multiple fields to produce a composite match decision. Fuzzy matching is one tool within the entity matching toolkit.

Which algorithm is best for matching person names?

Jaro-Winkler similarity is the most widely used algorithm for short person name matching because it is prefix-weighted (reflecting the fact that first-character errors are rare) and handles transposition errors well. For multilingual or phonetically variable names, combine Jaro-Winkler with Double Metaphone to catch both spelling and phonetic similarities. No single algorithm handles all name variations; effective person name matching requires a combination.

How do I set the right match threshold?

Match thresholds are a business decision driven by the cost of errors. If false positives (merging records that should remain separate) are more costly than false negatives (missing true matches), set a higher threshold for higher precision. If missing a true match is more costly (as in fraud detection or compliance screening), lower the threshold for higher recall and route additional borderline cases to manual review.

Can entity matching software handle multilingual data?

Some platforms can; many cannot. Multilingual matching requires Unicode support, language-specific phonetic algorithms (Soundex is English-only; Double Metaphone handles broader Latin-script languages), transliteration for non-Latin scripts, and cultural awareness of name ordering conventions (given-family vs. family-given). Verify multilingual capabilities with your actual data, not the vendor’s marketing claims.

How does entity matching relate to data quality?

Matching accuracy is directly dependent on data quality. Unstandardized fields (mixed date formats, inconsistent abbreviations, concatenated name fields) reduce match recall. Data profiling and standardization before the matching stage can improve match accuracy by 10% to 20%, according to research cited by Peter Christen in Data Matching (Springer, 2012). Platforms that integrate profiling, cleansing, and matching in a single pipeline (like MatchLogic) eliminate the data handoff that often introduces errors.
