Entity Resolution and Data Linkage: Connecting the Dots Across Databases

Key Takeaways

- Data linkage connects records across separate databases that describe the same entity; entity resolution adds clustering and golden record creation on top of linkage.
- Record linkage originated in 1946 with Halbert Dunn's vital statistics work and was formalized by the Fellegi-Sunter probability model in 1969.
- Blocking reduces the computational cost of cross-database linkage from quadratic (n²) to near-linear by partitioning records into comparable groups.
- Privacy-preserving record linkage (PPRL) enables cross-organization linkage without exposing raw PII, using techniques like Bloom filters and secure multi-party computation.
- Transitive closure connects records that were never directly compared: if A matches B and B matches C, all three are linked to the same entity.

Entity resolution data linkage is the process of identifying records across two or more separate databases that refer to the same real-world entity (a person, organization, product, or location) and connecting them into a unified view, even when no shared unique identifier exists between the systems. Data linkage is the matching mechanism; entity resolution is the broader process that uses linkage results to cluster, merge, and produce a single trusted profile for each entity. For enterprises operating across multiple CRM, ERP, billing, and operational systems, cross-database linkage is the technical foundation that makes single-customer views, master data management, and regulatory compliance achievable. This guide covers the relationship between data linkage and entity resolution, the techniques that make cross-database matching work, and how to implement linkage at enterprise scale.

For evaluation criteria when selecting a platform to run this workflow, see our entity resolution software guide.

How Are Data Linkage and Entity Resolution Related?

The terms “data linkage,” “record linkage,” and “entity resolution” are frequently used interchangeably, but they describe different scopes of the same problem. Understanding the distinction matters because it determines which capabilities you need from your tooling.

Record linkage (also called data linkage) is the task of comparing records from one or more data sets and determining which record pairs refer to the same entity. The output is a set of linked pairs with associated confidence scores. The term was coined in 1946 by Halbert Dunn in the context of linking vital statistics records (birth, death, marriage certificates) across U.S. state registries. The mathematical foundation was established in 1969 by Ivan Fellegi and Alan Sunter, whose probabilistic model remains the basis of most modern record linkage implementations.

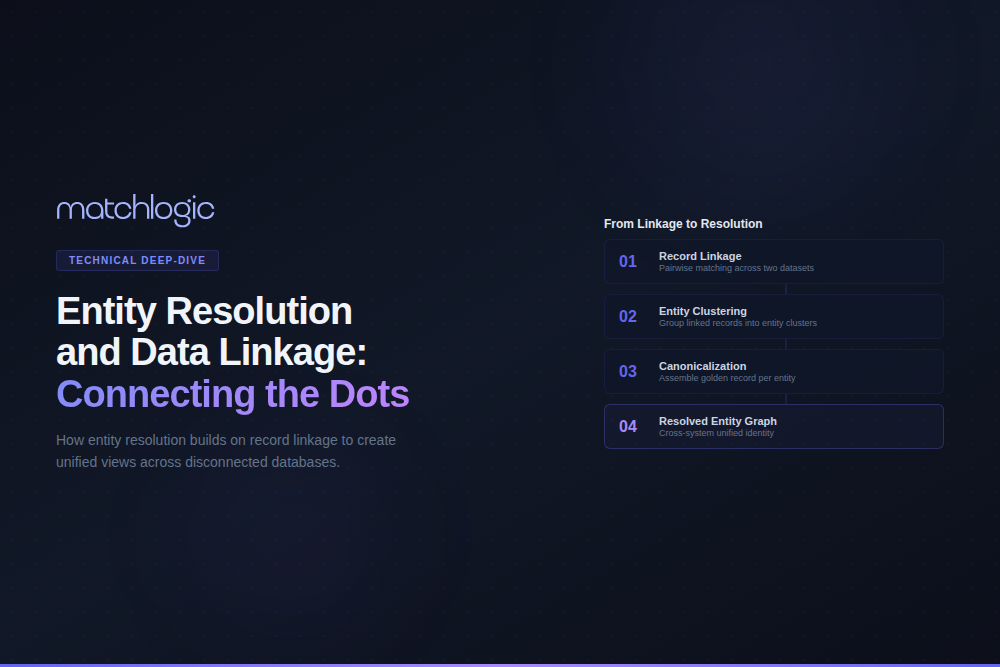

Entity resolution starts where record linkage ends. It takes the linked pairs, applies transitive closure to form entity clusters (if A matches B and B matches C, then A, B, and C are all the same entity), resolves conflicting attribute values using survivorship rules, and produces a canonical “golden record” for each entity. Put simply, record linkage answers the question “do these two records describe the same thing?” while entity resolution answers “who is this entity, given everything we know from every system?”
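To make the survivorship step concrete, here is a minimal sketch. It assumes one simple rule, "the most recently updated non-empty value wins"; the `source` and `updated` fields and the sample records are hypothetical, and real platforms typically let you configure rules per attribute.

```python
def build_golden_record(cluster: list[dict]) -> dict:
    """Pick, for each attribute, the newest non-empty value in the cluster."""
    # Newest records first; ISO 8601 date strings sort chronologically.
    newest_first = sorted(cluster, key=lambda r: r["updated"], reverse=True)
    metadata = {"source", "updated"}  # bookkeeping fields, not entity attributes
    attributes = sorted({key for rec in cluster for key in rec} - metadata)

    golden = {}
    for attr in attributes:
        for rec in newest_first:
            if rec.get(attr):  # skip missing or empty values
                golden[attr] = rec[attr]
                break
    return golden


# Hypothetical cluster of three linked records describing one person.
cluster = [
    {"source": "crm", "updated": "2023-04-01", "name": "Maria Garcia", "phone": ""},
    {"source": "billing", "updated": "2024-01-15", "name": "Maria G. Garcia",
     "email": "maria@example.com"},
    {"source": "erp", "updated": "2022-11-30", "name": "M. Garcia",
     "phone": "+15550104477"},
]
golden = build_golden_record(cluster)
print(golden)
```

The name and email come from the newest record, while the phone number falls back to the oldest record because it is the only one that holds a value.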

| Aspect | Record Linkage (Data Linkage) | Entity Resolution |
|---|---|---|
| Scope | Pairwise comparison between records across one or more datasets. | End-to-end process: linkage + clustering + survivorship + golden record creation. |
| Output | Linked record pairs with match/non-match classification and confidence scores. | Unified entity profiles (golden records) with full data lineage. |
| Handles Transitivity | No. Evaluates pairs independently. A-B match and B-C match does not automatically link A-C. | Yes. Transitive closure or graph-based clustering connects all related records. |
| Conflict Resolution | Not included. Returns linked pairs without resolving which attribute values to keep. | Survivorship rules determine which source's values populate the golden record. |
| Use Case Fit | Research studies, one-time data integration projects, epidemiological record linking. | Ongoing MDM, Customer 360, patient matching (EMPI), KYC/AML compliance. |
| Historical Origin | Dunn (1946), Fellegi-Sunter model (1969). Rooted in public health and statistics. | Evolved from record linkage in the 2000s as MDM and data integration became enterprise priorities. |

How Does Cross-Database Record Linkage Work?

Cross-database linkage follows a structured pipeline designed to find true matches efficiently while minimizing both false positives (records incorrectly linked) and false negatives (true matches missed). The pipeline addresses a fundamental computational challenge: comparing every record in Database A against every record in Database B produces n × m candidate pairs. For two systems with 5 million records each, that is 25 trillion comparisons, which is not feasible even with modern hardware.
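The arithmetic behind that figure is easy to check. The sketch below also converts the pair count into wall-clock time under an assumed throughput of one million comparisons per second on a single worker; that rate is an illustrative assumption, not a benchmark.

```python
def cross_pairs(n: int, m: int) -> int:
    """Candidate pairs when every record in A is compared with every record in B."""
    return n * m


pairs = cross_pairs(5_000_000, 5_000_000)

# Assumed throughput for illustration only.
ASSUMED_COMPARISONS_PER_SECOND = 1_000_000
days = pairs / ASSUMED_COMPARISONS_PER_SECOND / 86_400  # 86,400 seconds per day

print(f"{pairs:,} pairs, about {days:.0f} days on one worker")
# 25,000,000,000,000 pairs, about 289 days on one worker
```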

Step 1: Schema Harmonization

Before records can be compared, the fields used for matching must be mapped across schemas. Database A may store names as “First_Name” and “Last_Name” while Database B uses a single “Full_Name” field. Date formats differ (MM/DD/YYYY vs. DD-MM-YYYY vs. ISO 8601). Address fields may be concatenated or split across street, city, state, and ZIP. Schema harmonization creates a common field structure without modifying the source data.
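A minimal sketch of this mapping follows. The source field names `DOB` and `Birth_Date` and the "last token is the surname" split are assumptions made for illustration; real name parsing needs more care. Each function returns a new dictionary, so the source records are left unmodified.

```python
from datetime import datetime


def harmonize_a(rec: dict) -> dict:
    """Database A: separate name fields, MM/DD/YYYY dates."""
    return {
        "first_name": rec["First_Name"],
        "last_name": rec["Last_Name"],
        "dob": datetime.strptime(rec["DOB"], "%m/%d/%Y").date().isoformat(),
    }


def harmonize_b(rec: dict) -> dict:
    """Database B: single Full_Name field, DD-MM-YYYY dates."""
    # Naive assumption: everything before the last space is the first name.
    first, _, last = rec["Full_Name"].strip().rpartition(" ")
    return {
        "first_name": first,
        "last_name": last,
        "dob": datetime.strptime(rec["Birth_Date"], "%d-%m-%Y").date().isoformat(),
    }


a = harmonize_a({"First_Name": "Maria", "Last_Name": "Garcia", "DOB": "03/07/1985"})
b = harmonize_b({"Full_Name": "Maria Garcia", "Birth_Date": "07-03-1985"})
print(a == b)  # both records now share one structure and ISO 8601 dates
```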

Step 2: Data Standardization

Standardization normalizes field values to reduce noise before comparison. Name standardization expands nicknames (“Bob” → “Robert”), removes honorifics, and normalizes casing. Address standardization resolves abbreviations (“St.” → “Street,” “Apt” → “Apartment”) and validates against postal reference files. Phone numbers are normalized to a standard format (country code + area code + number). The quality of this step directly affects downstream match accuracy; studies cited by Peter Christen in “Data Matching” (Springer, 2012) show that standardization alone can improve match recall by 10% to 20%.
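The standardization rules above can be sketched with small lookup tables. The tables here are tiny, hypothetical samples; production systems rely on curated nickname dictionaries and postal reference data, and a bare "St" can also mean "Saint", which this sketch does not handle.

```python
import re

# Hypothetical sample tables; real ones are far larger.
NICKNAMES = {"bob": "robert", "bill": "william", "liz": "elizabeth"}
HONORIFICS = {"mr", "mrs", "ms", "dr", "prof"}
ADDRESS_ABBREVIATIONS = {"st": "street", "apt": "apartment", "ave": "avenue"}


def _tokens(raw: str) -> list[str]:
    """Lowercase, replace punctuation with spaces, split on whitespace."""
    return re.sub(r"[^\w\s]", " ", raw.lower()).split()


def standardize_name(raw: str) -> str:
    tokens = [t for t in _tokens(raw) if t not in HONORIFICS]
    return " ".join(NICKNAMES.get(t, t) for t in tokens)


def standardize_address(raw: str) -> str:
    return " ".join(ADDRESS_ABBREVIATIONS.get(t, t) for t in _tokens(raw))


def standardize_phone(raw: str, default_country_code: str = "1") -> str:
    """Keep digits only; prepend an assumed country code to 10-digit numbers."""
    digits = re.sub(r"\D", "", raw)
    if len(digits) == 10:
        digits = default_country_code + digits
    return "+" + digits


print(standardize_name("Dr. Bob Smith"))          # robert smith
print(standardize_address("12 Main St., Apt 4"))  # 12 main street apartment 4
print(standardize_phone("(555) 010-4477"))        # +15550104477
```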

Step 3: Blocking

Blocking partitions records into groups that share a common attribute value (the blocking key), so that only records within the same block are compared. A blocking key of “first three characters of last name + ZIP code” reduces the 25 trillion comparison space to a manageable fraction. The trade-off is coverage: overly restrictive blocking keys miss matches where the blocking attribute itself contains errors. Advanced strategies such as sorted neighborhood (a sliding window over records sorted by key), canopy clustering, and LSH (locality-sensitive hashing) produce overlapping candidate groups, which increases recall while keeping the comparison count manageable.
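Here is a minimal sketch of single-key blocking with the key described above. The record layout (`id`, `last_name`, `zip`) and the sample data are hypothetical.

```python
from collections import defaultdict


def blocking_key(rec: dict) -> str:
    """First three characters of the last name plus the ZIP code."""
    return rec["last_name"][:3].lower() + "|" + rec["zip"]


def blocked_pairs(db_a: list[dict], db_b: list[dict]):
    """Yield only the (A, B) id pairs whose records fall in the same block."""
    blocks_b = defaultdict(list)
    for rec in db_b:
        blocks_b[blocking_key(rec)].append(rec)
    for rec_a in db_a:
        for rec_b in blocks_b.get(blocking_key(rec_a), []):
            yield rec_a["id"], rec_b["id"]


db_a = [
    {"id": "A1", "last_name": "Johnson", "zip": "10001"},
    {"id": "A2", "last_name": "Smith", "zip": "94105"},
]
db_b = [
    {"id": "B1", "last_name": "Johnsen", "zip": "10001"},
    {"id": "B2", "last_name": "Smyth", "zip": "94105"},
    {"id": "B3", "last_name": "Lee", "zip": "60601"},
]
pairs = list(blocked_pairs(db_a, db_b))
print(pairs)  # [('A1', 'B1')]
```

Only one of the six possible pairs is compared. The example also shows the coverage trade-off: "Smith" and "Smyth" land in different blocks ("smi" versus "smy"), so that plausible match is never evaluated unless a second blocking key is added.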

Step 4: Pairwise Comparison

Within each block, record pairs are compared field by field using similarity functions appropriate to the data type. Name fields use Jaro-Winkler similarity (well suited to short strings with transposition errors), Levenshtein distance, or phonetic algorithms like Double Metaphone. Dates and phone numbers use exact or within-tolerance matching. Address fields benefit from token-based comparison (TF-IDF cosine similarity) that handles reordering and abbreviation. Each field comparison produces a similarity score between 0 (no similarity) and 1 (identical).
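The following sketch shows field-level comparison producing scores between 0 and 1. To stay self-contained it uses a normalized Levenshtein similarity for names and a plain token Jaccard overlap for addresses; the Jaccard measure is a simpler stand-in for TF-IDF cosine similarity, and the record fields are hypothetical.

```python
def levenshtein(a: str, b: str) -> int:
    """Edit distance: minimum insertions, deletions, and substitutions."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution
        prev = curr
    return prev[-1]


def edit_similarity(a: str, b: str) -> float:
    """Scale edit distance into a 0..1 similarity."""
    if not a and not b:
        return 1.0
    return 1.0 - levenshtein(a, b) / max(len(a), len(b))


def token_jaccard(a: str, b: str) -> float:
    """Order-insensitive token overlap, useful for reordered address parts."""
    ta, tb = set(a.lower().split()), set(b.lower().split())
    if not ta and not tb:
        return 1.0
    return len(ta & tb) / len(ta | tb)


def compare(rec_a: dict, rec_b: dict) -> dict:
    """One similarity score per field."""
    return {
        "last_name": edit_similarity(rec_a["last_name"], rec_b["last_name"]),
        "dob": 1.0 if rec_a["dob"] == rec_b["dob"] else 0.0,
        "address": token_jaccard(rec_a["address"], rec_b["address"]),
    }


scores = compare(
    {"last_name": "johnson", "dob": "1985-03-07", "address": "12 main street"},
    {"last_name": "johnsen", "dob": "1985-03-07", "address": "main street 12"},
)
print(scores)
```

The one-character difference in the last name lowers that score slightly, while the reordered address still scores as identical.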

Step 5: Classification

Field-level similarity scores are combined into a composite match score using one of three approaches. Deterministic classification applies hard rules (if SSN matches exactly AND last name similarity exceeds 0.85, classify as match). Probabilistic classification, based on the Fellegi-Sunter model, calculates match weights for each field based on the field’s discriminating power and combines them into a log-likelihood ratio. ML-based classification trains a binary classifier on labeled match/non-match pairs. Record pairs are classified into three categories: match (auto-link), non-match (auto-reject), and possible match (route to manual review).
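As a rough sketch, the deterministic rule quoted above and the three-way routing can be written as follows. The threshold values of 0.40 and 0.90 are hypothetical placeholders; in practice they are tuned against reviewed samples.

```python
def deterministic_match(scores: dict) -> bool:
    """Hard rule: exact SSN agreement AND last-name similarity above 0.85."""
    return scores["ssn"] == 1.0 and scores["last_name"] > 0.85


def classify(composite_score: float, lower: float = 0.40, upper: float = 0.90) -> str:
    """Route a pair by composite score using two (assumed) thresholds."""
    if composite_score >= upper:
        return "match"           # auto-link
    if composite_score <= lower:
        return "non-match"       # auto-reject
    return "possible match"      # route to manual review


print(deterministic_match({"ssn": 1.0, "last_name": 0.91}))  # True
print(classify(0.95), classify(0.65), classify(0.10))
# match possible match non-match
```

Widening the gap between the two thresholds sends more pairs to manual review, which trades reviewer effort for fewer automated errors.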

What Is the Fellegi-Sunter Model and Why Does It Matter?

The Fellegi-Sunter model, published in the Journal of the American Statistical Association in 1969, provides the mathematical foundation for probabilistic record linkage. It formalizes the intuition that some fields are more informative than others when deciding whether two records represent the same entity.

The model calculates two probabilities for each field comparison. The m-probability is the likelihood that the field values agree given that the records are a true match. The u-probability is the likelihood that the field values agree by chance among non-matching records. The ratio of these probabilities (m/u) determines the match weight for that field. A rare last name that agrees across two records (low u-probability) contributes a much higher weight than a common first name like “Michael” (high u-probability).

These per-field weights are summed into a composite score. Records above an upper threshold are classified as matches; records below a lower threshold are classified as non-matches; records between the thresholds are designated as possible matches requiring manual review. The elegance of the model is that it provides an optimal decision framework given the quality of the available comparison data, and it can be estimated in an unsupervised manner using Expectation-Maximization when labeled training data is unavailable.
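The weight calculation can be illustrated in a few lines. The sketch uses the conventional form of the weights: log2(m/u) when a field agrees and log2((1-m)/(1-u)) when it disagrees, so that per-field weights can be summed. The m and u values below are made-up illustrations, not estimates from real data.

```python
import math

# Hypothetical (m, u) pairs: m = P(agree | match), u = P(agree | non-match).
FIELD_PARAMS = {
    "last_name": (0.95, 0.01),   # rare agreement by chance, so highly informative
    "first_name": (0.90, 0.05),  # common first names agree by chance more often
    "zip": (0.85, 0.02),
}


def field_weight(m: float, u: float, agrees: bool) -> float:
    """Agreement adds log2(m/u); disagreement adds log2((1-m)/(1-u))."""
    if agrees:
        return math.log2(m / u)
    return math.log2((1 - m) / (1 - u))


def composite_weight(agreement: dict) -> float:
    """Sum the per-field weights for one record pair."""
    return sum(
        field_weight(*FIELD_PARAMS[field], agrees)
        for field, agrees in agreement.items()
    )


all_agree = composite_weight({"last_name": True, "first_name": True, "zip": True})
mixed = composite_weight({"last_name": True, "first_name": False, "zip": True})
print(f"all fields agree: {all_agree:.2f}, first name disagrees: {mixed:.2f}")
```

With these numbers, last-name agreement contributes about 6.6 to the score while first-name agreement contributes about 4.2, which reflects the difference in u-probabilities. The composite score is then compared against the upper and lower thresholds.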

How Does Transitive Closure Affect Data Linkage Quality?

Pairwise record linkage evaluates each record pair independently. This creates a problem that data linkage alone cannot solve: transitivity. If the linkage process determines that Record A (from Database 1) matches Record B (from Database 2), and Record B matches Record C (from Database 3), the logical conclusion is that A, B, and C all represent the same entity. Pairwise linkage does not make this inference automatically because A and C were never directly compared (they may reside in different blocks or different comparison sets).

Entity resolution applies transitive closure (or graph-based clustering algorithms like connected components, correlation clustering, or Markov clustering) to the linkage results to form complete entity clusters. This is where data linkage becomes entity resolution: the transition from pairs to unified entities.
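Transitive closure over linked pairs is equivalent to finding connected components in the match graph. A minimal sketch using a union-find structure follows; the record ids are hypothetical, and the other clustering algorithms named above would replace this step rather than extend it.

```python
def cluster(links: list[tuple[str, str]]) -> list[set[str]]:
    """Group record ids into entity clusters via connected components."""
    parent: dict[str, str] = {}

    def find(x: str) -> str:
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps trees shallow
            x = parent[x]
        return x

    for a, b in links:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a  # merge the two components

    clusters: dict[str, set[str]] = {}
    for rec in list(parent):
        clusters.setdefault(find(rec), set()).add(rec)
    return list(clusters.values())


# A-B and B-C were linked pairwise; A and C were never compared directly.
entities = cluster([("A", "B"), ("B", "C"), ("D", "E")])
print(sorted(sorted(c) for c in entities))  # [['A', 'B', 'C'], ['D', 'E']]
```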

Transitive closure introduces a risk: error propagation. If A-B is a false positive (an incorrect match), and B-C is a true match, transitive closure incorrectly links A to C’s entity cluster, contaminating the golden record. Enterprise entity resolution platforms mitigate this risk by applying cluster-level validation rules: maximum cluster size limits, minimum intra-cluster similarity thresholds, and automated flagging of clusters where any constituent link falls below a confidence threshold.
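Two of these validation rules, a maximum cluster size and a minimum confidence for every constituent link, can be sketched as a simple review check. The limits of 25 records and 0.80 are hypothetical defaults chosen for illustration.

```python
def review_reasons(
    members: set[str],
    link_scores: dict[tuple[str, str], float],
    max_size: int = 25,
    min_link_score: float = 0.80,
) -> list[str]:
    """Return the reasons a cluster should go to manual review (empty if none)."""
    reasons = []
    if len(members) > max_size:
        reasons.append(f"cluster has {len(members)} records (limit {max_size})")
    for (a, b), score in link_scores.items():
        if score < min_link_score:
            reasons.append(f"weak link {a}-{b} scored {score:.2f}")
    return reasons


# A-B is a low-confidence link that pulled A into the B-C cluster.
flags = review_reasons({"A", "B", "C"}, {("A", "B"): 0.62, ("B", "C"): 0.97})
print(flags)  # ['weak link A-B scored 0.62']
```

Flagging the weak link lets a reviewer split the cluster before the golden record is contaminated, instead of discovering the error downstream.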

What Is Privacy-Preserving Record Linkage?

Not all data linkage happens within a single organization’s data perimeter. Cross-organization linkage is common in public health (linking hospital records across health systems), government (matching benefit recipients across agencies), and financial services (counterparty verification across institutions). In these scenarios, organizations need to determine whether they share records about the same entity without exposing the underlying personal data to each other.

Privacy-preserving record linkage (PPRL) addresses this requirement using several techniques. Bloom filter encoding converts field values into binary vectors that preserve approximate similarity for comparison while being hard to reverse to the original values. Secure multi-party computation (SMPC) allows two parties to compute match scores on encrypted data without either party seeing the other’s records in plaintext. Trusted third-party models route encrypted records through a neutral intermediary that performs the linkage and returns only the linked identifiers, not the underlying data.
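To illustrate the Bloom filter approach, the sketch below hashes the character bigrams of a value into bit positions using a keyed hash, then compares two encodings with the Dice coefficient. The filter size, number of hash functions, and shared secret are assumptions chosen for readability; this is not a hardened encoding and should not be used as-is for real PII.

```python
import hashlib
import hmac


def bigrams(value: str) -> set[str]:
    """Character bigrams of the padded, lowercased value."""
    padded = f"_{value.lower()}_"
    return {padded[i:i + 2] for i in range(len(padded) - 1)}


def bloom_encode(value: str, secret: bytes, size: int = 256, num_hashes: int = 4) -> set[int]:
    """Return the set of bit positions switched on in the Bloom filter."""
    bits = set()
    for gram in bigrams(value):
        for k in range(num_hashes):
            digest = hmac.new(secret, f"{k}:{gram}".encode(), hashlib.sha256).digest()
            bits.add(int.from_bytes(digest[:8], "big") % size)
    return bits


def dice(a: set[int], b: set[int]) -> float:
    """Dice coefficient on the set bits: 2|A ∩ B| / (|A| + |B|)."""
    if not a and not b:
        return 1.0
    return 2 * len(a & b) / (len(a) + len(b))


# Both parties must agree on the secret and parameters out of band.
secret = b"shared-secret-for-illustration"
smith = bloom_encode("Smith", secret)
smyth = bloom_encode("Smyth", secret)
jones = bloom_encode("Jones", secret)
print(f"smith~smyth: {dice(smith, smyth):.2f}, smith~jones: {dice(smith, jones):.2f}")
```

Because "Smith" and "Smyth" share most of their bigrams, their encodings overlap heavily, while "Smith" and "Jones" overlap only through chance hash collisions. Each party exchanges only the bit positions, never the names themselves.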

PPRL is not theoretical. The Australian Institute of Health and Welfare uses Bloom filter-based linkage to connect patient records across state health systems without centralizing PII. The U.S. Census Bureau’s Center for Economic Studies links records across administrative data sets using similar privacy-preserving methods. For enterprises bound by GDPR, HIPAA, or similar frameworks, PPRL enables data linkage that would otherwise be prohibited by data sharing restrictions.

What Does Entity Resolution Data Linkage Look Like at Enterprise Scale?

Consider a global manufacturer operating 22 plants across 8 countries. The company’s procurement function uses three separate ERP instances (one per geographic region), each with its own vendor master. A spot check reveals that the same raw material supplier appears as “BASF SE” in the European ERP, “BASF Corporation” in the North American system, and “巴斯夫” (the Mandarin name) in the Asia-Pacific instance. The supplier’s address, bank details, and contact information differ across all three systems due to regional formatting standards and local subsidiary structures.

Without cross-database linkage, this manufacturer cannot consolidate spending analysis, negotiate volume discounts, or enforce consistent payment terms across regions. The procurement team estimates 15% to 20% vendor duplication across the three ERP instances, representing $4 million to $6 million annually in missed volume discount opportunities and duplicate payment processing overhead.

An entity resolution platform ingests vendor records from all three ERPs, standardizes company names (expanding abbreviations, transliterating non-Latin characters), applies multi-field probabilistic matching across name, address, tax ID, and bank account attributes, and produces entity clusters. The cluster for BASF links all three regional records with a composite confidence of 97.2%, producing a single golden vendor record with survivorship rules that preserve the regional address for each subsidiary while unifying the parent entity. The procurement team now has a single view of each vendor across all 22 plants.

How Do You Maintain Data Linkages as Source Data Changes?

Most data linkage implementations focus on the initial matching project. The harder problem is keeping linkages accurate as source systems continue to generate new records, update existing ones, and occasionally delete records that should propagate as unlinks.

Three maintenance strategies address this challenge. Batch re-linkage runs the full linkage process on a scheduled basis (nightly, weekly, or monthly). This approach is simple to implement but creates a window during which new records exist in source systems without being linked, which is unacceptable for real-time use cases like fraud detection or clinical decision support.

Incremental linkage evaluates each new or updated record against the existing entity index as it arrives. The new record is compared against the most likely blocks, classified, and either linked to an existing entity cluster or used to create a new entity. This event-driven approach eliminates the delay window but requires a persistent entity index and real-time comparison infrastructure.
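A minimal in-memory sketch of incremental linkage is shown below. The `EntityIndex` class, its three-character blocking key, and the 0.85 threshold are hypothetical; it uses `difflib.SequenceMatcher` from the Python standard library as a stand-in similarity function, and a production index would be persistent rather than held in memory.

```python
from collections import defaultdict
from difflib import SequenceMatcher


class EntityIndex:
    """Toy entity index: links each arriving record or creates a new entity."""

    def __init__(self, threshold: float = 0.85):
        self.threshold = threshold
        self.blocks = defaultdict(list)  # blocking key -> [(entity_id, name)]
        self.next_id = 1

    @staticmethod
    def _key(name: str) -> str:
        return name[:3].lower()

    def upsert(self, name: str) -> int:
        """Compare against the record's block only, then link or create."""
        key = self._key(name)
        best_id, best_score = None, 0.0
        for entity_id, known in self.blocks[key]:
            score = SequenceMatcher(None, name.lower(), known.lower()).ratio()
            if score > best_score:
                best_id, best_score = entity_id, score
        if best_id is None or best_score < self.threshold:
            best_id = self.next_id  # no confident match: start a new entity
            self.next_id += 1
        self.blocks[key].append((best_id, name))
        return best_id


index = EntityIndex()
first = index.upsert("Acme Corp")
second = index.upsert("Acme Corp.")   # near-duplicate arrives later
third = index.upsert("Zenith Ltd")
print(first, second, third)  # 1 1 2
```

Each arriving record touches only one block, which is what keeps per-event latency low. It is also why records that land in the wrong block are missed until a wider re-linkage pass runs.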

Hybrid approaches combine both methods: incremental linkage handles day-to-day updates, while periodic batch re-linkage catches edge cases (records that should have been linked but were missed by incremental blocking, or entity clusters that should be split based on new contradictory evidence). MatchLogic supports both batch and incremental matching modes, allowing enterprises to start with batch linkage and transition to event-driven resolution as their data infrastructure matures.

Frequently Asked Questions

What is the difference between record linkage and entity resolution?

Record linkage compares records from one or more datasets to determine which pairs refer to the same entity, producing linked pairs with confidence scores. Entity resolution is the broader end-to-end process that includes record linkage plus transitive closure (forming entity clusters), survivorship rules (resolving conflicting attribute values), and golden record creation. Record linkage is one step within the entity resolution pipeline.

What is the Fellegi-Sunter model?

The Fellegi-Sunter model is a probabilistic framework for record linkage published in 1969. It calculates match weights for each comparison field based on the ratio of the m-probability (agreement given a true match) to the u-probability (agreement by chance among non-matches). Records are classified as matches, non-matches, or possible matches based on composite weight thresholds. The model can be estimated without labeled training data using the Expectation-Maximization algorithm.

How does blocking work in data linkage?

Blocking partitions records into groups that share a common attribute value (the blocking key). Only records within the same block are compared, reducing the computational cost from quadratic (n²) to near-linear. Common blocking keys include the first few characters of a last name combined with a ZIP code or year of birth. Advanced techniques like sorted neighborhood and locality-sensitive hashing generate overlapping candidate groups to improve recall.

Can data linkage be performed across organizations without sharing raw data?

Yes. Privacy-preserving record linkage (PPRL) uses techniques like Bloom filter encoding, secure multi-party computation, and trusted third-party intermediaries to compare records in encrypted or tokenized form. These methods allow organizations to identify shared entities without exposing personal data to each other. PPRL is used in practice by public health agencies, census bureaus, and financial institutions.

How many records can modern data linkage handle?

Enterprise data linkage platforms routinely process datasets of 10 million to 100 million records. The key constraint is not record count but the number of candidate pairs generated by the blocking strategy. Effective blocking reduces 25 trillion potential comparisons (for two 5 million record datasets) to a manageable subset. Scalability depends on the platform’s blocking algorithms, parallelization support, and indexing infrastructure.

What happens when data linkage produces incorrect matches?

False positives (incorrect matches) are managed through configurable confidence thresholds, manual review queues for borderline cases, and cluster-level validation rules that flag suspicious entity groups (unusually large clusters, clusters with low average internal similarity). Enterprise platforms maintain full audit trails so that false links can be identified, reviewed, and corrected without rebuilding the entire linkage from scratch.
