How Much Do Duplicate Records Actually Cost?

Key Takeaways

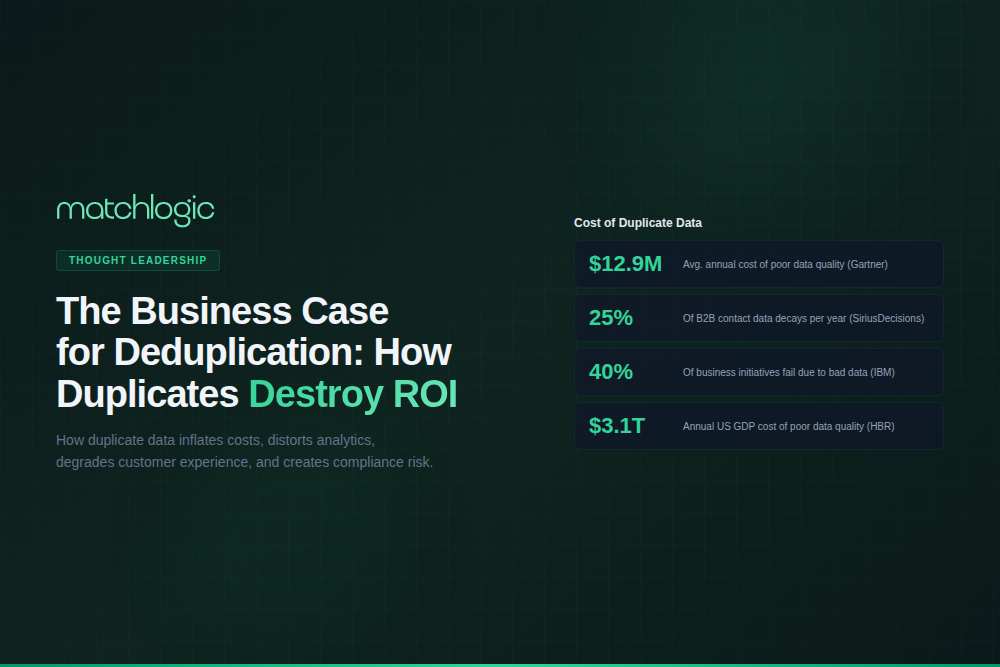

- ✓ According to IBM, poor data quality costs U.S. businesses $3.1 trillion annually. Duplicate records are among the most common and measurable contributors.

- ✓ The 1-10-100 rule quantifies the cost escalation: $1 to prevent a duplicate, $10 to fix it later, $100 to absorb the business consequences of leaving it unfixed.

- ✓ Duplicate records impose measurable costs on six functions: marketing, sales, finance, compliance, customer experience, and AI/analytics.

- ✓ Gartner predicts that 60% of AI projects will be abandoned by 2026 due to data quality gaps, with duplicates undermining training data and model accuracy.

- ✓ A structured ROI calculation framework, based on record volume, duplicate rate, and cost per department, builds the CFO-ready case for deduplication investment.

Duplicate records are among the most common, most measurable, and most expensive forms of poor data quality in enterprise environments. According to IBM, poor data quality costs U.S. businesses an estimated $3.1 trillion annually, and a 2025 IBM Institute for Business Value study found that over 25% of organizations lose more than $5 million per year to data quality problems. Duplicate records sit at the center of this cost because they are both a direct expense (wasted marketing spend, redundant processing) and a multiplier of downstream errors (inaccurate analytics, failed AI models, compliance violations).

Common deduplication workflows that directly reduce duplicate-record cost include merge purge, real-time deduplication at entry, and periodic batch reconciliation.

Yet most enterprises tolerate duplicates because the costs are distributed across departments and invisible in any single line item. No budget line reads "duplicate record overhead." The cost hides inside inflated CRM license fees, wasted campaign spend, misallocated sales territories, denied insurance claims, and AI models that produce wrong answers with high confidence. For a technical overview of how deduplication works, see our data deduplication guide.

What Is the 1-10-100 Rule for Data Quality?

The 1-10-100 rule, originally articulated by quality management researchers and widely cited in data governance literature, quantifies the cost escalation of data quality problems over time. It costs approximately $1 per record to prevent a data quality issue at the point of entry. It costs $10 per record to find and fix the issue after it has entered the system. It costs $100 per record (or more) to absorb the business consequences of the unfixed issue: the lost sale, the compliance fine, the misrouted patient, the failed AI prediction.

Applied to duplicate records at enterprise scale, the math becomes significant. An organization with 5 million records and a 10% duplicate rate (a conservative estimate per Edgewater Consulting) carries 500,000 duplicates. At $1 per record for prevention, the proactive cost is $500,000. At $10 per record for remediation, the reactive cost is $5 million. At $100 per record for absorbed business impact, the cost of inaction is $50 million. The actual cost per record varies by industry and use case, but the ratio holds: prevention is 10x cheaper than correction, and correction is 10x cheaper than consequence.
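The arithmetic above can be reproduced with a short sketch. The function name `one_ten_hundred` is illustrative, and the $1/$10/$100 unit costs are the rule's nominal values, not measured figures for any particular organization.

```python
def one_ten_hundred(total_records: int, duplicate_rate: float) -> dict[str, int]:
    """Apply the nominal 1-10-100 unit costs to an estimated duplicate count."""
    duplicates = round(total_records * duplicate_rate)
    return {
        "duplicates": duplicates,
        "prevention": duplicates * 1,     # $1 per record at the point of entry
        "remediation": duplicates * 10,   # $10 per record to find and fix later
        "inaction": duplicates * 100,     # $100 per record in business consequences
    }


# The example from the text: 5 million records at a 10% duplicate rate.
costs = one_ten_hundred(5_000_000, 0.10)
for label, value in costs.items():
    print(f"{label}: {value:,}")
```

Swapping in your own record count and measured duplicate rate gives a first-order estimate; the per-record costs should then be replaced with figures specific to your environment.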

Where Do Duplicate Records Cost Money? A Department-by-Department Breakdown

Duplicate records do not create a single, obvious expense. They create a tax on every function that touches data. The following table maps the specific cost mechanisms across six enterprise functions.

| Department | How Duplicates Destroy ROI | Quantifiable Impact |
|---|---|---|
| Marketing | Inflated lead counts distort campaign performance. Same person receives the same email 2 to 3 times. Segmentation breaks because one customer appears in multiple cohorts. Marketing automation platforms (Marketo, HubSpot) charge by database size, so duplicates directly inflate license costs. | 10% duplicate rate on 500K contacts = 50K wasted records. At $0.01/contact/month license cost = $6,000/year in excess fees alone, before accounting for wasted campaign spend. |
| Sales | Same prospect assigned to multiple reps, causing territory conflicts. Pipeline reports overstate opportunity count. Sales reps waste time pursuing leads that another rep already contacted, or that are already existing customers. | According to Dun & Bradstreet, 50% of sales time is wasted on unproductive prospecting. Duplicates compound this by sending reps to leads that have already been contacted or converted. |
| Finance | Duplicate vendor records generate duplicate payments. Duplicate customer records produce inaccurate revenue reporting and flawed forecasts. CFOs making decisions on inflated customer counts allocate resources to segments that do not exist at the reported size. | A mid-market company with 50K vendor records and a 5% duplicate rate risks duplicate payments on 2,500 vendor entries. Even a 1% duplicate payment rate on $100M annual procurement = $1M in overpayments. |
| Compliance | GDPR data subject access requests require identifying all records for an individual across all systems. If the same person exists under 3 slightly different names, and the organization only finds 2, the response is incomplete and non-compliant. HIPAA patient matching failures cause clinical errors. | According to Black Book Research, 33% of denied healthcare claims are linked to patient identification errors. The average hospital spends $1.5M annually resolving duplicate patient records. |
| Customer Experience | Customer calls in and the service agent sees 3 records with different order histories. The customer is asked to verify information that the company should already have. Loyalty programs assign different reward balances to different records for the same person. | According to a PwC survey, 32% of customers will stop doing business with a brand they love after just one bad experience. Duplicate-driven service failures are entirely preventable. |
| AI / Analytics | Duplicate records in training data bias ML models. Customer segmentation algorithms double-count individuals. Churn models predict behavior for entities that do not actually exist as distinct customers. Revenue forecasts built on duplicated records overstate actual performance. | Gartner predicts that 60% of AI projects will be abandoned by 2026 due to data that is not AI-ready (as cited by IBM). Duplicates are one of the most common forms of data quality failure in AI pipelines. |

Case Scenario: Calculating the Cost of Inaction at a B2B SaaS Company

A B2B SaaS company with $80 million in annual recurring revenue maintains a CRM database of 420,000 accounts. A data quality audit reveals a 15% duplicate rate: approximately 63,000 accounts are duplicates. The duplicates create the following measurable costs:

Marketing: The company's marketing automation platform charges $0.015 per contact per month. 63,000 duplicate contacts cost $11,340 per year in excess license fees. Beyond licensing, the marketing team sends 24 email campaigns per year. With a 15% duplicate overlap, each campaign reaches approximately 9,450 duplicate recipients. At an industry-average cost-per-email of $0.03 (including content production allocation), duplicate sends cost $6,804 per year.

Sales: The company employs 45 account executives. Duplicate accounts cause an estimated 2 territory conflicts per rep per quarter, each requiring 30 minutes of investigation and manager involvement. That is 360 conflict incidents per year at an average fully loaded cost of $75/hour per rep, totaling $13,500 in lost productivity.

Finance: Duplicate customer records cause the finance team to overstate the unique customer count by 63,000 (15% of the reported total, or roughly 17.6% above the true count), which understates the company's reported customer lifetime value (CLTV) and distorts cohort retention analysis. The CEO presents a board deck showing 420,000 customers with a CLTV of $190. The actual figure: 357,000 customers with a CLTV of $224. Decisions about expansion, hiring, and product investment are based on the wrong numbers.

Total quantifiable annual cost: approximately $31,644 in direct waste, plus an unquantifiable (but potentially much larger) cost in misallocated strategic resources. On direct savings alone, a deduplication project costing $50,000 to $75,000 would take roughly 1.6 to 2.4 years to pay for itself; a faster payback depends on the gains from improved forecasting accuracy and customer experience.
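The scenario's line items can be checked with a few lines of arithmetic. This is a sketch that takes every input directly from the scenario above, including the 9,450 duplicate recipients per campaign, which is used as stated and not derived; the variable names are illustrative.

```python
# Inputs taken from the scenario above.
accounts = 420_000
duplicate_rate = 0.15
duplicates = round(accounts * duplicate_rate)  # duplicate accounts in the CRM

# Marketing: excess license fees plus duplicate email sends.
license_waste = duplicates * 0.015 * 12        # $0.015 per contact per month, annualized
duplicate_recipients = 9_450                   # per campaign, as stated in the scenario
send_waste = duplicate_recipients * 24 * 0.03  # 24 campaigns at $0.03 per email

# Sales: territory conflicts.
incidents = 45 * 2 * 4                         # reps x conflicts per quarter x quarters
sales_waste = incidents * 0.5 * 75             # 30 minutes each at $75/hour

direct_waste = license_waste + send_waste + sales_waste

# Finance: restate CLTV over the deduplicated customer count.
unique_customers = accounts - duplicates
total_value = accounts * 190                   # reported customers x reported CLTV
restated_cltv = total_value / unique_customers

print(f"Direct annual waste: ${direct_waste:,.0f}")
print(f"Unique customers: {unique_customers:,}, restated CLTV: ${restated_cltv:,.0f}")
```

Keeping each department's figure in its own variable makes it easy to substitute audited numbers for any single line item without touching the rest.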

How Do You Build the Business Case for Deduplication?

The following framework translates duplicate record costs into a format that finance teams and executive sponsors can evaluate. Use it to calculate the ROI of a deduplication investment at your organization.

Step 1: Measure Your Duplicate Rate

Run a data profile across your primary systems (CRM, ERP, marketing automation, data warehouse). Count unique entities using fuzzy matching, not just exact-match comparison. Most organizations are surprised: the duplicate rate is almost always higher than expected. A conservative enterprise benchmark is 10% (Edgewater Consulting), but rates of 15% to 30% are common in CRMs that ingest data from multiple channels. For tool options to run this assessment, see our guide to dedupe software.
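To illustrate why fuzzy matching finds duplicates that exact-match comparison misses, here is a minimal sketch using Python's standard-library `difflib`. The sample names, the `normalize` helper, and the 0.85 similarity threshold are all assumptions chosen for illustration; the greedy pairwise comparison is quadratic and is not meant for production-scale datasets, where dedicated tooling with blocking and multi-field matching is the usual approach.

```python
from difflib import SequenceMatcher


def normalize(name: str) -> str:
    """Lowercase, drop periods and commas, and collapse whitespace."""
    cleaned = name.lower().replace(".", "").replace(",", "")
    return " ".join(cleaned.split())


def is_match(a: str, b: str, threshold: float = 0.85) -> bool:
    """Treat two names as the same entity if their similarity ratio clears the threshold."""
    return SequenceMatcher(None, normalize(a), normalize(b)).ratio() >= threshold


def fuzzy_duplicate_rate(names: list[str], threshold: float = 0.85) -> float:
    """Greedy clustering: a record is a duplicate if it matches an earlier representative."""
    representatives: list[str] = []
    for name in names:
        if not any(is_match(name, rep, threshold) for rep in representatives):
            representatives.append(name)
    return (len(names) - len(representatives)) / len(names)


# Hypothetical sample records, not real data.
names = [
    "Acme Corp.", "ACME Corp",
    "Jon Smith", "John Smith",
    "Globex Inc", "Globex, Inc.",
    "Initech LLC",
]

exact_rate = 1 - len(set(names)) / len(names)
fuzzy_rate = fuzzy_duplicate_rate(names)
print(f"Exact-match duplicate rate: {exact_rate:.1%}")
print(f"Fuzzy-match duplicate rate: {fuzzy_rate:.1%}")
```

In this sample every raw string is distinct, so exact matching reports no duplicates, while the fuzzy pass groups the spelling and punctuation variants. The threshold needs tuning against a hand-reviewed sample: too low merges distinct entities, too high misses real duplicates.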

Step 2: Calculate Direct Costs

Multiply your duplicate count by the per-record costs specific to your environment. Common direct cost categories: excess SaaS license fees (per-contact pricing on CRM and marketing automation platforms), wasted campaign spend (cost per email/mail piece multiplied by duplicate volume), duplicate vendor payments (procurement audits can identify these), and labor costs for manual duplicate resolution (hours per incident multiplied by fully loaded hourly rate).

Step 3: Estimate Downstream Impact

This is where the business case becomes compelling. Downstream costs include: inaccurate pipeline forecasting (overstatement leads to under-delivery against board targets), compliance penalties (GDPR fines can reach €20 million or 4% of worldwide annual turnover, whichever is higher), clinical errors from duplicate patient records (average $1,950 per duplicate inpatient stay per Black Book Research), and AI model degradation (duplicates in training data produce biased or inaccurate outputs that affect every downstream decision).

Step 4: Compare Against Deduplication Investment

Enterprise deduplication software typically costs $50,000 to $250,000 annually depending on record volume, deployment model, and feature requirements. Implementation requires 4 to 16 weeks of project time. The break-even calculation: if the annual cost of duplicates exceeds the annual cost of the deduplication platform plus implementation and maintenance, the investment has a positive ROI. In practice, most enterprise deduplication projects achieve ROI within 6 to 12 months on direct cost savings alone.
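The break-even comparison can be sketched as a simple first-year ROI and payback calculation. The function names and the example inputs below are hypothetical: the $300,000 annual duplicate cost and $100,000 platform fee fall inside ranges quoted in this article, while the implementation and maintenance figures are assumptions added for illustration. The model also assumes the full annual duplicate cost is recovered, which a real project may not achieve.

```python
def first_year_roi(annual_duplicate_cost: float, platform: float,
                   implementation: float = 0.0, maintenance: float = 0.0) -> float:
    """Return first-year ROI as a fraction: (savings - investment) / investment."""
    investment = platform + implementation + maintenance
    return (annual_duplicate_cost - investment) / investment


def payback_months(annual_duplicate_cost: float, platform: float,
                   implementation: float = 0.0, maintenance: float = 0.0) -> float:
    """Simple payback: months of avoided duplicate cost needed to cover the investment."""
    investment = platform + implementation + maintenance
    return 12 * investment / annual_duplicate_cost


# Hypothetical inputs for illustration only.
roi = first_year_roi(300_000, platform=100_000, implementation=40_000, maintenance=10_000)
months = payback_months(300_000, platform=100_000, implementation=40_000, maintenance=10_000)
print(f"First-year ROI: {roi:.0%}, payback: {months:.1f} months")
```

A positive `first_year_roi` corresponds to the break-even condition described above: the annual cost of duplicates exceeds the platform, implementation, and maintenance costs combined.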

Why Do Organizations Delay Deduplication Despite the Clear ROI?

Three organizational dynamics explain why deduplication investment lags behind the obvious financial case.

Distributed cost ownership. No single department owns the full cost of duplicates. Marketing absorbs the license inflation. Sales absorbs the territory conflicts. Finance absorbs the forecast distortion. Compliance absorbs the regulatory risk. Because the cost is distributed, no individual budget holder feels the full impact, and no individual executive has the incentive to fund the fix. The business case for deduplication must aggregate costs across departments to demonstrate the total organizational burden.

The "good enough" illusion. When CRM reports show 420,000 customers, and the sales team is hitting quota, and marketing is generating leads, the data appears to be working. The 15% error margin is invisible until someone runs a cohort analysis that does not reconcile, or a compliance audit reveals records that should have been consolidated. Deduplication investments are often triggered by a crisis (failed audit, botched migration, AI project failure) rather than by proactive planning.

Underestimating the compounding effect. Duplicate records accumulate over time. A 10% rate today can grow to, for example, 18% in two years if new duplicates are created faster than old ones are resolved. The cost compounds because every downstream system, report, and model built on the duplicated data inherits the error. Organizations that delay deduplication do not face a static cost; they face an accelerating one. For a broader perspective on how data accuracy affects business intelligence, see our article on data accuracy.
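As a rough illustration of the compounding point, the sketch below works out the annual growth implied by a duplicate rate moving from 10% to 18% in two years and projects it forward. It assumes the rate grows by a constant percentage each year, which is a simplification introduced here and not a claim from any cited source; real accumulation depends on ingestion volume and cleanup effort.

```python
def implied_annual_growth(start_rate: float, end_rate: float, years: int) -> float:
    """Constant yearly growth in the duplicate rate that links the two observations."""
    return (end_rate / start_rate) ** (1 / years) - 1


def project_rate(start_rate: float, annual_growth: float, years: int) -> float:
    """Duplicate rate after the given number of years, capped at 100%."""
    return min(1.0, start_rate * (1 + annual_growth) ** years)


growth = implied_annual_growth(0.10, 0.18, years=2)
print(f"Implied annual growth in duplicate rate: {growth:.1%}")

# Projection under the constant-growth assumption (illustrative only).
for year in range(5):
    print(f"Year {year}: {project_rate(0.10, growth, year):.1%}")
```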

Frequently Asked Questions

What is the ROI of data deduplication?

ROI varies by organization size, duplicate rate, and industry. A typical enterprise with 1 million records and a 12% duplicate rate can expect to recover $100,000 to $500,000 annually in direct cost savings (reduced license fees, eliminated duplicate payments, reclaimed sales productivity) against a platform investment of $50,000 to $150,000. Downstream benefits (improved forecasting, compliance risk reduction, AI model accuracy) often exceed the direct savings but are harder to quantify precisely.

How do you measure the cost of duplicate data?

Start with a data profile to establish your duplicate rate. Then calculate per-record costs across four categories: SaaS license inflation (per-contact fees on duplicate records), wasted outreach (cost per email or mail piece multiplied by duplicate volume), labor waste (hours spent resolving conflicts and manual merges), and downstream impact (compliance risk, forecast inaccuracy, AI degradation). Aggregate these across all affected departments for the total annual cost.

Who should own the deduplication budget in an enterprise?

Because duplicate costs are distributed across departments, the deduplication budget is most effectively owned by a cross-functional data governance function, a Chief Data Officer, or IT leadership with a mandate that spans marketing, sales, and operations. If no centralized function exists, the department with the largest measurable cost (often marketing or compliance) typically sponsors the initial investment, with costs shared across benefiting teams.

How quickly can deduplication deliver measurable results?

The first batch deduplication run produces immediate results: reduced record counts, lower duplicate rates, and quantifiable cost savings. Most enterprise implementations show measurable savings within 3 to 6 months. The full benefit, including improved forecasting accuracy, compliance risk reduction, and AI model improvement, typically materializes over 6 to 12 months as clean data flows through downstream systems and processes.
