What Is Dedupe Software?

Key Takeaways

- Enterprise dedupe software uses fuzzy matching, phonetic algorithms, and probabilistic scoring to find non-exact duplicates that exact-match tools miss entirely.

- Survivorship rules determine which field values survive a merge. Without configurable survivorship, you lose data during every deduplication run.

- According to IBM, over 25% of organizations lose more than $5 million annually from poor data quality, with duplicates as a primary contributor.

- On-premise deployment is non-negotiable for regulated industries subject to HIPAA, GDPR, or SOX data residency requirements.

- Evaluate dedupe software across seven criteria: matching algorithms, survivorship logic, scalability, deployment model, data connectivity, auditability, and total cost of ownership.

Dedupe software is a category of data quality tools that identifies, flags, and resolves duplicate records within or across databases, CRMs, data warehouses, and other enterprise data systems. Unlike file-level deduplication used in backup and storage (which eliminates redundant data blocks to save disk space), record-level dedupe software operates on structured data: customer records, vendor entries, patient files, product catalogs, and similar entities where the same real-world object may be represented by multiple, slightly different records.

Before investing in dedupe software, quantify the cost of duplicate records in your environment — that number is what builds the business case.

The distinction matters because the top search results for "dedupe software" frequently conflate these two categories. Storage deduplication tools from vendors like Dell PowerProtect and Veritas NetBackup reduce backup volumes. Record deduplication tools from vendors like MatchLogic, Data Ladder, and WinPure fix the data quality problems that corrupt analytics, inflate costs, and violate compliance mandates. This article covers the latter: enterprise record deduplication software.

According to a 2025 IBM Institute for Business Value report, over 25% of organizations estimate they lose more than $5 million annually due to poor data quality. Duplicate records are one of the most common and measurable forms of that loss. A conservative estimate from Edgewater Consulting puts the average duplicate rate in enterprise databases at approximately 10%, though rates of 20% to 30% are common in CRMs that ingest data from multiple channels.

Why Do Enterprise Organizations Need Dedicated Dedupe Software?

CRMs like Salesforce and HubSpot include basic built-in deduplication. These native tools rely on exact-match rules (identical email address or phone number) and miss the vast majority of real-world duplicates. Salesforce's native dedupe treats "Robert Smith" and "Bob Smith" at the same address as two different people. "Acme Corp" and "ACME Corporation" remain two separate accounts. The gap between native tools and enterprise dedupe software is the gap between exact matching and fuzzy matching.

Enterprise dedupe software applies multiple matching techniques in layers. Phonetic algorithms (Soundex, Metaphone, NYSIIS) catch names that sound alike but are spelled differently. String similarity algorithms (Jaro-Winkler, Levenshtein distance) catch typos, transpositions, and abbreviations. Probabilistic models assign weighted scores to each field comparison and calculate an overall match confidence. This layered approach is how platforms like MatchLogic's data deduplication engine achieve 95%+ accuracy on enterprise datasets where native CRM tools plateau at 60% to 70%.
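To make the layers concrete, here is a minimal sketch of two of the techniques named above: American Soundex for phonetic matching and Levenshtein distance for string similarity. It is written from the textbook definitions using only the Python standard library and is not taken from any vendor's engine; the function names are illustrative.

```python
def soundex(name: str) -> str:
    """Return the American Soundex code (one letter plus three digits)."""
    codes = {}
    for letters, digit in (("BFPV", "1"), ("CGJKQSXZ", "2"), ("DT", "3"),
                           ("L", "4"), ("MN", "5"), ("R", "6")):
        for ch in letters:
            codes[ch] = digit

    letters_only = [c for c in name.upper() if c.isalpha()]
    if not letters_only:
        return ""

    result = letters_only[0]
    prev = codes.get(letters_only[0], "")
    for ch in letters_only[1:]:
        if ch in "HW":
            continue  # H and W do not separate letters that share a code
        code = codes.get(ch, "")
        if code and code != prev:
            result += code
        prev = code  # vowels reset prev, so a repeated code after a vowel counts
    return (result + "000")[:4]


def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character edits that turn a into b."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution
        prev = curr
    return prev[-1]


print(soundex("Smith"), soundex("Smyth"))   # same code: sounds alike
print(levenshtein("Jonathan", "Jonathon"))  # one edit apart: likely typo
```

Neither technique links "Robert" to "Bob" on its own: the two names neither sound alike nor sit within a small edit distance. Nickname pairs like that generally need a lookup table or a trained model layered on top.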

For a detailed breakdown of the technical pipeline behind deduplication, see our data deduplication guide.

What Features Should You Evaluate in Dedupe Software?

Not all dedupe tools serve the same purpose. A Salesforce plugin that merges obvious duplicates within a single CRM is a different product than an enterprise platform that deduplicates 50 million records across six source systems. The following seven features separate enterprise-grade dedupe software from point tools.

1. Matching Algorithm Depth

The matching engine is the core of any dedupe tool. Evaluate whether the software supports deterministic (rule-based), probabilistic (weighted scoring), and fuzzy matching methods. A tool limited to deterministic matching will miss duplicates with misspellings, abbreviations, and formatting differences. Look for configurable match thresholds, field-level weighting, and support for domain-specific algorithms (for example, medical record number matching in healthcare or DUNS number matching for B2B).

For a deeper technical comparison of fuzzy matching algorithms, see our guide to fuzzy matching software.

2. Survivorship and Merge Rules

Identifying duplicates is only half the problem. When three records represent the same customer, the software must decide which field values survive into the merged "golden record." The most complete email address? The most recent phone number? The longest company name? Survivorship rules define these decisions.

Weak tools offer only "most recent wins" or "longest value wins." Enterprise tools provide field-level survivorship logic: use the most recent phone number, the earliest creation date, the most complete address, and the email from the CRM (not the marketing automation platform). Without configurable survivorship, every merge risks data loss.
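Field-level survivorship can be pictured as a mapping from each field to its own rule. The sketch below is illustrative only: the record layout, the rule names, and the use of string length as a stand-in for "most complete address" are all assumptions, not the behavior of any specific product.

```python
from datetime import date

# Hypothetical duplicate records for one customer, from two source systems.
records = [
    {"source": "crm", "updated": date(2024, 3, 1), "created": date(2019, 5, 2),
     "phone": "555-0100", "email": "r.smith@example.com",
     "address": "12 Main St"},
    {"source": "marketing", "updated": date(2025, 1, 15), "created": date(2021, 8, 9),
     "phone": "555-0199", "email": "bob@example.com",
     "address": "12 Main Street, Apt 4, Springfield"},
]


def most_recent(recs, field):
    """Value from the most recently updated record that has the field."""
    candidates = [r for r in recs if r.get(field)]
    return max(candidates, key=lambda r: r["updated"])[field] if candidates else None


def earliest(recs, field):
    """Smallest (earliest) non-empty value across all records."""
    values = [r[field] for r in recs if r.get(field)]
    return min(values) if values else None


def longest(recs, field):
    """Longest value, used here as a crude proxy for 'most complete'."""
    values = [r[field] for r in recs if r.get(field)]
    return max(values, key=len) if values else None


def from_source(source):
    """Prefer a named source system; fall back to the most recent value."""
    def rule(recs, field):
        for r in recs:
            if r["source"] == source and r.get(field):
                return r[field]
        return most_recent(recs, field)
    return rule


RULES = {
    "phone": most_recent,
    "created": earliest,
    "address": longest,
    "email": from_source("crm"),
}


def build_golden_record(recs, rules):
    return {field: rule(recs, field) for field, rule in rules.items()}


golden = build_golden_record(records, RULES)
print(golden)
```

A tool limited to "most recent wins" would be the special case where every field maps to `most_recent`, which in this example would replace the CRM email with the marketing one.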

3. Blocking and Indexing Strategy

Comparing every record to every other record in a database of 10 million rows would require 50 trillion pairwise comparisons. Blocking algorithms partition the dataset into smaller groups (blocks) that share a common attribute, like the first three characters of a last name or a ZIP code. The software then only compares records within the same block.

Effective blocking reduces processing time by 99%+ without sacrificing match quality. Evaluate whether the tool supports multiple blocking keys, adaptive blocking (automatically selecting optimal keys), and cross-block matching for edge cases that fall into different blocks.
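The arithmetic behind these figures is easy to check. The sketch below counts unordered pairs for 10 million records, then repeats the count under an assumed blocking scheme of 10,000 equal-size blocks. The block count is a made-up illustration; real blocks are uneven, so actual savings will differ.

```python
from math import comb

n = 10_000_000
full_pairs = comb(n, 2)  # n * (n - 1) / 2 unordered pairs

blocks = 10_000             # assumption: records spread evenly across blocks
block_size = n // blocks    # 1,000 records per block
blocked_pairs = blocks * comb(block_size, 2)

reduction = 1 - blocked_pairs / full_pairs
print(f"Without blocking: {full_pairs:,} comparisons")
print(f"With blocking:    {blocked_pairs:,} comparisons")
print(f"Reduction:        {reduction:.2%}")
```

Under these assumptions the full comparison count is just under 50 trillion and blocking brings it down to roughly 5 billion.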

4. Scalability and Performance

Enterprise datasets range from hundreds of thousands to hundreds of millions of records. Test the software against your actual data volumes, not vendor-provided benchmarks on synthetic data. Key questions: How long does a full deduplication run take on 10 million records? Does performance degrade linearly or exponentially? Can you run incremental deduplication (only matching new or changed records against the existing master)?

5. Deployment Model: On-Premise vs. Cloud

For organizations in regulated industries (healthcare, financial services, government), the deployment model is often the first filter. Data residency requirements under HIPAA, GDPR, and SOX may prohibit sending production data to a cloud-hosted deduplication service. On-premise deployment keeps all data within your network perimeter and under your security controls.

MatchLogic's on-premise architecture was designed for exactly this requirement: regulated enterprises that need full control over data processing, auditability, and infrastructure. Cloud-only tools require data exports, API transfers, or direct database connections to external servers, each of which introduces compliance risk.

6. Data Connectivity and Source Support

Enterprise deduplication rarely involves a single database. You may need to deduplicate across Salesforce, SAP, Oracle, SQL Server, flat files, and cloud data warehouses simultaneously. Evaluate the number and depth of native connectors. A tool with pre-built Salesforce and HubSpot connectors but no support for ODBC, JDBC, or flat file imports will not serve a multi-system enterprise environment.

7. Auditability and Lineage Tracking

In regulated industries, you must be able to prove why two records were merged and which field values came from which source. Audit trails should capture the match score, the algorithms used, the survivorship rules applied, and a timestamp for every merge operation. If the tool cannot produce this audit trail, it is not suitable for environments governed by SOX Section 404, HIPAA, or GDPR Article 17.

How Do Leading Dedupe Software Platforms Compare?

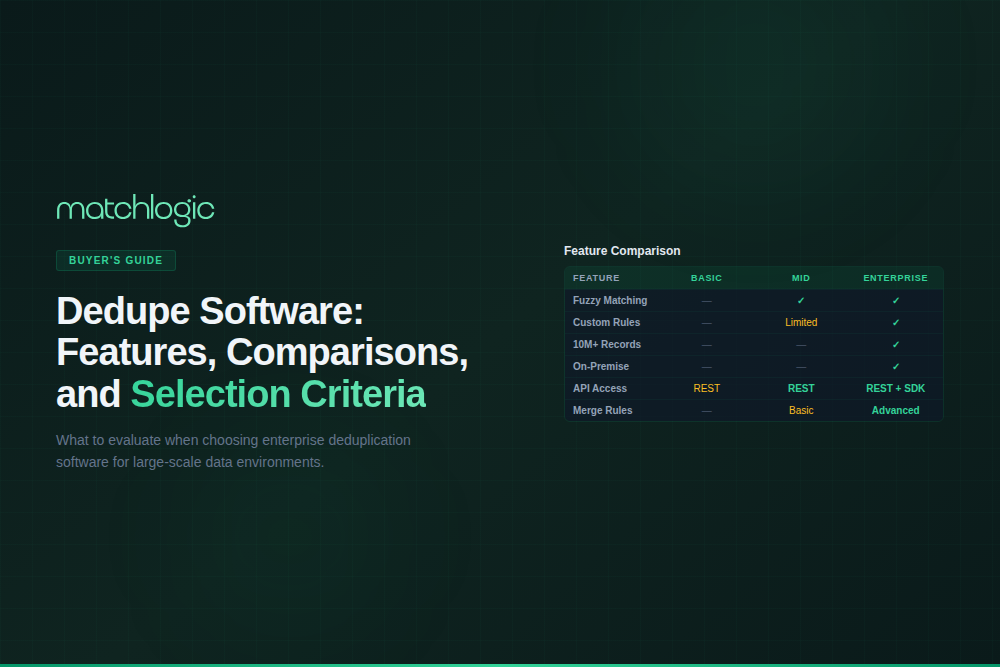

The table below compares key enterprise dedupe software capabilities across seven evaluation criteria. This comparison focuses on record-level deduplication tools, not storage or backup deduplication appliances.

| Feature / Criteria | MatchLogic | Data Ladder (DME) | WinPure | Informatica DQ | Openprise |
|---|---|---|---|---|---|
| Fuzzy + Probabilistic Matching | Yes (layered engine) | Yes (proprietary) | Yes (phonetic + fuzzy) | Yes (cloud-based) | Limited (rule-based) |
| Configurable Survivorship | Field-level rules | Field-level rules | Basic rules | Advanced rules | Field-level rules |
| Adaptive Blocking | Yes | Yes | Manual config | Yes | No |
| On-Premise Deployment | Yes (primary model) | Yes | Yes (Windows) | Cloud-first | Cloud only |
| Scale (records tested) | 100M+ records | 8M+ (third-party tested) | Millions (vendor claim) | Enterprise-scale | CRM-focused scale |
| Audit Trail / Lineage | Full (every merge logged) | Partial | Partial | Full | Limited |
| Multi-Source Connectors | ODBC, JDBC, flat files, APIs | SQL, Hadoop, CRM, flat files | Databases, CRMs, flat files | Broad (200+ connectors) | CRM-focused (SFDC, HubSpot, Marketo) |
| Pricing Model | License-based | License + free trial | License (one-time or subscription) | Enterprise (quote-based) | Subscription |

Note: This comparison is based on publicly available product documentation, vendor websites, and independent reviews as of April 2026. Specific capabilities may vary by product edition and release version. Informatica's data quality tools have shifted toward cloud-first architectures following its 2024 acquisition discussions with Salesforce.

What Does the Enterprise Deduplication Process Look Like?

Deduplication is not a single operation. It is a pipeline with distinct stages, each requiring different configuration and oversight. Understanding this pipeline is critical for evaluating how well a dedupe tool fits your workflow.

Step 1: Data Profiling

Before matching begins, profile the data to understand field completeness, format consistency, and potential duplicate density. A 400-bed hospital system migrating from three legacy EHR systems might discover that 18% of patient records lack a date of birth, 12% have inconsistent address formats, and 8% already contain exact-match duplicates before fuzzy matching even starts. Profiling quantifies the problem and shapes the matching strategy.
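A first-pass profile can be as simple as counting empty fields and exact repeats. The sketch below assumes records are plain dictionaries with string values; the field names and sample patients are invented for illustration, and "exact" here means identical after trimming whitespace and lowercasing.

```python
from collections import Counter


def profile(records, fields):
    """Report per-field missing rates and the share of exact duplicates."""
    total = len(records)
    if total == 0:
        return {"total": 0, "missing_rate": {}, "exact_duplicate_rate": 0.0}

    def clean(value):
        return (value or "").strip().lower()

    missing_rate = {
        f: sum(1 for r in records if not clean(r.get(f))) / total
        for f in fields
    }
    # Records whose cleaned field values are all identical count as exact duplicates.
    keys = Counter(tuple(clean(r.get(f)) for f in fields) for r in records)
    exact_duplicates = sum(count - 1 for count in keys.values())

    return {
        "total": total,
        "missing_rate": missing_rate,
        "exact_duplicate_rate": exact_duplicates / total,
    }


patients = [
    {"name": "Ann Lee", "dob": "1980-02-11", "zip": "10001"},
    {"name": "ann lee ", "dob": "1980-02-11", "zip": "10001"},
    {"name": "Raj Patel", "dob": "", "zip": "60614"},
    {"name": "Maria Gomez", "dob": None, "zip": "73301"},
]
report = profile(patients, ["name", "dob", "zip"])
print(report)
```

In this toy sample, half the records lack a date of birth and one of the four is an exact repeat, which is the kind of signal that decides whether date of birth is safe to use as a matching or blocking field.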

Step 2: Standardization

Standardize field values so that matching algorithms compare like with like. Convert "St." to "Street," "Corp" to "Corporation," "NY" to "New York." Parse compound names into first, middle, and last components. Normalize phone numbers to a consistent format. This step directly improves match accuracy by eliminating surface-level differences that would otherwise generate false negatives.
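As a rough illustration of this step, the sketch below expands a small abbreviation table and normalizes phone numbers to a digits-only format with a country code. The table contents, function names, and the default country code of 1 are assumptions made for the example; production standardization normally relies on much larger reference data.

```python
import re

# Illustrative lookup table; real deployments use far larger dictionaries.
ABBREVIATIONS = {"st": "street", "corp": "corporation", "ny": "new york"}


def standardize_text(value: str) -> str:
    """Lowercase, strip punctuation, and expand known abbreviations."""
    tokens = re.findall(r"[a-z0-9]+", value.lower())
    return " ".join(ABBREVIATIONS.get(token, token) for token in tokens)


def normalize_phone(value: str, default_country: str = "1") -> str:
    """Reduce a phone number to '+<digits>', assuming 10-digit national numbers."""
    digits = re.sub(r"\D", "", value)
    if not digits:
        return ""
    if len(digits) == 10:
        digits = default_country + digits
    return "+" + digits


print(standardize_text("12 Main St."))    # 12 main street
print(standardize_text("ACME Corp"))      # acme corporation
print(normalize_phone("(212) 555-0147"))  # +12125550147
```

A blind token lookup has limits: "St." can also mean "Saint," so context-aware address parsing is usually needed before rules like these are applied at scale.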

Step 3: Blocking

Partition the dataset into candidate groups. Common blocking keys include: first three letters of last name + ZIP code, phonetic encoding of company name, or date of birth + gender. Effective blocking reduces the number of pairwise comparisons from O(n²) to a manageable number without excluding true matches from comparison.
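The first blocking key mentioned above (first three letters of last name plus ZIP code) can be sketched in a few lines. The record layout and function names are hypothetical; the point is only that candidate pairs are generated inside each block rather than across the whole dataset.

```python
from collections import defaultdict
from itertools import combinations


def blocking_key(record):
    """First three letters of the last name plus ZIP code."""
    return (record["last_name"][:3].upper(), record["zip"])


def candidate_pairs(records):
    """Yield index pairs for records that share a blocking key."""
    blocks = defaultdict(list)
    for idx, record in enumerate(records):
        blocks[blocking_key(record)].append(idx)
    for members in blocks.values():
        yield from combinations(members, 2)


people = [
    {"last_name": "Smith", "zip": "10001"},
    {"last_name": "Smithe", "zip": "10001"},
    {"last_name": "Smith", "zip": "94105"},
    {"last_name": "Jones", "zip": "10001"},
]
pairs = list(candidate_pairs(people))
print(pairs)  # only records 0 and 1 share a block
```

Four records would normally need six comparisons; this key leaves one. It also shows the trade-off: the "Smith" who moved to a different ZIP code is never compared, which is why tools often run several blocking keys and union the resulting pairs.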

Step 4: Pairwise Comparison and Scoring

Within each block, compare record pairs across multiple fields. Each field comparison generates a similarity score (for example, Jaro-Winkler score of 0.92 for name, exact match on ZIP code, Levenshtein distance of 1 on street address). The dedupe software combines these field-level scores into an overall match confidence score, typically on a 0 to 100 scale.
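One simple way to combine field-level scores is a weighted average scaled to 0 to 100. The sketch below is an assumption-laden illustration: it uses `difflib.SequenceMatcher` from the Python standard library as a stand-in for Jaro-Winkler (which is not in the standard library), and the field weights are invented rather than tuned.

```python
from difflib import SequenceMatcher

# Hypothetical weights; real systems tune or learn these per dataset.
WEIGHTS = {"name": 0.5, "street": 0.3, "zip": 0.2}
EXACT_FIELDS = {"zip"}


def similarity(a: str, b: str) -> float:
    """String similarity in [0, 1]; a stand-in for Jaro-Winkler."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def match_score(rec_a, rec_b, weights=WEIGHTS):
    """Weighted average of field similarities on a 0 to 100 scale."""
    total = 0.0
    for field, weight in weights.items():
        if field in EXACT_FIELDS:
            field_score = 1.0 if rec_a[field] == rec_b[field] else 0.0
        else:
            field_score = similarity(rec_a[field], rec_b[field])
        total += weight * field_score
    return round(100 * total / sum(weights.values()), 1)


a = {"name": "Catherine Jones", "street": "12 Main Street", "zip": "10001"}
b = {"name": "Katherine Jones", "street": "12 Main St", "zip": "10001"}
c = {"name": "Luis Ortega", "street": "400 Oak Avenue", "zip": "94105"}

print(match_score(a, b))  # high: likely the same person
print(match_score(a, c))  # low: unrelated records
```

A true probabilistic model (for example, one in the Fellegi-Sunter tradition) derives its weights from how often each field agrees among matches versus non-matches, rather than using fixed numbers like these.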

Step 5: Classification and Review

Records at or above the auto-merge threshold (for example, 90+ confidence) are merged automatically. Records in the gray zone (for example, 70 to 89) go to a manual review queue. Records below the review threshold (in this example, under 70) are classified as non-matches. The width of the gray zone and the accuracy of the automated merges are where enterprise dedupe software earns its value.
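The routing logic itself is small. This sketch uses the example thresholds from the paragraph above (90 and 70); the constant and label names are illustrative, and real thresholds should come from testing against labeled samples of your own data.

```python
from collections import Counter

AUTO_MERGE_THRESHOLD = 90  # example value from the text
REVIEW_THRESHOLD = 70      # example value from the text


def classify(score: float) -> str:
    """Route a 0-100 match score to one of three outcomes."""
    if score >= AUTO_MERGE_THRESHOLD:
        return "auto_merge"
    if score >= REVIEW_THRESHOLD:
        return "manual_review"
    return "non_match"


scores = [97.5, 91.0, 88.2, 70.0, 64.9, 12.0]
routing = Counter(classify(s) for s in scores)
print(routing)
```

Raising the auto-merge threshold shrinks the risk of false merges but grows the review queue, so the two numbers are usually tuned together against reviewer capacity.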

Step 6: Merge and Survivorship

Apply survivorship rules to create the golden record. Every field in the merged record must trace back to a source record. The merged record inherits the most complete address from Record A, the most recent phone from Record B, and the email from Record C. The audit trail logs every decision. For more detail on the merge process, see our guide to merge purge.

Case Scenario: Deduplication at a Multi-Brand Retailer

A multi-brand retailer with 12 million customer records across four e-commerce platforms and two in-store POS systems ran an initial data profile and discovered a 28% duplicate rate. The duplicates were concentrated in three categories: customers who purchased from multiple brands (same person, different accounts), data entry variations from in-store staff ("Catherine" vs. "Cathy" vs. "Kathy"), and records created through acquisition of a competitor brand.

Using a layered matching approach with phonetic encoding on names, Jaro-Winkler similarity on addresses, and exact matching on email domains, the retailer reduced the duplicate rate to 2.1% after the first automated pass. The manual review queue contained 14,000 records (0.12% of the total), primarily edge cases involving family members at the same address. Post-deduplication, the retailer reclassified its actual unique customer count from 12 million to 8.6 million, a 28.3% reduction that fundamentally changed its customer lifetime value calculations, marketing segmentation, and loyalty program economics.

What Are the Most Common Mistakes When Selecting Dedupe Software?

Enterprise buyers frequently make three predictable errors during the evaluation process that lead to failed implementations or expensive platform switches within 12 to 18 months.

Mistake 1: Evaluating with Clean Sample Data

Vendors provide demo datasets that showcase their matching engine's strengths. Your data is different: inconsistent formats, missing fields, multilingual names, legacy system artifacts. Always test dedupe software against a representative sample of your actual production data, including its messiest segments. A tool that scores 98% accuracy on the vendor's sample and 72% on your data is not a 98%-accurate tool.

Mistake 2: Ignoring Survivorship Complexity

Many evaluations focus entirely on match accuracy and ignore what happens after the match. If the tool cannot handle field-level survivorship rules, conditional logic ("use this source for financial data, that source for contact data"), and exception handling for conflicting values, the merge process will either lose data or require extensive manual intervention.

Mistake 3: Choosing Cloud When Compliance Requires On-Premise

Cloud-hosted deduplication tools require that your data leave your network. For organizations subject to HIPAA, GDPR data residency requirements, or internal data governance policies that prohibit third-party data transfers, this disqualifies cloud-only platforms regardless of their matching capabilities. Evaluate deployment model as a pass/fail criterion before evaluating features.

What Are the Different Types of Record Deduplication?

Record-level deduplication is not a single operation. The type of deduplication you need depends on whether you are cleaning within a single system, linking across systems, or maintaining ongoing data quality. The table below defines each type and its primary use case.

| Deduplication Type | Definition | Primary Use Case |
|---|---|---|
| Intra-source deduplication | Identifies and merges duplicates within a single database, CRM, or data system. | Cleaning a Salesforce instance with 200K+ contacts accumulated over 5 years. |
| Cross-source deduplication | Matches and links records across two or more separate systems without a shared unique identifier. | Linking customer records from an ERP, CRM, and marketing automation platform into a unified view. |
| Real-time (inline) deduplication | Checks each incoming record against the existing master dataset at the point of entry. | Preventing duplicates during web form submissions, call center entries, or API-based data ingestion. |
| Batch deduplication | Processes the full dataset (or a defined subset) in a scheduled run, typically nightly or weekly. | Quarterly database cleanup before marketing campaigns or annual compliance audits. |
| Incremental deduplication | Matches only new or modified records against the existing master, reducing processing time. | Daily sync of new CRM leads against a 10M-record customer master to catch duplicates within hours of creation. |

Most enterprise environments require a combination of batch and incremental deduplication. The initial batch run cleans the historical backlog. Incremental runs maintain quality going forward. Real-time deduplication at the point of data entry is the highest-maturity approach, preventing duplicates from ever entering the system. MatchLogic supports all three modes through its API-driven architecture and configurable scheduling.
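Incremental and real-time deduplication share one idea: keep the master indexed by blocking key so each new record is compared only against its own block. The sketch below is a simplified illustration, not MatchLogic's API. The class, the blocking key, the 0.85 threshold, and the use of `difflib.SequenceMatcher` for name similarity are all assumptions.

```python
from collections import defaultdict
from difflib import SequenceMatcher


def block_key(rec):
    """Hypothetical blocking key: last-name prefix plus ZIP code."""
    return (rec["last_name"][:3].upper(), rec["zip"])


def full_name(rec):
    return f'{rec["first_name"]} {rec["last_name"]}'.lower()


class MasterIndex:
    """In-memory master keyed by block, for point-of-entry checks."""

    def __init__(self, records=()):
        self._blocks = defaultdict(list)
        for rec in records:
            self.add(rec)

    def add(self, rec):
        self._blocks[block_key(rec)].append(rec)

    def find_duplicates(self, rec, threshold=0.85):
        """Return master records in the same block with a similar name."""
        name = full_name(rec)
        return [
            other for other in self._blocks.get(block_key(rec), [])
            if SequenceMatcher(None, name, full_name(other)).ratio() >= threshold
        ]


def ingest(index, rec):
    """Add the record only if no likely duplicate exists; return any hits."""
    hits = index.find_duplicates(rec)
    if not hits:
        index.add(rec)
    return hits


master = MasterIndex([
    {"first_name": "Catherine", "last_name": "Jones", "zip": "10001"},
])
print(ingest(master, {"first_name": "Katherine", "last_name": "Jones", "zip": "10001"}))
print(ingest(master, {"first_name": "Luis", "last_name": "Ortega", "zip": "94105"}))
```

The first call returns the existing Catherine Jones record as a likely duplicate; the second returns an empty list and adds the new record to the master. A batch run differs only in that it compares every record within each block instead of one incoming record at a time.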

Frequently Asked Questions

What is the difference between dedupe software and data matching software?

Dedupe software is a subset of data matching software. Data matching identifies related records across or within datasets for purposes including linkage, enrichment, and deduplication. Dedupe software specifically focuses on the identification and resolution (merge, link, or flag) of duplicate records representing the same real-world entity. All dedupe software uses data matching; not all data matching software is designed for deduplication workflows.

How much does enterprise dedupe software cost?

Pricing varies significantly by vendor and deployment model. CRM-specific plugins (like Salesforce-native tools) range from $5,000 to $25,000 per year. Mid-market platforms with fuzzy matching capabilities typically cost $25,000 to $75,000 annually. Enterprise platforms with on-premise deployment, advanced matching, and multi-source connectors range from $50,000 to $250,000+ depending on record volume and feature requirements. Always factor in implementation, training, and ongoing support when calculating total cost of ownership.

Can dedupe software handle multilingual data?

Enterprise-grade dedupe software supports multilingual data through locale-specific phonetic algorithms, Unicode-aware string comparison, and configurable transliteration rules. For example, matching "Muller" and "Müller" or "Beijing" and "北京" requires algorithms designed for specific character sets and naming conventions. Not all tools support this; verify multilingual matching on your specific languages during evaluation.
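The "Muller" versus "Müller" case can be handled with Unicode normalization from the Python standard library. The sketch below decomposes accented characters and drops the combining marks before comparison; the function name is illustrative.

```python
import unicodedata


def fold_diacritics(text: str) -> str:
    """Decompose characters (NFKD), drop combining marks, and casefold."""
    decomposed = unicodedata.normalize("NFKD", text)
    stripped = "".join(ch for ch in decomposed if not unicodedata.combining(ch))
    return stripped.casefold()


print(fold_diacritics("Müller") == fold_diacritics("Muller"))  # True
print(fold_diacritics("北京") == fold_diacritics("Beijing"))    # False
```

Folding diacritics does nothing for cross-script pairs such as "Beijing" and "北京", and it does not cover the German convention of writing "Müller" as "Mueller." Those cases need transliteration tables or locale-specific rules, which is why testing on your own languages matters.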

How long does a typical enterprise deduplication project take?

Timeline depends on data volume, number of source systems, and the complexity of survivorship rules. A single-source CRM deduplication with 500,000 records can be completed in 2 to 4 weeks including profiling, configuration, testing, and production run. A multi-source project spanning 5+ systems and 20 million records typically requires 8 to 16 weeks. The matching engine itself processes millions of records in minutes to hours; the majority of project time is spent on data profiling, rule configuration, and manual review of edge cases.

What is a golden record in deduplication?

A golden record is the single, authoritative version of an entity created by merging the best available data from all duplicate records. The golden record contains the most complete, most accurate, and most current field values as determined by survivorship rules. For example, if three duplicate customer records exist, the golden record might combine the most recent email from Record A, the most complete mailing address from Record B, and the original account creation date from Record C.

Does deduplication delete records permanently?

Properly configured dedupe software does not permanently delete source records. Non-destructive deduplication links duplicates to a golden record while retaining the original records in an archive or audit log. This approach preserves data lineage, supports regulatory compliance requirements (including GDPR right-of-access obligations), and allows merges to be reversed if rules are later modified. Any tool that permanently deletes records without an undo mechanism is unsuitable for enterprise use.
