What Is Data Deduplication Software?

Key Takeaways

- ✓Data deduplication software identifies and resolves duplicate records using fuzzy, phonetic, and probabilistic matching, not just exact-match rules.

- ✓According to a Black Book Research survey, healthcare organizations average an 18% duplicate record rate, costing $1,950 per duplicate inpatient stay.

- ✓Enterprise deduplication requires both one-time batch cleanup and continuous monitoring to prevent duplicate re-accumulation.

- ✓On-premise deployment is often critical for regulated industries where HIPAA, GDPR, or SOX compliance obligations restrict data transfers to external cloud environments.

- ✓A deduplication maturity model spans five levels: from reactive manual cleanup to proactive, API-driven prevention at the point of data entry.

Data deduplication software is an enterprise data quality platform that detects, flags, and resolves duplicate records within and across databases, CRMs, data warehouses, and other structured data systems. It uses a combination of deterministic rules, fuzzy matching algorithms, phonetic encoding, and probabilistic scoring to identify records that represent the same real-world entity (person, organization, location, or product) even when those records contain misspellings, abbreviations, formatting inconsistencies, or missing fields.

Many deduplication platforms also support batch merge/purge workflows for consolidating multiple source files into a single master list.

The core function separates data deduplication software from basic "find exact duplicates" features built into tools like Excel, Salesforce, or HubSpot. Native CRM deduplication relies on identical field values: same email, same phone number. Enterprise data deduplication software catches the other 30% to 40% of duplicates, the ones where "Robert J. Smith at 123 Main St." and "Bob Smith, 123 Main Street, Apt. 2B" are actually the same person. For a full explanation of techniques and matching methods, see our data deduplication guide.

According to a 2025 IBM Institute for Business Value report, over 25% of organizations estimate data quality problems cost them more than $5 million annually. Gartner has predicted that through 2026, 60% of AI projects will be abandoned due to data that is not AI-ready, with duplicates and inconsistencies among the primary causes. For enterprises building customer 360 views, running AI/ML models, or preparing for system migrations, deduplication is not an optional cleanup task; it is infrastructure.

Why Do Native CRM and Database Tools Fall Short?

Salesforce includes a duplicate management feature that checks for exact matches on email, name, and phone at the point of record creation. HubSpot offers a similar tool that flags contacts with identical email addresses. These features catch the obvious duplicates, perhaps 60% to 70% of the total, and miss everything else.

The gap is structural. Native tools compare single fields using exact-match or basic similarity. Enterprise deduplication software compares multiple fields simultaneously, weights each field differently based on reliability (email is more reliable than name; ZIP code is more reliable than city), and produces a composite match score. A record pair that scores 45% on name similarity alone might score 92% when address, phone, and account number are factored in.

Native tools also lack survivorship logic. When Salesforce merges two contacts, it applies a simple "most recently modified wins" rule. Enterprise scenarios demand field-level control: keep the email from System A, the phone from System B, and the address from whichever source was updated most recently. Without that granularity, every automated merge risks overwriting good data with bad data.
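Field-level survivorship can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's implementation: the source names (`system_a`, `system_b`), the field names, and the rule format are all hypothetical. Each field either follows an ordered source preference or takes the value from the most recently updated record, and blank values are skipped.

```python
from datetime import date

# Hypothetical records from two source systems; field names are illustrative.
record_a = {"source": "system_a", "email": "rsmith@example.com", "phone": "",
            "address": "123 Main St", "updated": date(2023, 5, 1)}
record_b = {"source": "system_b", "email": "", "phone": "5558675309",
            "address": "123 Main Street, Apt. 2B", "updated": date(2024, 2, 10)}

# Per-field rules (assumed format): an ordered source preference, or "most_recent".
SURVIVORSHIP_RULES = {
    "email": ["system_a", "system_b"],
    "phone": ["system_b", "system_a"],
    "address": "most_recent",
}

def build_golden_record(records, rules):
    """Pick each field's surviving value and note which source supplied it."""
    by_source = {r["source"]: r for r in records}
    golden, lineage = {}, {}
    for field, rule in rules.items():
        if rule == "most_recent":
            candidates = sorted(records, key=lambda r: r["updated"], reverse=True)
        else:
            candidates = [by_source[s] for s in rule if s in by_source]
        # Take the first non-blank value in preference order.
        for rec in candidates:
            if rec.get(field):
                golden[field] = rec[field]
                lineage[field] = rec["source"]
                break
    return golden, lineage

golden, lineage = build_golden_record([record_a, record_b], SURVIVORSHIP_RULES)
print(golden)
print(lineage)
```

The `lineage` dictionary records which source contributed each surviving value, which is the raw material for an audit trail.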

How Does Enterprise Data Deduplication Software Work?

Enterprise deduplication follows a structured pipeline. Each stage builds on the output of the previous stage, and skipping any step degrades the accuracy of downstream results.

Stage 1: Connect and Ingest

The platform connects to every data source in scope: CRMs, ERPs, data warehouses, flat files, APIs, and cloud applications. A mid-size financial services firm might connect Salesforce (customer records), SAP (billing), a legacy AS/400 system (policy data), and three acquired-company databases. The platform normalizes schemas across these sources into a unified record format for comparison.

Stage 2: Profile and Assess

Before any matching begins, the software profiles the ingested data. Profiling reveals field completeness rates (for example, 94% of records have an email, but only 68% have a date of birth), format consistency, value distributions, and a preliminary estimate of duplicate density. This stage prevents wasted effort: if 30% of address fields are blank, address-based blocking will miss those records entirely, and the matching strategy must account for that gap.
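A completeness profile of the kind described above can be approximated with a short Python function. The sample records and field names below are hypothetical, and the sketch assumes that `None` and the empty string both count as missing.

```python
# Hypothetical sample of ingested records; None or "" counts as missing.
records = [
    {"email": "a@example.com", "dob": "1980-01-01", "address": "1 Elm St"},
    {"email": "b@example.com", "dob": None, "address": ""},
    {"email": "", "dob": "1975-06-30", "address": "9 Oak Ave"},
    {"email": "d@example.com", "dob": None, "address": None},
]

def completeness(rows, fields):
    """Return the share of rows with a non-blank value for each field."""
    total = len(rows)
    if total == 0:
        return {f: 0.0 for f in fields}
    return {
        f: sum(1 for r in rows if r.get(f) not in (None, "")) / total
        for f in fields
    }

rates = completeness(records, ["email", "dob", "address"])
for field, rate in rates.items():
    print(f"{field}: {rate:.0%} complete")
```

A field with a low completeness rate is a weak candidate for a blocking key, because records with a blank value never land in the same block as their duplicates.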

Stage 3: Standardize and Cleanse

The platform normalizes values so that matching algorithms compare equivalent representations. "Corp." becomes "Corporation," "St" becomes "Street," "NY" becomes "New York," and "(555) 867-5309" becomes "5558675309." Name parsing splits "Dr. Robert James Smith III" into title, first, middle, last, and suffix components. This step alone can increase match accuracy by 15% to 25%, because many apparent non-matches are simply formatting differences.
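The normalizations above can be sketched with the Python standard library. The abbreviation table, title list, and suffix list here are deliberately tiny and hypothetical; a production platform would rely on much larger dictionaries and on context (for example, "St" can also mean "Saint").

```python
import re

# Small illustrative lookups (assumptions, not a real reference dictionary).
ABBREVIATIONS = {"corp": "Corporation", "st": "Street", "ny": "New York"}
TITLES = {"dr", "mr", "mrs", "ms"}
SUFFIXES = {"jr", "sr", "ii", "iii", "iv"}

def expand_abbreviations(text):
    """Replace known abbreviations token by token, ignoring trailing periods and commas."""
    out = []
    for tok in text.split():
        key = tok.rstrip(".,").lower()
        out.append(ABBREVIATIONS.get(key, tok))
    return " ".join(out)

def normalize_phone(raw):
    """Strip everything except digits."""
    return re.sub(r"\D", "", raw)

def parse_name(full):
    """Split a full name into title, first, middle, last, and suffix."""
    parts = full.split()
    name = {"title": "", "first": "", "middle": "", "last": "", "suffix": ""}
    if parts and parts[0].rstrip(".").lower() in TITLES:
        name["title"] = parts.pop(0)
    if parts and parts[-1].rstrip(".").lower() in SUFFIXES:
        name["suffix"] = parts.pop()
    if parts:
        name["first"] = parts.pop(0)
    if parts:
        name["last"] = parts.pop()
    name["middle"] = " ".join(parts)
    return name

print(expand_abbreviations("Acme Corp."))
print(normalize_phone("(555) 867-5309"))
print(parse_name("Dr. Robert James Smith III"))
```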

Stage 4: Block and Index

Blocking partitions the dataset into comparison groups to make pairwise matching computationally feasible. Comparing every record to every other record in a 10-million-row dataset would require approximately 50 trillion comparisons. Blocking on the first three characters of last name plus ZIP code reduces this to a manageable number. The best platforms support multiple, overlapping blocking keys (so a typo in the last name does not prevent a match) and adaptive blocking that selects optimal keys based on data characteristics.
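The arithmetic and the effect of overlapping blocking keys can be illustrated in Python. The records are hypothetical, and the second key (first letter of last name plus ZIP code) is an assumption chosen only to show how a looser key recovers a pair that a typo would otherwise hide.

```python
from collections import defaultdict
from itertools import combinations

def pair_count(n):
    """Number of unordered record pairs among n records: n(n-1)/2."""
    return n * (n - 1) // 2

print(pair_count(10_000_000))  # 49999995000000, roughly 50 trillion

# Hypothetical records; "Smyth" stands in for a typo of "Smith".
records = [
    {"id": 1, "last": "Smith", "zip": "10001"},
    {"id": 2, "last": "Smyth", "zip": "10001"},
    {"id": 3, "last": "Smith", "zip": "10001"},
    {"id": 4, "last": "Jones", "zip": "94105"},
]

def key_last3_zip(r):
    """First three characters of last name plus ZIP code."""
    return (r["last"][:3].lower(), r["zip"])

def key_initial_zip(r):
    """Looser, assumed second key: first letter of last name plus ZIP code."""
    return (r["last"][:1].lower(), r["zip"])

def candidate_pairs(rows, key_funcs):
    """Union of within-block pairs across all blocking keys."""
    pairs = set()
    for key_func in key_funcs:
        blocks = defaultdict(list)
        for row in rows:
            blocks[key_func(row)].append(row["id"])
        for ids in blocks.values():
            pairs.update(combinations(sorted(ids), 2))
    return pairs

single_key = candidate_pairs(records, [key_last3_zip])
multi_key = candidate_pairs(records, [key_last3_zip, key_initial_zip])
print(sorted(single_key))
print(sorted(multi_key))
```

With the single key, records 1 and 2 are never compared because "smi" and "smy" fall into different blocks. Adding the second key brings that pair back into scope while still skipping record 4 entirely.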

Stage 5: Match and Score

Within each block, the software compares record pairs across multiple fields using layered algorithms. A typical configuration might apply Jaro-Winkler similarity on names, Levenshtein distance on addresses, exact matching on email, and phonetic encoding (Double Metaphone) as a secondary name check. Each field comparison produces a similarity score. The platform combines these into a weighted composite score, where higher-reliability fields (email, SSN, account number) carry more weight than lower-reliability fields (name, city).
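A weighted composite score might look like the following Python sketch. To stay within the standard library it uses a normalized Levenshtein similarity for every fuzzy field, as a stand-in for the Jaro-Winkler and phonetic comparisons named above. The weights, the field names, and the choice to skip fields that are blank on either side are assumptions for illustration.

```python
def levenshtein(a, b):
    """Classic dynamic-programming edit distance."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + cost))
        prev = curr
    return prev[-1]

def similarity(a, b):
    """Edit distance scaled to a 0..1 similarity (1.0 means identical)."""
    a, b = a.lower().strip(), b.lower().strip()
    longest = max(len(a), len(b))
    return 1.0 if longest == 0 else 1 - levenshtein(a, b) / longest

# Hypothetical weights: higher-reliability fields carry more weight.
WEIGHTS = {"email": 0.40, "name": 0.20, "address": 0.25, "phone": 0.15}
EXACT_FIELDS = {"email", "phone"}

def composite_score(r1, r2, weights=WEIGHTS):
    """Weighted score on a 100-point scale over fields present in both records."""
    total, weight_used = 0.0, 0.0
    for field, w in weights.items():
        v1, v2 = r1.get(field, ""), r2.get(field, "")
        if not v1 or not v2:
            continue  # skip fields missing on either side, then renormalize
        if field in EXACT_FIELDS:
            s = 1.0 if v1.lower() == v2.lower() else 0.0
        else:
            s = similarity(v1, v2)
        total += w * s
        weight_used += w
    return 100 * total / weight_used if weight_used else 0.0

rec_a = {"name": "Robert Smith", "email": "rsmith@example.com",
         "address": "123 Main Street", "phone": "5558675309"}
rec_b = {"name": "Bob Smith", "email": "rsmith@example.com",
         "address": "123 Main Street Apt 2B", "phone": "5558675309"}

print(round(similarity(rec_a["name"], rec_b["name"]), 2))
print(round(composite_score(rec_a, rec_b), 1))
```

In this sketch the name similarity on its own is weak, but the matching email and phone pull the composite score well above it, which is the behavior the weighting is meant to produce.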

Stage 6: Classify, Review, and Merge

Records above the auto-merge threshold (typically 90+ on a 100-point scale) are merged automatically using survivorship rules. Records in the review zone (typically 70 to 89) are routed to a manual review queue with side-by-side record comparisons and match explanations. Records below the review threshold are classified as distinct entities. The merged output is a golden record for each unique entity, with a full audit trail documenting which source contributed which field value.
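The three-way classification reduces to two configurable thresholds. The Python sketch below uses the typical values quoted above (90 and 70) as defaults; the function and label names are hypothetical.

```python
AUTO_MERGE = 90    # at or above: merge automatically
REVIEW_FLOOR = 70  # at or above, but below AUTO_MERGE: manual review

def classify(score, auto_merge=AUTO_MERGE, review_floor=REVIEW_FLOOR):
    """Map a 0-100 composite match score to a disposition."""
    if score >= auto_merge:
        return "auto_merge"
    if score >= review_floor:
        return "manual_review"
    return "distinct"

for score in (95, 82, 45):
    print(score, classify(score))
```

Raising `review_floor` or lowering `auto_merge` narrows the review zone, trading manual effort for a higher risk of incorrect automatic decisions.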

What Do Duplicate Records Actually Cost?

Duplicate records impose measurable financial costs across every department that relies on data. The costs vary by industry, but the pattern is consistent: duplicates inflate operating expenses, reduce revenue, and create compliance risk.

| Industry | Specific Cost of Duplicates | Source |
|---|---|---|
| Healthcare | $1,950 per duplicate record per inpatient stay; average hospital spends $1.5M/year resolving duplicates; 33% of denied claims linked to patient identification errors | Black Book Research survey of 1,392 health technology managers |
| Cross-Industry | Over 25% of organizations lose more than $5M annually from poor data quality; 7% report losses exceeding $25M | IBM Institute for Business Value, 2025 CDO Study |
| Marketing/Sales | 10% average duplicate rate inflates campaign costs, distorts customer counts, and generates 32% wasted time on data issues vs. revenue activities | Edgewater Consulting (duplicate rate); TDAN (wasted time) |
| AI/ML Initiatives | 60% of AI projects predicted to be abandoned by 2026 due to data not being AI-ready, with duplicates as a primary quality issue | Gartner (as cited by IBM) |

These figures make the ROI calculation straightforward. A 500-bed hospital system with an 18% duplicate rate across 2 million patient records carries approximately 360,000 duplicates. At $1,950 per duplicate inpatient encounter, even resolving a fraction of those duplicates generates savings that dwarf the cost of any enterprise deduplication platform.
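The hospital example can be worked through in Python. The record count, duplicate rate, and per-stay cost come from the figures above. The share of duplicates that would actually surface during an inpatient stay is not given in the source, so `share_with_inpatient_stay` is a purely hypothetical input that a buyer would replace with their own estimate.

```python
TOTAL_RECORDS = 2_000_000
DUPLICATE_RATE_PCT = 18
COST_PER_DUPLICATE_STAY = 1_950  # dollars, Black Book Research figure

duplicates = TOTAL_RECORDS * DUPLICATE_RATE_PCT // 100
print(duplicates)  # 360000

def estimated_savings(share_with_inpatient_stay):
    """Dollars avoided if this share of duplicates would have hit an inpatient stay.

    The share is an assumption supplied by the caller, not a sourced figure.
    """
    return round(duplicates * share_with_inpatient_stay * COST_PER_DUPLICATE_STAY)

# Illustrative only: a hypothetical 1% share.
print(estimated_savings(0.01))
```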

Where Does Your Organization Fall on the Deduplication Maturity Scale?

Not every organization needs the same level of deduplication capability. A five-level maturity model helps teams assess their current state and plan a practical path forward.

| Level | Name | Description | Typical Tools |
|---|---|---|---|
| 1 | Reactive | No formal deduplication process. Duplicates discovered ad hoc when reports look wrong or customers complain. | Manual Excel review, native CRM merge |
| 2 | Periodic Cleanup | Scheduled batch deduplication (quarterly or before campaigns). Exact-match rules with limited fuzzy matching. | CRM plugins, basic dedupe tools |
| 3 | Systematic | Defined deduplication workflow with profiling, standardization, fuzzy matching, and survivorship rules. Cross-source matching across 2 to 3 systems. | Mid-market deduplication platforms |
| 4 | Continuous | Incremental deduplication runs daily or on data change events. Full audit trails. Multi-source matching across 5+ systems. Manual review queues for edge cases. | Enterprise deduplication software (e.g., MatchLogic) |
| 5 | Preventive | Real-time, API-driven deduplication at the point of data entry. New records checked against the master before they are committed. Near-zero duplicate creation rate. | Enterprise platform with API integration and real-time matching |

Case Scenario: Deduplication Before an EHR Migration

A 400-bed hospital system preparing to migrate from three legacy EHR platforms to a single Epic instance discovered that its combined patient master index contained 3.2 million records. An initial data profile using automated tools revealed an estimated duplicate rate of 16.4%, approximately 525,000 records that represented patients already in the system under a different medical record number.

The deduplication team configured matching rules across five fields: first name (Jaro-Winkler, weight 20%), last name (Jaro-Winkler, weight 25%), date of birth (exact match, weight 25%), SSN last four digits (exact match, weight 20%), and address (composite Levenshtein, weight 10%). Blocking keys combined the first two characters of last name with birth year. The initial automated pass resolved 412,000 records (78.5% of estimated duplicates) above the 92-point auto-merge threshold. Another 68,000 records (13%) entered the manual review queue. The remaining 45,000 required clinical review due to conflicting medication histories.
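The configuration in this scenario can be written down as plain data, which makes it easy to sanity-check. The Python sketch below is a hypothetical representation, not the format of any particular product; it assumes dates of birth are stored as `YYYY-MM-DD` strings and uses invented field keys.

```python
# Field rules from the scenario; weights are percentages of the composite score.
MATCH_CONFIG = {
    "first_name": {"method": "jaro_winkler", "weight": 20},
    "last_name": {"method": "jaro_winkler", "weight": 25},
    "date_of_birth": {"method": "exact", "weight": 25},
    "ssn_last4": {"method": "exact", "weight": 20},
    "address": {"method": "composite_levenshtein", "weight": 10},
}
AUTO_MERGE_THRESHOLD = 92

def blocking_key(record):
    """First two characters of last name plus birth year (assumes YYYY-MM-DD)."""
    return record["last_name"][:2].upper() + record["date_of_birth"][:4]

total_weight = sum(rule["weight"] for rule in MATCH_CONFIG.values())
print(total_weight)  # 100

# Outcome counts reported in the scenario.
auto_merged, manual_review, clinical_review = 412_000, 68_000, 45_000
print(auto_merged + manual_review + clinical_review)  # 525000

print(blocking_key({"last_name": "Smith", "date_of_birth": "1961-04-12"}))  # SM1961
```

Checking that the weights sum to 100 and that the three outcome buckets add up to the estimated duplicate count is a cheap guard against configuration and reporting errors.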

Post-deduplication, the hospital's actual unique patient count dropped from 3.2 million to 2.74 million. The migration to Epic proceeded with a clean master patient index, eliminating what the project team estimated would have been $2.3 million in post-migration duplicate remediation costs based on the Black Book Research figure of $1,950 per duplicate inpatient record.

What Should Enterprise Buyers Prioritize When Evaluating Data Deduplication Software?

The evaluation criteria for data deduplication software depend on your organization's maturity level, data volume, regulatory environment, and number of source systems. However, five priorities apply universally.

First, test on your own data. Vendor demos use curated datasets that showcase matching strengths. Your data contains inconsistencies, missing fields, multilingual entries, and legacy artifacts that no demo dataset replicates. Request a proof-of-concept against a representative sample of your production data before signing any contract.

Second, evaluate survivorship logic as carefully as matching accuracy. Identifying duplicates is necessary but not sufficient. The merge process determines whether the golden record is actually better than any individual source record. Field-level survivorship with conditional logic ("prefer CRM email unless blank, then use marketing automation email") is the minimum requirement.

Third, confirm deployment model compatibility. For regulated industries, on-premise or private cloud deployment is often a non-negotiable compliance requirement. Tools that require sending data to vendor-hosted cloud infrastructure may be disqualified before feature evaluation begins. For a more detailed comparison of evaluation criteria, see our dedupe software options.

Fourth, plan for continuous operations. One-time batch deduplication solves the backlog but does not prevent re-accumulation. Evaluate whether the platform supports incremental matching, scheduled runs, and real-time API integration for ongoing duplicate prevention.

Fifth, require full audit trails. Every merge decision, every survivorship rule applied, every manual review outcome must be logged with timestamps and user attribution. Regulatory auditors will ask for this. Internal data governance teams will ask for this. If the tool cannot produce it, it is not enterprise-ready. For a broader view of how deduplication fits into the data matching category, see our data matching software.

Frequently Asked Questions

What is the difference between data deduplication software and data cleansing software?

Data deduplication software specifically targets duplicate records: finding, scoring, and resolving records that represent the same entity. Data cleansing software addresses a broader set of quality issues including formatting inconsistencies, invalid values, missing fields, and standardization errors. Most enterprise deduplication platforms include cleansing and standardization as preprocessing steps because clean data produces more accurate matching. The two categories overlap but are not interchangeable.

How does data deduplication software handle false positives?

False positives (records incorrectly flagged as duplicates) are managed through configurable match thresholds and manual review queues. Records above the auto-merge threshold are merged automatically. Records in the gray zone (below auto-merge but above the dismissal threshold) are routed to human reviewers who see side-by-side comparisons with field-level match scores. The width of the gray zone is configurable: narrower zones mean more automation but higher false-positive risk; wider zones mean more manual review but fewer errors.

Can data deduplication software work with unstructured data?

Record-level deduplication software operates on structured or semi-structured data: database tables, CSV files, CRM records, and similar tabular formats. Unstructured data (PDFs, emails, free-text notes) typically requires extraction and parsing into structured fields before deduplication can be applied. Some platforms include text extraction capabilities; others require a separate ETL or data preparation step.

How often should an enterprise run deduplication?

Frequency depends on the rate at which new data enters the system. A CRM that ingests 5,000 new leads per week should run incremental deduplication daily. A data warehouse refreshed monthly can run deduplication on each refresh cycle. Organizations at maturity Level 5 run real-time deduplication at the point of data entry, preventing duplicates from ever being committed. The goal is to match the deduplication cadence to the data ingestion cadence.

Is open-source deduplication software viable for enterprise use?

Open-source tools like Dedupe.io (Python library) and OpenEMPI (healthcare-focused) provide matching algorithms but lack the surrounding enterprise infrastructure: GUI configuration, survivorship management, multi-source connectors, audit trails, and vendor support. They are viable for teams with strong data engineering capabilities and modest data volumes. For organizations processing millions of records across multiple source systems with regulatory audit requirements, commercial enterprise platforms provide the operational reliability and support that open-source alternatives do not.

What record volumes can enterprise deduplication software handle?

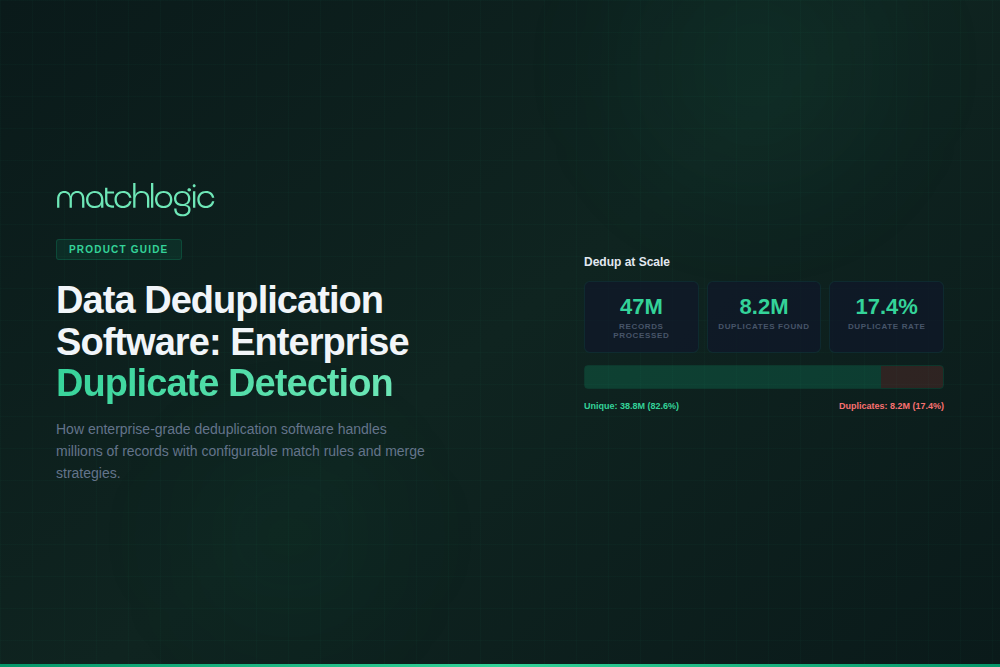

Enterprise platforms are designed for datasets ranging from hundreds of thousands to hundreds of millions of records. Performance depends on the matching algorithm complexity, number of blocking keys, and hardware resources. MatchLogic processes 10 million records in minutes on standard enterprise hardware. The key metric to evaluate is not just throughput on a single run, but sustained performance across incremental runs as the master dataset grows over time.
