Data Cleansing vs. Data Scrubbing vs. Data Washing: Definitions and Differences

Data cleansing, data scrubbing, and data washing are three terms that describe overlapping processes for improving data quality. Data cleansing is the broadest term, covering any structured program that identifies and corrects errors in datasets, including governance, manual review, and automated tools. Data scrubbing refers specifically to the automated, technical process of detecting and fixing errors during data import, transformation, or batch processing. Data washing is the least formally defined term; it typically describes light-touch cleanup, such as removing obvious formatting errors or stripping invalid characters from a dataset before analysis.

In practice, most enterprise software vendors use these terms interchangeably in their marketing. The operational differences matter more than the labels. What enterprise buyers need is a platform that combines automated error detection, rule-based standardization, deduplication, and ongoing monitoring in a governed, auditable workflow, regardless of whether the vendor calls it "cleansing," "scrubbing," or something else entirely. For a complete treatment of the broader discipline, see our data cleansing guide.

Key Takeaways

- Data cleansing, scrubbing, and washing are overlapping terms for data quality improvement. Most vendors and practitioners use them interchangeably.

- The useful distinction: cleansing = broad program (governance + tools + process); scrubbing = automated technical execution; washing = light-touch pre-analysis cleanup.

- Enterprise buyers should evaluate tool capabilities, not terminology. The critical features are profiling, standardization, deduplication, audit trails, and pipeline integration.

- "Data washing" lacks a formal industry definition and does not appear in the DAMA-DMBOK framework. It is primarily a colloquial term.

What Is Data Cleansing?

Data cleansing (also called data cleaning) is the structured process of identifying and correcting errors, inconsistencies, and inaccuracies across an organization's datasets to improve data quality for business use. The DAMA-DMBOK 2.0 framework treats data cleansing as a core activity within the Data Quality knowledge area, encompassing profiling, standardization, validation, enrichment, deduplication, and monitoring.

Cleansing is the most commonly used term in enterprise software and industry standards. When a data governance team builds a "data cleansing program," they are describing the full scope: policies defining quality standards, tools that automate error correction, workflows for manual review, and monitoring dashboards that track quality metrics over time. Cleansing is not a single action; it is an ongoing program.
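Profiling is usually the first step in such a program, because it shows what is wrong before any correction rule is written. The Python sketch below is a minimal illustration, not any vendor's implementation: it counts missing values, distinct values, and the most common value "shapes" in one column (letters mapped to A, digits to 9). The function names `value_shape` and `profile_column` and the sample phone values are hypothetical.

```python
import re
from collections import Counter

# Minimal profiling sketch; function names and the A/9 shape convention are illustrative.


def value_shape(value: str) -> str:
    """Map letters to 'A' and digits to '9', keeping punctuation and spaces."""
    shape = re.sub(r"[A-Za-z]", "A", value)
    return re.sub(r"[0-9]", "9", shape)


def profile_column(values: list) -> dict:
    """Summarize one column of string values; None or blank strings count as missing."""
    present = [v for v in values if v is not None and v.strip() != ""]
    return {
        "count": len(values),
        "missing": len(values) - len(present),
        "distinct": len(set(present)),
        # Most frequent formats; rare shapes often point to entry or import errors.
        "top_shapes": Counter(value_shape(v) for v in present).most_common(3),
    }


phones = ["555-0101", "555-0102", None, "(555) 0103", "", "555-0101"]
print(profile_column(phones))
```

For this sample, the profile reports two missing values, three distinct values, and two shapes: `999-9999` (three times) and `(999) 9999` (once), which flags the parenthesized entry as the outlier format.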

What Is Data Scrubbing?

Data scrubbing is the automated, technical subset of data cleansing that focuses on detecting and correcting errors during specific processing events: data import, system transfers, batch updates, or scheduled maintenance runs. Scrubbing typically involves applying deterministic rules (if value matches pattern X, replace with Y) or statistical methods to identify and fix formatting errors, invalid values, and duplicate entries.
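A deterministic rule of the form "if value matches pattern X, replace with Y" maps naturally onto regular expressions. The following Python sketch applies an ordered list of such rules to one field across a batch of records, as might happen at import time. The rule names, patterns, and the `scrub_value`/`scrub_batch` helpers are hypothetical examples, and the statistical methods mentioned above are not covered.

```python
import re

# Hypothetical rule set: (rule_id, pattern, replacement), applied in this order.
# Each rule is deterministic: if the pattern matches, the matched text is replaced.
RULES = [
    ("collapse_spaces", re.compile(r"\s{2,}"), " "),
    ("null_tokens", re.compile(r"^(N/A|NULL|none|-)$", re.IGNORECASE), ""),
    ("us_phone_format",
     re.compile(r"^\(?(\d{3})\)?[\s.-]?(\d{3})[\s.-]?(\d{4})$"),
     r"\1-\2-\3"),
]


def scrub_value(value: str) -> str:
    """Run every rule in order; a rule changes the value only where its pattern matches."""
    for _rule_id, pattern, replacement in RULES:
        value = pattern.sub(replacement, value)
    return value


def scrub_batch(records: list, field: str) -> list:
    """Scrub one field across a batch of dict records, returning new dicts."""
    return [{**record, field: scrub_value(record[field])} for record in records]


batch = [{"id": 1, "phone": "(555) 123  4567"}, {"id": 2, "phone": "N/A"}]
print(scrub_batch(batch, "phone"))
```

Rule order matters here: whitespace is collapsed before the phone pattern is tested, so `(555) 123  4567` becomes `555-123-4567`. Because the rules are fixed and ordered, the same input always produces the same output, which is what makes scrubbing repeatable across batches.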

The term "scrubbing" carries a more technical connotation than "cleansing." When a data engineer says they need to "scrub the dataset," they usually mean running automated rules against the data to fix known error patterns before the data enters a production system. Scrubbing is an execution step within a broader cleansing program. For a detailed look at scrubbing tools, see data scrubbing software.

What Is Data Washing?

Data washing is the least formally defined of the three terms. It does not appear in the DAMA-DMBOK framework, ISO 8000, or most enterprise data management literature. The term is used colloquially to describe light-touch data cleanup: removing leading/trailing whitespace, stripping non-printable characters, fixing obvious typos, or normalizing capitalization before importing data into an analysis tool.
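Washing-level cleanup is small enough to show in a few lines. This Python sketch drops invisible characters such as zero-width spaces, collapses whitespace runs (tabs, newlines, non-breaking spaces), trims the ends, and normalizes capitalization. The `wash` function is a hypothetical illustration; it does not attempt typo correction, and it records nothing about what it changed.

```python
def wash(value: str) -> str:
    """Hypothetical light-touch cleanup for a single text value (no logging, no typo fixes)."""
    # Drop invisible characters (zero-width spaces, control codes) but keep whitespace,
    # so that words separated by tabs or newlines stay separated.
    visible = "".join(ch for ch in value if ch.isprintable() or ch.isspace())
    # str.split() with no argument splits on any Unicode whitespace, including
    # non-breaking spaces; rejoining collapses runs to one space and trims both ends.
    collapsed = " ".join(visible.split())
    # Title case is a crude normalization that suits simple name fields only.
    return collapsed.title()


print(wash("\tacme  corp\u200b\n"))
```

The function modifies the value and returns it without any trace of the original, which is exactly the in-place, undocumented style of change that distinguishes washing from governed cleansing.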

In some contexts, "data washing" carries a negative connotation similar to "whitewashing" or "greenwashing," implying superficial cleanup that makes data appear cleaner than it actually is without addressing root causes. Enterprise data teams should treat "data washing" as a descriptive label for basic preprocessing, not as a substitute for a structured cleansing program.

How Do Data Cleansing, Scrubbing, and Washing Compare?

| Attribute | Data Cleansing | Data Scrubbing | Data Washing |
|---|---|---|---|
| Scope | Broad: governance + tools + processes + monitoring across the organization. | Narrow: automated error detection and correction during specific processing events. | Minimal: basic formatting fixes and character-level cleanup before analysis. |
| Industry Recognition | DAMA-DMBOK, ISO 8000, Gartner, TDWI. Standard enterprise terminology. | Widely used in technical contexts. Recognized by DAMA-DMBOK as a subset of cleansing. | No formal definition in DAMA-DMBOK, ISO, or major analyst frameworks. Colloquial usage only. |
| Cadence | Ongoing program with continuous monitoring and quarterly reviews. | Event-driven: runs at import, on a scheduled batch, or on a pipeline trigger. | One-time: performed before a specific analysis or import task. |
| Typical Activities | Profiling, validation, standardization, deduplication, enrichment, monitoring, governance. | Rule-based error correction, format standardization, duplicate detection, invalid value replacement. | Whitespace removal, character encoding fixes, capitalization normalization, obvious typo correction. |
| Audit Trail | Required for compliance. Every change logged with rule, timestamp, and user. | Typically logged at the batch/job level; record-level logging varies by tool. | Rarely logged. Changes are usually applied in place without documentation. |
| Compliance Suitability | Can support HIPAA, SOX, and GDPR compliance when implemented with proper governance. | Can support compliance if audit logging is enabled and rules are versioned. | Insufficient for regulatory compliance: no governance, no audit trail, no monitoring. |
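The audit-trail and compliance rows are where the three approaches diverge most. As a minimal sketch, assuming records are Python dicts with an `id` key, the following code applies versioned rules and writes one log entry per changed value, capturing the rule, its version, the user, and a UTC timestamp. `Rule`, `AuditEntry`, `apply_rules_with_audit`, and the `etl_service` user are hypothetical names; a production system would persist the log rather than hold it in memory, and logging alone does not make a process compliant.

```python
import re
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical types for a record-level audit trail; names are illustrative only.


@dataclass(frozen=True)
class Rule:
    rule_id: str
    version: int          # bump whenever the pattern or replacement changes
    pattern: re.Pattern
    replacement: str


@dataclass(frozen=True)
class AuditEntry:
    record_id: str
    field: str
    old_value: str
    new_value: str
    rule_id: str
    rule_version: int
    applied_by: str
    applied_at: str       # ISO 8601 timestamp in UTC


def apply_rules_with_audit(records: list, field: str, rules: list, user: str) -> tuple:
    """Return (cleaned_records, audit_log); input records are left unmodified."""
    cleaned, log = [], []
    for record in records:
        value = record[field]
        for rule in rules:
            new_value = rule.pattern.sub(rule.replacement, value)
            if new_value != value:  # log only rules that actually changed something
                log.append(AuditEntry(
                    record_id=str(record["id"]), field=field,
                    old_value=value, new_value=new_value,
                    rule_id=rule.rule_id, rule_version=rule.version,
                    applied_by=user,
                    applied_at=datetime.now(timezone.utc).isoformat(),
                ))
                value = new_value
        cleaned.append({**record, field: value})
    return cleaned, log


rules = [
    Rule("trim_whitespace", 1, re.compile(r"^\s+|\s+$"), ""),
    Rule("state_abbrev_ny", 3, re.compile(r"^new york$", re.IGNORECASE), "NY"),
]
rows = [{"id": "c-1", "state": " new york "}, {"id": "c-2", "state": "NY"}]
cleaned, log = apply_rules_with_audit(rows, "state", rules, user="etl_service")
for entry in log:
    print(entry.record_id, entry.rule_id, entry.rule_version,
          repr(entry.old_value), "->", repr(entry.new_value))
```

Record `c-1` produces two entries (one per rule that fired) and `c-2` produces none, so the log shows exactly which versioned rule changed which value, when, and on whose behalf.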

What Should Enterprise Buyers Actually Look For?

Terminology debates distract from the practical question: does the tool solve your data quality problem in a governed, repeatable way? When evaluating vendors, focus on capabilities rather than labels. Regardless of what a vendor calls its product, five capabilities matter for enterprise data quality:

- Automated profiling, to understand what is wrong.

- Rule-based standardization, to fix format problems.

- Deduplication and matching, to eliminate duplicates.

- Audit logging and rule versioning, to satisfy compliance requirements.

- Pipeline integration, to prevent recurrence.
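Of these capabilities, deduplication and matching is the easiest to misjudge from a demo. Its simplest form, sketched here in Python, normalizes a few fields into a match key and groups records that share it, keeping the first record in each group as the survivor. The field names and helper functions are hypothetical, the normalization assumes ASCII text, and exact-key matching like this misses variants (typos, abbreviations) that fuzzy or probabilistic matching is designed to catch.

```python
import re
from collections import defaultdict

# Hypothetical exact-key deduplication sketch; assumes ASCII names and free-form phones.


def match_key(record: dict) -> tuple:
    """Normalize name (lowercase, no punctuation, single spaces) and phone (digits only)."""
    name = re.sub(r"[^a-z0-9 ]", "", record["name"].lower())
    name = " ".join(name.split())
    phone = re.sub(r"\D", "", record["phone"])
    return (name, phone)


def deduplicate(records: list) -> tuple:
    """Return (survivors, duplicates); duplicates maps a survivor id to the ids grouped with it."""
    groups = defaultdict(list)
    for record in records:
        groups[match_key(record)].append(record)
    survivors = [group[0] for group in groups.values()]
    duplicates = {
        group[0]["id"]: [r["id"] for r in group[1:]]
        for group in groups.values()
        if len(group) > 1
    }
    return survivors, duplicates


customers = [
    {"id": 1, "name": "Acme Corp.", "phone": "(555) 123-4567"},
    {"id": 2, "name": "ACME  Corp", "phone": "555.123.4567"},
    {"id": 3, "name": "Globex Inc", "phone": "555-987-6543"},
]
survivors, duplicates = deduplicate(customers)
print([r["id"] for r in survivors], duplicates)
```

Records 1 and 2 differ in punctuation, spacing, capitalization, and phone format, yet share the key `("acme corp", "5551234567")`, so record 2 is grouped under record 1. Keeping the `duplicates` mapping rather than silently discarding rows is what allows a merge to be reviewed or reversed later.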

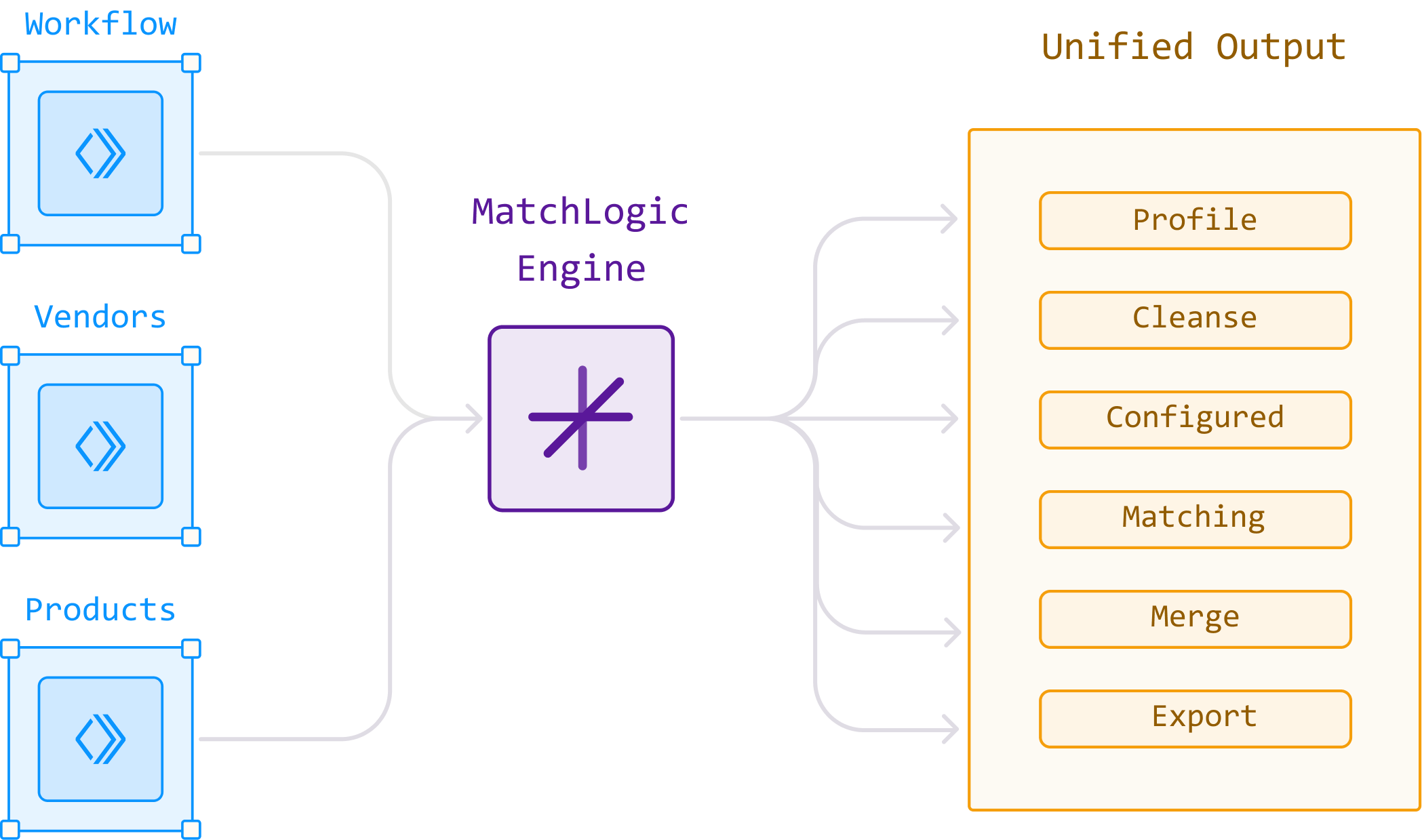

MatchLogic combines all five capabilities in a single on-premise platform. Whether you call it cleansing, scrubbing, or simply "making your data work," the result is the same: profiled, standardized, deduplicated, and monitored data with a full audit trail. For guidance on building repeatable enterprise workflows, see data cleaning for enterprise.


Frequently Asked Questions

What is the difference between data cleansing and data scrubbing?

Data cleansing is the broad, ongoing program that includes governance policies, automated tools, manual review workflows, and monitoring. Data scrubbing is the automated, technical subset focused on detecting and fixing errors during specific processing events like data imports or batch updates. Scrubbing is one execution step within a larger cleansing program.

Is data washing a real industry term?

Data washing is a colloquial term that does not appear in the DAMA-DMBOK framework, ISO 8000, or major analyst reports. It informally describes basic, light-touch cleanup like removing whitespace or fixing character encoding. Enterprise data teams should not confuse data washing with the structured, governed processes that actual data quality improvement requires.

Are data cleansing and data cleaning the same thing?

Yes. "Data cleansing" and "data cleaning" are used interchangeably across industry standards, analyst reports, and vendor documentation. Both refer to the process of identifying and correcting errors to improve data quality. The DAMA-DMBOK uses "data quality" as the overarching term and treats cleansing/cleaning as equivalent activities within that domain.

Which term should I use in vendor evaluations?

Use whichever term the vendor uses, but evaluate capabilities rather than terminology. Ask specifically about profiling, standardization, deduplication, audit logging, and pipeline integration. A vendor calling their product "data scrubbing software" that delivers all five capabilities is more useful than a vendor selling a "data cleansing platform" that only handles format standardization.
