Data Cleaning for Enterprise: Building Repeatable Data Quality Workflows

Enterprise data cleaning is the practice of building governed, automated, and repeatable workflows that detect and correct data errors across an organization's systems on an ongoing basis. Unlike one-time cleanup projects that address a backlog and then end, enterprise data cleaning embeds quality controls into data pipelines, assigns ownership through data stewardship roles, and measures outcomes against defined quality dimensions such as accuracy, completeness, consistency, and timeliness.

According to Gartner, poor data quality costs organizations an average of $12.9 million per year. The State of Enterprise Data Quality 2024 report by Anomalo found that 95% of enterprise data leaders have experienced a data quality issue that directly affected business outcomes. These costs compound because most organizations treat data cleaning as an ad hoc response to visible problems rather than a continuous operational function. This guide presents a six-phase framework for building enterprise data cleaning workflows that prevent errors from reaching downstream systems, with specific role assignments, tool evaluation criteria, and compliance considerations. For foundational context on data quality techniques, see our data cleansing guide.

Key Takeaways

- Enterprise data cleaning is a continuous operational function, not a one-time project. Organizations that treat it as a project typically see quality degrade back toward pre-cleanup levels within about 90 days.

- The DAMA-DMBOK framework identifies nine data quality dimensions. Enterprise cleaning workflows should measure at least four: accuracy, completeness, consistency, and timeliness.

- Repeatable workflows require three components: automated rules, assigned data stewardship roles, and measurable quality thresholds with alerting.

- Profiling before cleaning prevents the most common enterprise mistake: writing rules to fix assumed problems rather than measured ones.

- On-premise or private-cloud data cleaning is often a compliance requirement (not a preference) for organizations handling PHI, PII, or data subject to sovereignty regulations.

Why Does One-Time Data Cleaning Fail at Enterprise Scale?

Every enterprise data team has experienced the same cycle: a major initiative (a CRM migration, a regulatory audit, an analytics platform launch) triggers a cleaning sprint. The team spends weeks or months scrubbing records, standardizing formats, and removing duplicates. The data looks good. The project ships. Within 90 days, quality degrades back to pre-cleanup levels.

This happens because the sources of dirty data continue operating unchanged. New records enter systems with the same inconsistencies. Existing records decay as contacts change roles, companies merge, and addresses update. Research published in the MIT Sloan Management Review estimates that contact data decays at roughly 2% per month, meaning a perfectly clean database loses approximately 22% of its accuracy within a year without ongoing maintenance.
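The 22% figure comes from compounding the monthly rate, not from multiplying it by twelve (which would give 24%). A minimal Python sketch of that arithmetic, assuming a constant 2% of the still-accurate records go stale each month:

```python
def remaining_accuracy(monthly_decay: float, months: int) -> float:
    """Fraction of initially accurate records still accurate after `months`.

    Assumes a constant decay rate applied each month to the records that are
    still accurate, so losses compound rather than add up linearly.
    """
    return (1.0 - monthly_decay) ** months


if __name__ == "__main__":
    lost = 1.0 - remaining_accuracy(0.02, 12)
    print(f"Accuracy lost after 12 months: {lost:.1%}")  # 21.5%, i.e. roughly 22%
```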

The fix is architectural, not procedural. Enterprise data cleaning must operate as a continuous function embedded in data pipelines, with automated rules that fire at ingestion, scheduled batch processes that catch drift, and monitoring dashboards that surface quality degradation before it reaches reports or models.

What Data Quality Dimensions Should Enterprise Cleaning Address?

The DAMA-DMBOK 2.0 (revised in 2024) defines nine data quality dimensions that provide a standardized vocabulary for measuring and communicating quality across teams: accuracy, validity, completeness, integrity, uniqueness, timeliness, consistency, currency, and reasonableness. Not every enterprise cleaning workflow needs to address all nine simultaneously. Most organizations start with the four dimensions that have the highest operational impact.

Priority Data Quality Dimensions for Enterprise Cleaning

| Dimension | Definition | Enterprise Example | Cleaning Action |
|---|---|---|---|
| Accuracy | Data correctly represents the real-world entity or event it describes. | A customer record shows "123 Main St, New York, CA 90210" where the city and state/ZIP do not match. | Cross-field validation rules that check city/state/ZIP consistency against postal reference data. |
| Completeness | All required fields contain values; no mandatory data is missing. | 30% of vendor records in an ERP system lack a tax ID, blocking automated payment processing. | Completeness profiling to identify gaps, followed by enrichment from authoritative sources or routing to manual review. |
| Consistency | The same fact is represented the same way across all systems and records. | A customer appears as "Acme Corp" in the CRM, "ACME Corporation" in billing, and "Acme" in the marketing platform. | Standardization rules that normalize naming conventions, followed by cross-system matching to link records. |
| Timeliness | Data is current enough for its intended use; stale records are flagged or refreshed. | A mailing list includes 18,000 addresses that have not been validated in over 24 months. | Scheduled re-validation against reference databases and age-based flagging for records exceeding defined freshness thresholds. |
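The consistency action in the table (standardize, then match) can be sketched in a few lines of Python. This is a minimal illustration, not a production matcher: the suffix list is a hypothetical placeholder, and real programs typically layer fuzzy matching on top of an exact normalized key like this one.

```python
import re

# Hypothetical suffix list; a real rule library would maintain this per jurisdiction.
LEGAL_SUFFIXES = {"corp", "corporation", "inc", "incorporated", "llc", "ltd", "co", "company"}


def normalize_company_name(name: str) -> str:
    """Build a comparison key: lowercase, drop punctuation, strip trailing legal suffixes."""
    tokens = re.sub(r"[^\w\s]", " ", name.lower()).split()
    # Keep at least one token so a name like "Co" does not collapse to an empty key.
    while len(tokens) > 1 and tokens[-1] in LEGAL_SUFFIXES:
        tokens.pop()
    return " ".join(tokens)


def link_by_key(names_by_system: dict[str, str]) -> dict[str, list[str]]:
    """Group source systems by normalized name so equivalent records can be linked."""
    groups: dict[str, list[str]] = {}
    for system, name in names_by_system.items():
        groups.setdefault(normalize_company_name(name), []).append(system)
    return groups


print(link_by_key({"crm": "Acme Corp", "billing": "ACME Corporation", "marketing": "Acme"}))
# {'acme': ['crm', 'billing', 'marketing']}
```

An exact key like this links the three "Acme" variants but would miss a misspelling such as "Acmee"; catching those is the job of the cross-system matching step.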

The remaining five DAMA dimensions (validity, integrity, uniqueness, currency, and reasonableness) become relevant as an enterprise cleaning program matures. Validity and integrity checks are typically implemented during the first expansion cycle, followed by uniqueness (deduplication) as the organization builds matching capabilities.

What Does a Repeatable Enterprise Data Cleaning Workflow Look Like?

The following six-phase framework converts ad hoc cleaning into a governed, measurable operational workflow. Each phase has a defined input, output, responsible role, and tooling requirement.

Phase 1: Scope and Prioritize

Not all data requires the same level of cleaning effort. Start by mapping datasets to business criticality and regulatory exposure. Customer master data in a healthcare CRM that feeds clinical systems requires higher quality standards than a marketing contact list used for newsletter distribution. Prioritize datasets where quality failures create compliance risk, revenue impact, or operational disruption.

Output: A ranked list of datasets, each assigned a quality tier (Tier 1: regulatory/mission-critical, Tier 2: operational, Tier 3: informational).
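Encoding the tier criteria as a small function keeps the ranking repeatable as the dataset inventory changes. A minimal sketch, assuming three hypothetical yes/no attributes are recorded for each dataset; a real program would likely weigh more factors, such as revenue impact:

```python
from dataclasses import dataclass


@dataclass
class DatasetProfile:
    """Inventory entry for one dataset; the boolean attributes are hypothetical."""
    name: str
    regulated: bool          # e.g. holds PHI, PII, or SOX-relevant financial data
    mission_critical: bool   # a quality failure halts a core business process
    operational: bool        # used in day-to-day operational systems
    record_count: int


def quality_tier(ds: DatasetProfile) -> int:
    """Tier 1: regulatory/mission-critical, Tier 2: operational, Tier 3: informational."""
    if ds.regulated or ds.mission_critical:
        return 1
    if ds.operational:
        return 2
    return 3


def prioritize(datasets: list[DatasetProfile]) -> list[tuple[str, int]]:
    """Rank by tier (Tier 1 first), breaking ties by larger record volume."""
    ranked = sorted(datasets, key=lambda d: (quality_tier(d), -d.record_count))
    return [(d.name, quality_tier(d)) for d in ranked]


inventory = [
    DatasetProfile("newsletter_contacts", False, False, False, 500_000),
    DatasetProfile("vendor_master", False, False, True, 40_000),
    DatasetProfile("patient_crm", True, True, True, 2_800_000),
]
print(prioritize(inventory))
# [('patient_crm', 1), ('vendor_master', 2), ('newsletter_contacts', 3)]
```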

Phase 2: Profile and Baseline

Run automated profiling across every Tier 1 and Tier 2 dataset to establish a quantified baseline of current quality. Profiling reveals completeness rates, format distributions, uniqueness percentages, and outlier patterns for every field. This baseline becomes the measurement reference for all subsequent improvement efforts. See our guide to data profiling tools for detailed profiling methodology.

Output: A profiling report showing quality scores per field, per dataset, with identified issues ranked by record volume affected.
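Pattern analysis is a common profiling technique: replacing letters and digits with placeholder characters turns thousands of distinct values into a handful of countable formats. A minimal standard-library sketch over records held as dictionaries (a stand-in for whatever profiling tool is actually used) that reports each field's completeness, distinct-value ratio, and dominant formats:

```python
import re
from collections import Counter


def format_pattern(value: str) -> str:
    """Reduce a value to its shape: letters become 'A', digits become '9'."""
    return re.sub(r"[0-9]", "9", re.sub(r"[A-Za-z]", "A", value))


def profile_field(records: list[dict], field: str) -> dict:
    """Completeness, distinct-value ratio, and most common formats for one field."""
    values = [r.get(field) for r in records]
    present = [v for v in values if v not in (None, "")]
    return {
        "field": field,
        "completeness": len(present) / len(values) if values else 0.0,
        "distinct_ratio": len(set(present)) / len(present) if present else 0.0,
        "top_formats": Counter(format_pattern(str(v)) for v in present).most_common(3),
    }


def profile_dataset(records: list[dict]) -> list[dict]:
    """Profile every field, least complete first (most records affected first)."""
    fields = sorted({key for record in records for key in record})
    return sorted((profile_field(records, f) for f in fields), key=lambda p: p["completeness"])


vendors = [
    {"vendor_id": "V001", "tax_id": "12-3456789", "zip": "10001"},
    {"vendor_id": "V002", "tax_id": "", "zip": "10001-2345"},
    {"vendor_id": "V003", "tax_id": None, "zip": "10001"},
]
for row in profile_dataset(vendors):
    print(row)
```

Running this on the three sample records ranks `tax_id` first (one of three values present) and shows `zip` split between a five-digit and a ZIP+4 format, which is the kind of measured finding Phase 3 rules should be written against.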

Phase 3: Define Rules and Thresholds

Translate profiling findings into specific, testable cleaning rules. Each rule should specify the condition it detects, the transformation it applies, and the quality dimension it addresses. Define thresholds for automated correction versus manual review. A rule that standardizes state abbreviations from "Calif" to "CA" is safe to automate. A rule that changes a customer's legal name requires human approval.

Output: A versioned rule library with each rule mapped to a quality dimension, a severity level, and an automation/manual-review classification.
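A rule library can be as simple as versioned records that carry the metadata listed above alongside the detection and transformation logic. A minimal Python sketch; the rule IDs, the alias table, and the whitespace rule on legal names are hypothetical examples chosen to mirror the "Calif" to "CA" case (safe to automate) and the legal-name case (human approval required):

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class CleaningRule:
    rule_id: str
    version: int
    dimension: str                   # quality dimension the rule addresses
    severity: str                    # e.g. "low" or "high"
    auto_apply: bool                 # False routes proposed changes to manual review
    field: str
    detects: Callable[[str], bool]   # condition the rule detects
    transform: Callable[[str], str]  # correction the rule proposes


# Hypothetical lookup; a production rule would use a complete postal reference table.
STATE_ALIASES = {"calif": "CA", "california": "CA", "n.y.": "NY", "new york": "NY"}

RULES = [
    CleaningRule("STD-STATE-001", 1, "consistency", "low", True, "state",
                 detects=lambda v: v.strip().lower() in STATE_ALIASES,
                 transform=lambda v: STATE_ALIASES[v.strip().lower()]),
    CleaningRule("STD-NAME-004", 2, "accuracy", "high", False, "legal_name",
                 detects=lambda v: " ".join(v.split()) != v,
                 transform=lambda v: " ".join(v.split())),
]


def evaluate(rule: CleaningRule, value: str) -> tuple[str, Optional[str]]:
    """Return ("unchanged", None), ("auto", new_value) or ("review", proposed_value)."""
    if not rule.detects(value):
        return ("unchanged", None)
    return ("auto" if rule.auto_apply else "review", rule.transform(value))
```

Keeping `auto_apply`, `severity`, and `version` on each rule means a change in how a rule is handled is reviewed and logged like any other rule change.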

Phase 4: Execute and Validate

Apply cleaning rules to the prioritized datasets in a staging environment before promoting changes to production. Validate results against the profiling baseline: did completeness improve from 72% to 95%? Did format consistency reach the target threshold? Flag any rule that produced unexpected changes for review before the next execution cycle.

Output: Cleaned datasets with before/after quality scores, a change log documenting every transformation applied, and a list of records routed to manual review.
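A minimal sketch of a staging run that produces the three Phase 4 outputs: before/after scores, a change log, and a manual-review list. It assumes records are dictionaries, measures only completeness, and uses two hypothetical rules; the `deepcopy` stands in for a separate staging environment:

```python
import copy
import re
from datetime import datetime, timezone


def completeness(records: list[dict], field: str) -> float:
    """Share of records with a non-empty value in `field`."""
    if not records:
        return 0.0
    return sum(1 for r in records if r.get(field) not in (None, "")) / len(records)


def run_in_staging(records, rules, fields):
    """Apply rules to a copy of the data; return (staged, change_log, scores, review).

    Each rule is a hypothetical (rule_id, field, fix) tuple, where fix(record)
    returns a corrected value or None when the rule does not apply.
    """
    staged = copy.deepcopy(records)  # production input is never modified
    change_log = []
    for i, record in enumerate(staged):
        for rule_id, field, fix in rules:
            new = fix(record)
            if new is not None and new != record.get(field):
                change_log.append({"record": i, "rule": rule_id, "field": field,
                                   "before": record.get(field), "after": new,
                                   "at": datetime.now(timezone.utc).isoformat()})
                record[field] = new
    scores = {f: (completeness(records, f), completeness(staged, f)) for f in fields}
    review = [i for i, r in enumerate(staged) if any(r.get(f) in (None, "") for f in fields)]
    return staged, change_log, scores, review


US_ZIP = re.compile(r"\d{5}(-\d{4})?")
rules = [
    ("STD-STATE-001", "state",
     lambda r: "CA" if str(r.get("state") or "").strip().lower() in {"calif", "california"} else None),
    ("ENR-CTRY-002", "country",  # hypothetical enrichment: infer "US" from a US-style ZIP
     lambda r: "US" if not r.get("country") and US_ZIP.fullmatch(str(r.get("zip") or "")) else None),
]
customers = [
    {"state": "Calif", "zip": "90210", "country": ""},
    {"state": "CA", "zip": "94105", "country": "US"},
    {"state": "NY", "zip": "SW1A 1AA", "country": None},
]
staged, log, scores, review = run_in_staging(customers, rules, ["country"])
print(scores["country"], len(log), review)
```

Here `country` completeness rises from one in three records to two in three, the log holds the two applied changes, and the record the rules could not fix is routed to review.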

Phase 5: Embed in Pipelines

Move validated cleaning rules from batch execution into real-time data pipelines. Rules fire at the point of data ingestion, preventing dirty records from entering production systems. This is the step that converts cleaning from a periodic project into a continuous function. API-based integration with ETL/ELT tools, CRMs, ERPs, and data warehouses ensures that every new record passes through the same quality gates that cleaned the historical backlog.

Output: Cleaning rules deployed as pipeline stages with event-triggered and scheduled execution modes.
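At the pipeline level, a quality gate is a stage that passes clean records through and diverts failing ones before they reach production. A minimal Python sketch written as a generator so it can sit between any extract and load steps; the checks and field names are hypothetical, and wiring it into a specific ETL/ELT tool, CRM, or ERP would go through that product's own integration interface:

```python
import re
from typing import Callable, Iterable, Iterator

# A check is a (name, predicate) pair; the predicate returns True when the record passes.
Check = tuple[str, Callable[[dict], bool]]

# Hypothetical ingestion checks for vendor records.
INGESTION_CHECKS: list[Check] = [
    ("tax_id_present", lambda r: bool(r.get("tax_id"))),
    ("zip_format", lambda r: re.fullmatch(r"\d{5}(-\d{4})?", str(r.get("zip") or "")) is not None),
]


def quality_gate(records: Iterable[dict], checks: list[Check], quarantine: list) -> Iterator[dict]:
    """Yield records that pass every check; divert failures, with reasons, to `quarantine`."""
    for record in records:
        failed = [name for name, passes in checks if not passes(record)]
        if failed:
            quarantine.append({"record": record, "failed_checks": failed})
        else:
            yield record


held: list[dict] = []
incoming = [
    {"vendor_id": "V100", "tax_id": "12-3456789", "zip": "10001"},
    {"vendor_id": "V101", "tax_id": "", "zip": "1000"},
]
loaded = list(quality_gate(incoming, INGESTION_CHECKS, held))
print([r["vendor_id"] for r in loaded], held[0]["failed_checks"])
# ['V100'] ['tax_id_present', 'zip_format']
```

Quarantining records together with the names of the checks they failed, rather than silently dropping them, gives the data steward what is needed for manual review.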

Phase 6: Monitor and Improve

Deploy quality monitoring dashboards that track each dimension's score over time. Set alert thresholds: if completeness drops below 90% or duplicate rates exceed 5%, the system notifies the assigned data steward. Review rule performance quarterly. Rules that fire on less than 0.1% of records may be candidates for retirement. New error patterns identified through monitoring become new rules in the library.

Output: Quality dashboards with trend data, automated alerts, and a quarterly rule review cadence.
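The alert and retirement rules described above reduce to a few comparisons against stored metrics. A minimal sketch, assuming quality metrics are already computed per dataset as fractions between 0 and 1, and that notification is just a returned message (a real deployment would send it through email, chat, or a ticketing system):

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    metric: str
    limit: float
    direction: str  # "min": alert when the value falls below limit; "max": when it rises above


# Example thresholds from the text: completeness below 90%, duplicates above 5%.
THRESHOLDS = [Threshold("completeness", 0.90, "min"), Threshold("duplicate_rate", 0.05, "max")]


def check_alerts(dataset: str, metrics: dict[str, float], steward: str) -> list[str]:
    """Return one notification message per breached threshold."""
    alerts = []
    for t in THRESHOLDS:
        value = metrics.get(t.metric)
        if value is None:
            continue  # metric not measured for this dataset
        breached = value < t.limit if t.direction == "min" else value > t.limit
        if breached:
            alerts.append(f"notify {steward}: {dataset} {t.metric}={value:.1%} (limit {t.limit:.0%})")
    return alerts


def retirement_candidates(fire_counts: dict[str, int], records_processed: int,
                          floor: float = 0.001) -> list[str]:
    """Rules that fired on less than `floor` (default 0.1%) of records in the review period."""
    if records_processed <= 0:
        return []
    return sorted(rule for rule, n in fire_counts.items() if n / records_processed < floor)


print(check_alerts("vendor_master", {"completeness": 0.87, "duplicate_rate": 0.03}, "vendor steward"))
print(retirement_candidates({"STD-STATE-001": 5_000, "STD-NAME-004": 40}, 100_000))
```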

Who Owns Enterprise Data Cleaning?

Enterprise cleaning programs fail when ownership is ambiguous. According to Info-Tech Research Group, up to 75% of data governance initiatives fail because accountability is unclear. The following RACI model maps cleaning responsibilities to the roles that typically exist in enterprise data teams.

Enterprise Data Cleaning RACI Matrix

| Activity | Data Steward | Data Engineer | Business Analyst | CDO / Data Director |
|---|---|---|---|---|
| Scope and prioritize datasets | Consulted | Informed | Responsible | Accountable |
| Profile and baseline | Responsible | Consulted | Informed | Accountable |
| Define cleaning rules | Responsible | Consulted | Consulted | Informed |
| Execute and validate | Consulted | Responsible | Informed | Informed |
| Embed in pipelines | Informed | Responsible | Informed | Accountable |
| Monitor and improve | Responsible | Consulted | Responsible | Accountable |

The data steward role is the operational backbone of this model. Data stewards own the cleaning rules for their domain (customer data, vendor data, product data), review records flagged for manual intervention, and report quality metrics to the CDO. Without designated stewardship, rules drift, exceptions accumulate, and the cleaning program degrades into the same ad hoc state it was designed to replace.

How Does Enterprise Data Cleaning Work in Practice?

A mid-market insurance company with 1,200 employees operated three core systems: a Salesforce CRM with 2.8 million policyholder records, a Guidewire claims management system with 4.1 million claims records, and a legacy IBM AS/400 underwriting system with 1.6 million policy records. Before implementing a continuous cleaning program, the company's data team estimated they spent 35% of their time on reactive data fixes triggered by downstream report failures.

The team profiled all three systems and discovered that 41% of policyholder records had inconsistent name formats across systems, 28% of claims records referenced policy numbers that did not exist in the underwriting system (orphaned references), and 19% of address records contained format variations that prevented automated correspondence generation.

After implementing a six-phase cleaning workflow with 47 automated rules and 3 assigned data stewards, the company reduced reactive data fix time from 35% to 8% of the data team's capacity within 6 months. The automated rules caught an average of 12,400 errors per week at ingestion, preventing them from propagating into claims processing and regulatory reporting.

The financial impact: the company estimated $340,000 in annual savings from reduced manual rework, $180,000 in avoided regulatory penalties from improved reporting accuracy (state insurance commissioner filings), and a 14% improvement in claims processing cycle time because clean data eliminated the manual lookup steps that had been required when policyholder records did not match across systems.
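The orphaned-reference finding from the profiling step is an integrity check: every policy number cited by a claim should exist in the system of record for policies. A minimal Python sketch of that check as a set lookup, assuming hypothetical field names and in-memory lists (against real systems this would more likely be a SQL anti-join between extracts). Reference values are normalized first so that formatting variants are not miscounted as orphans:

```python
def normalize_ref(value) -> str:
    """Normalize a policy reference so formatting differences are not reported as orphans."""
    return str(value or "").strip().upper()


def find_orphans(claims: list[dict], policies: list[dict], key: str = "policy_number") -> list[dict]:
    """Return claims whose (non-empty) policy reference has no match in the policy system."""
    known = {normalize_ref(p.get(key)) for p in policies if p.get(key)}
    return [c for c in claims if c.get(key) and normalize_ref(c.get(key)) not in known]


policies = [{"policy_number": "PL-1001"}, {"policy_number": "PL-1002"}]
claims = [
    {"claim_id": "C1", "policy_number": "pl-1001 "},  # format variant, not an orphan
    {"claim_id": "C2", "policy_number": "PL-9999"},   # orphaned reference
    {"claim_id": "C3", "policy_number": None},        # missing value: a completeness issue
]
orphans = find_orphans(claims, policies)
print([c["claim_id"] for c in orphans], f"{len(orphans) / len(claims):.0%} orphaned")
```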

What Should Enterprise Teams Look for in Data Cleaning Tools?

Enterprise data cleaning tools must support the six-phase workflow described above, not just the execution phase. Tools that only clean records without profiling, versioning, monitoring, and pipeline integration force teams to build the remaining infrastructure themselves, which rarely survives organizational changes.

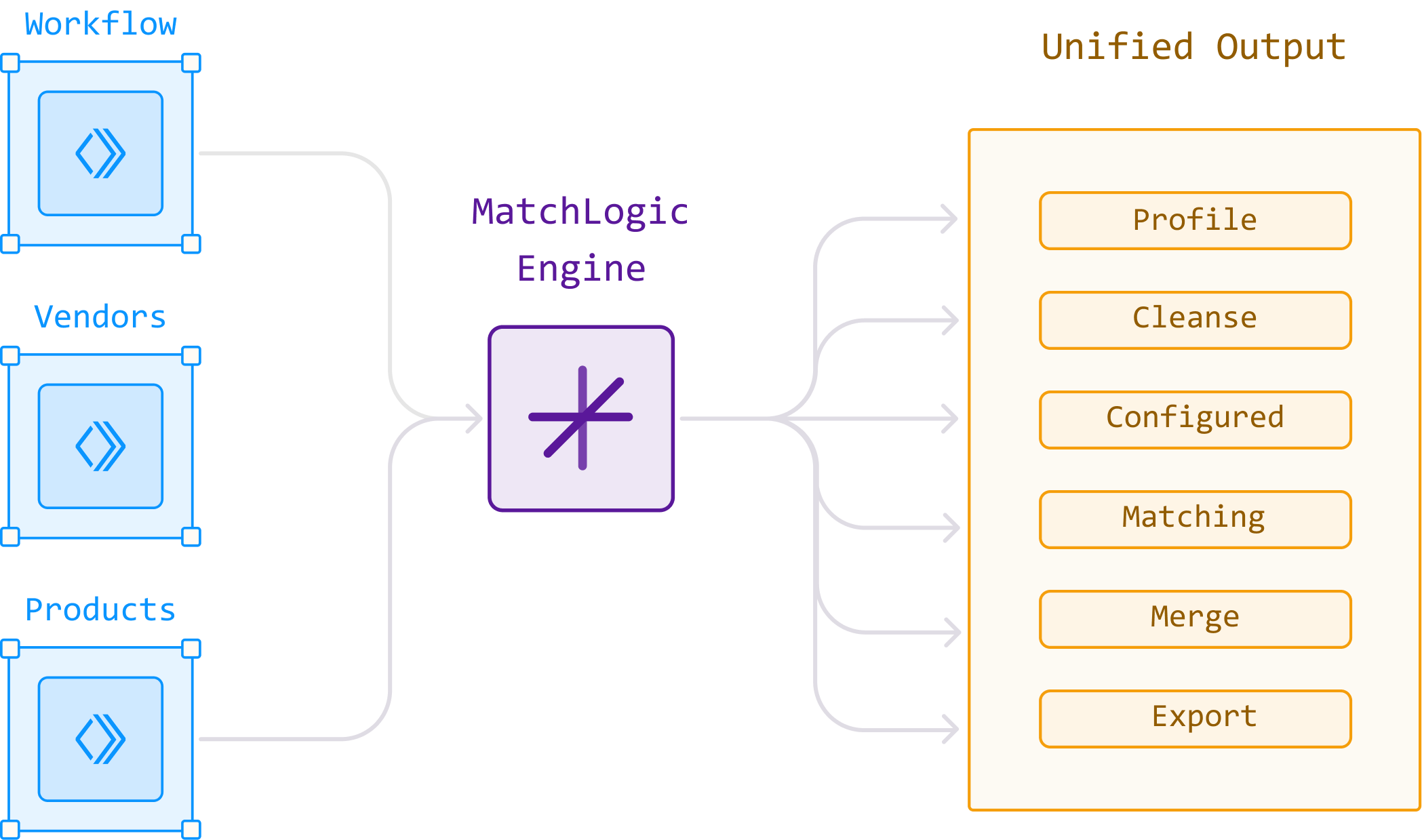

The critical differentiator for enterprise buyers is whether the tool treats cleaning as an isolated function or as part of an integrated data quality pipeline. Platforms like MatchLogic combine profiling, standardization, cleaning, matching, and monitoring in a single engine, eliminating data handoffs between disconnected tools. Every rule, every transformation, and every quality score lives in one auditable system. For organizations in regulated industries, this integration also supports the audit-trail and traceability expectations of frameworks like SOX Section 404 and HIPAA, as well as the accuracy principle of GDPR Article 5(1)(d), which requires that personal data be accurate and, where necessary, kept up to date.

Deployment model is the other non-negotiable evaluation criterion. Organizations processing protected health information, personally identifiable financial data, or government records subject to data sovereignty regulations need on-premise or private-cloud deployment where all data processing happens within the organization's controlled environment. Cloud-only tools that require data export to vendor infrastructure may disqualify themselves before feature evaluation begins. For more on standardization as a cleaning prerequisite, see our data standardization guide.

Visual Reference: MatchLogic Platform

Frequently Asked Questions

What is enterprise data cleaning?

Enterprise data cleaning is the practice of building repeatable, governed workflows that detect and correct data errors across an organization's systems on a continuous basis. Unlike one-time cleanup projects, enterprise cleaning embeds quality rules into data pipelines, assigns ownership through data stewardship roles, and measures outcomes against defined quality dimensions from the DAMA-DMBOK framework. According to Gartner, organizations that implement continuous data quality programs reduce error-related costs by up to 60%.

How often should enterprise data be cleaned?

Continuously, not periodically. The most effective enterprise cleaning programs apply rules at the point of data ingestion (real-time) and run supplemental batch processes on a daily or weekly schedule. Research from MIT Sloan Management Review indicates that contact data decays at approximately 2% per month, so even a perfectly clean database loses roughly 22% accuracy within a year without ongoing maintenance. Quarterly or annual cleaning projects cannot keep pace with this decay rate.

What is the DAMA-DMBOK framework for data quality?

DAMA-DMBOK 2.0 (revised 2024) is a vendor-neutral framework published by DAMA International that defines nine data quality dimensions: accuracy, validity, completeness, integrity, uniqueness, timeliness, consistency, currency, and reasonableness. It provides standardized vocabulary and measurement approaches for enterprise data quality programs. The framework is widely used as the basis for data quality metrics, stewardship role definitions, and CDMP certification.

How do you measure the ROI of enterprise data cleaning?

Track three categories: direct cost savings (reduced manual rework hours, eliminated duplicate mailing costs), risk avoidance (regulatory penalties prevented, audit failures avoided), and operational efficiency gains (faster processing cycle times, reduced error investigation time). The insurance company scenario in this article achieved $520,000 in annualized savings from a cleaning program that cost approximately $150,000 to implement, including tooling and steward allocation.
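Using the scenario's figures ($340,000 in reduced rework plus $180,000 in avoided penalties, against roughly $150,000 in implementation cost), a simple first-year calculation can be sketched as follows. This is one common ROI definition, not a method stated in the scenario; it assumes no ongoing costs beyond the implementation figure and leaves the 14% cycle-time improvement unmonetized:

```python
def simple_roi(annual_savings: float, implementation_cost: float) -> dict[str, float]:
    """First-year ROI and payback period; ignores ongoing costs and discounting."""
    net = annual_savings - implementation_cost
    return {
        "net_first_year": net,
        "roi": net / implementation_cost,
        "payback_months": implementation_cost / annual_savings * 12,
    }


savings = 340_000 + 180_000  # reduced rework + avoided penalties, from the scenario
result = simple_roi(savings, 150_000)
print(f"ROI {result['roi']:.0%}, payback {result['payback_months']:.1f} months")
# ROI 247%, payback 3.5 months
```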

Should enterprise data cleaning happen on-premise or in the cloud?

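That depends on your regulatory environment and data classification. Healthcare organizations processing PHI under HIPAA, financial institutions subject to SOX and GLBA, and government agencies bound by FedRAMP face strict conditions on sending production data to third-party cloud endpoints: HIPAA requires a business associate agreement with any vendor that handles PHI, and FedRAMP limits federal agencies to cloud services authorized under the program. Where those conditions cannot be met, or where internal policy or data sovereignty rules prohibit external processing, on-premise deployment is a compliance requirement. Organizations without these constraints can evaluate cloud options, but should verify that the vendor's data processing location and practices align with their internal data governance policies.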

What is the difference between data cleaning and data governance?

Data cleaning is an operational activity that fixes specific errors in specific records. Data governance is the organizational framework of policies, roles, and standards that defines how data is managed across the enterprise. Cleaning is one activity within a governance program; governance provides the authority, accountability, and measurement framework that makes cleaning sustainable. A cleaning program without governance produces clean data nobody trusts. A governance program without cleaning produces policies nobody follows.
