Data Matching Software: Features, Pricing, and Vendor Evaluation Guide

Data matching software automates the process of comparing records across one or more datasets to identify entries that refer to the same real-world entity. It uses deterministic rules, probabilistic scoring, fuzzy string algorithms, and machine learning to connect records despite formatting differences, spelling variations, missing fields, and inconsistent identifiers. Enterprise data matching software goes beyond basic comparison by integrating data profiling, cleansing, standardization, matching, and merge/purge into a unified pipeline.

The market includes enterprise platforms (Informatica, IBM QualityStage, MatchLogic, SAS DataFlux), mid-market tools (Data Ladder, WinPure, Melissa), open-source libraries (dedupe.io, Splink, Zingg), and cloud services (AWS Entity Resolution). Choosing between them requires evaluating technical capabilities, deployment model, pricing structure, and organizational fit. This guide provides the evaluation framework. For the underlying matching techniques, see our [INTERNAL LINK: 1A, data matching techniques breakdown]. For fuzzy matching specifically, see our [INTERNAL LINK: 1B, fuzzy matching software guide]. For the complete matching process, see our [INTERNAL LINK: Pillar 1, data matching guide].

Key Takeaways

- Enterprise data matching software integrates profiling, cleansing, standardization, matching, and merge/purge in a unified pipeline.

- The market spans enterprise platforms ($50K-$500K+/year), mid-market tools ($5K-$50K/year), open-source libraries (free), and cloud services (per-record pricing).

- Key evaluation criteria: algorithm flexibility, scale, deployment model, auditability, pipeline integration, and total cost of ownership.

- Pricing models include perpetual license, annual subscription, per-record, and consumption-based; each has different TCO implications at scale.

- On-premise deployment is critical for regulated industries; cloud deployment suits smaller datasets and POC projects.

- MatchLogic processes 1 million records in under 8 seconds on-premise with 95%+ accuracy and full audit trails.

What Features Should Enterprise Data Matching Software Include?

Matching Algorithms

- Essential Capabilities: Deterministic (exact rules), probabilistic (Fellegi-Sunter), fuzzy (Jaro-Winkler, Levenshtein, Soundex), and ML-based. Configurable per field type.

- Why It Matters: No single algorithm works for all data. The tool must support the right method for each field: Jaro-Winkler for names, Levenshtein for addresses, exact for identifiers.

Data Profiling

- Essential Capabilities: Automated assessment of completeness, consistency, validity, and duplicate rate before matching begins.

- Why It Matters: Matching accuracy depends on input quality. Profiling reveals the actual quality baseline and informs rule configuration.

Standardization

- Essential Capabilities: Format normalization, name parsing, address standardization (USPS CASS), abbreviation dictionaries, vocabulary governance.

- Why It Matters: Standardizing before matching improves accuracy by 40-50%. Integrated standardization eliminates pipeline breaks.

Blocking and Indexing

- Essential Capabilities: Multi-pass blocking with configurable keys. Sorted neighborhood algorithms. Phonetic blocking for name-heavy datasets.

- Why It Matters: Without blocking, matching is computationally infeasible at enterprise scale (10M+ records).

Merge/Purge

- Essential Capabilities: Per-field survivorship rules. Before/after merge preview. Audit trail for every merge decision.

- Why It Matters: Matching identifies duplicates; merge/purge resolves them. Preview prevents irreversible data destruction.

Automation

- Essential Capabilities: Scheduled batch matching. Event-triggered real-time matching via API. Continuous monitoring of duplicate rates.

- Why It Matters: One-time matching is a depreciating asset. Automation prevents duplicates from re-accumulating.

Auditability

- Essential Capabilities: Full logging of algorithms applied, scores produced, thresholds used, and reviewer decisions for every record pair.

- Why It Matters: HIPAA, SOX, GDPR require documented evidence of data processing decisions. Audit trails are non-negotiable in regulated industries.

Deployment

- Essential Capabilities: On-premise, cloud, or hybrid. Air-gapped environment support. Infrastructure independence.

- Why It Matters: Regulated industries require on-premise. Cloud suits smaller datasets and POC projects.

Algorithms

- Essential Capabilities: Deterministic, probabilistic, fuzzy, ML. Configurable per field.

- Why It Matters: No single algorithm works for all data.

Profiling

- Essential Capabilities: Completeness, consistency, validity assessment.

- Why It Matters: Quality baseline informs rule configuration.

Standardization

- Essential Capabilities: Format normalization, name parsing, USPS CASS.

- Why It Matters: Improves accuracy 40-50%.

Blocking

- Essential Capabilities: Multi-pass, configurable keys, sorted neighborhood.

- Why It Matters: Required for scale (10M+ records).

Merge/Purge

- Essential Capabilities: Per-field survivorship, preview, audit trail.

- Why It Matters: Preview prevents data destruction.

Automation

- Essential Capabilities: Scheduled batch, real-time API, monitoring.

- Why It Matters: Prevents duplicate re-accumulation.

Auditability

- Essential Capabilities: Full logging of algorithms, scores, thresholds.

- Why It Matters: Required by HIPAA, SOX, GDPR.

Deployment

- Essential Capabilities: On-premise, cloud, hybrid, air-gapped.

- Why It Matters: Regulated industries need on-premise.

How Do Data Matching Software Pricing Models Compare?

Perpetual License

- How It Works: One-time purchase plus annual maintenance (15-20% of license). Own the software indefinitely.

- Best For: Organizations with predictable, long-term matching needs and existing infrastructure.

- Watch Out For: High upfront cost. Maintenance fees compound over time. Upgrade costs may be additional.

Annual Subscription

- How It Works: Annual fee for access. Includes updates and support. Cancel anytime (with data export).

- Best For: Mid-size organizations that prefer OpEx over CapEx. Predictable annual budget.

- Watch Out For: TCO exceeds perpetual license after 3-4 years. Vendor lock-in risk if export is complicated.

Per-Record

- How It Works: Pay for each record processed. Common in cloud matching services (e.g., AWS Entity Resolution).

- Best For: Variable-volume workloads. POC projects. Organizations that process records infrequently.

- Watch Out For: Costs spike unpredictably with volume growth. $0.001/record becomes $10,000 at 10M records per month.

Consumption-Based

- How It Works: Pay based on compute resources consumed. Common in cloud-native platforms (Informatica IDMC).

- Best For: Organizations with fluctuating workloads. Cloud-first architectures.

- Watch Out For: Difficult to predict costs. Heavy matching jobs can generate surprise bills. Budget planning is harder.

How Should You Evaluate Data Matching Software Vendors?

Step 1: Define Your Matching Requirements

Before evaluating vendors, document: what entity types you need to match (customers, vendors, products, patients), how many records you process, what data sources are involved, what compliance frameworks apply, and whether you need on-premise or cloud deployment. These requirements narrow the vendor field significantly.

Step 2: Run a Proof of Concept on Your Data

Never select a matching tool based on vendor demos using clean sample data. Request a POC on your actual data. Measure precision, recall, and F1 score against a labeled validation set of at least 500 record pairs from your own systems. Compare the POC results across vendors using the same validation set.

Step 3: Evaluate Total Cost of Ownership (3-Year)

Calculate TCO across three years, including license/subscription fees, infrastructure costs (for on-premise), implementation services, training, and ongoing maintenance. Per-record pricing can be deceptively cheap at POC scale but expensive at production volume. A tool that costs $5,000 for a POC may cost $120,000 per year at 10 million records per month.

Step 4: Assess Pipeline Integration

The matching tool must fit into your existing data infrastructure. Does it connect to your databases, CRMs, ERPs, and data warehouses? Does it offer API-based automation? Can it run as a step in your ETL/ELT pipeline? Tools that require manual data export/import between each stage create friction and introduce errors.

"As part of the journey we've gone through with MatchLogic, we're becoming more data-first, moving from assumption to assurance around data quality."

— Daniel Hughes, VP of Analytics, Finverse Bank

Step 5: Verify Auditability and Compliance

For regulated industries, confirm that the tool logs every match decision with full transparency: algorithms applied, scores produced, thresholds used, reviewer actions. Request audit log samples. Verify that the log format meets your compliance team's documentation requirements.

Where Does MatchLogic Fit in the Data Matching Software Market?

MatchLogic is an on-premise enterprise data matching platform that integrates profiling, cleansing, standardization, fuzzy matching, probabilistic scoring, and merge/purge in a single deployment. It is positioned for regulated industries (healthcare, financial services, government) and large enterprises that require data residency, processing control, and full auditability.

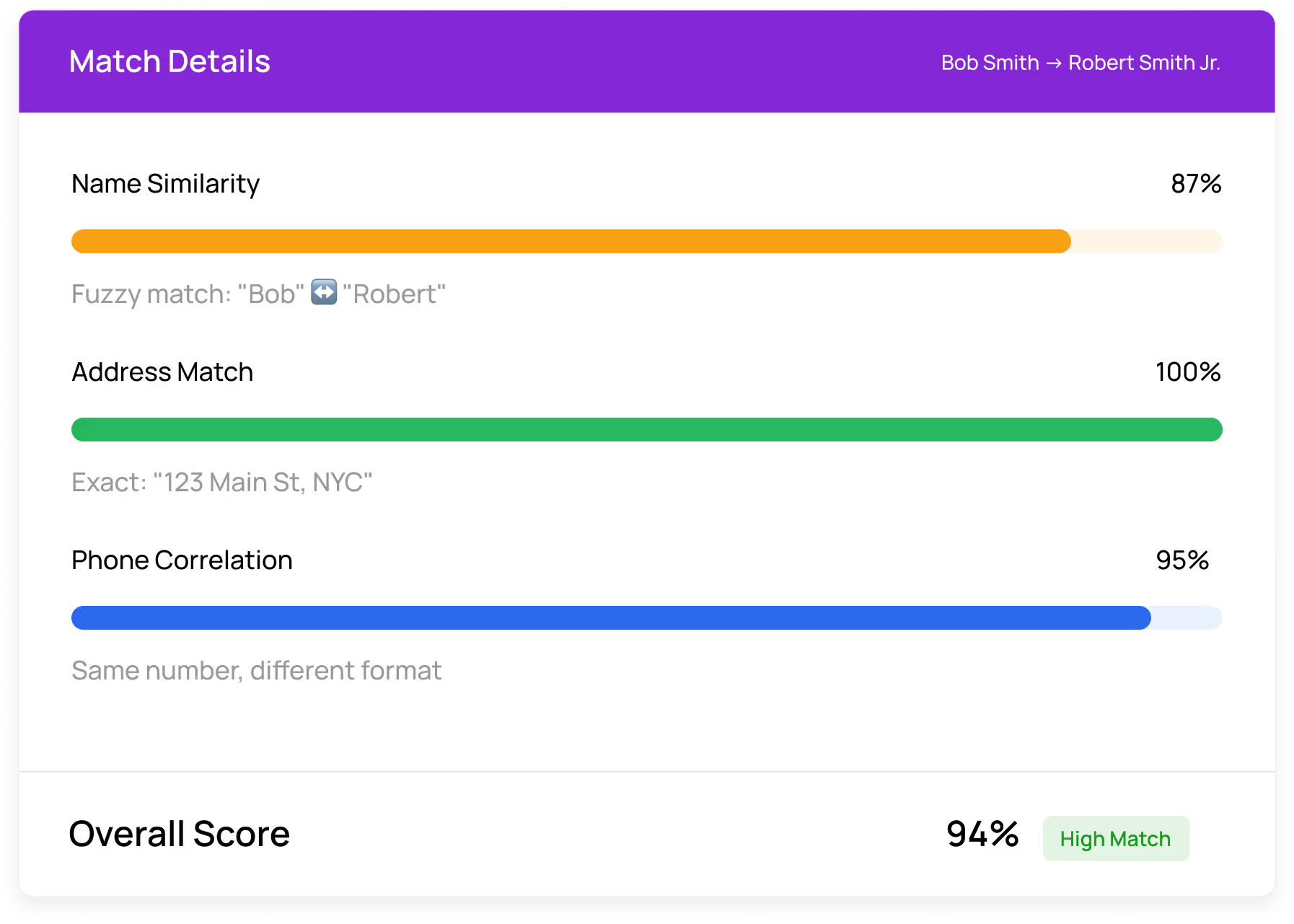

Key differentiators: processes 1 million records in under 8 seconds with 95%+ accuracy; configurable matching algorithms per field type; visual threshold tuning with real-time precision/recall feedback; per-field survivorship rules with before/after merge preview; complete audit trails for every match decision; and on-premise deployment that ensures sensitive data never leaves your secured infrastructure.

"Matched 1.8 million records across three systems with under 2% false positives. Finally have a single source of truth we actually trust."

— Robert Tanaka, Director of Data Operations, Summit Financial Group

1.8M records matched with transparent scoring

Frequently Asked Questions

What is data matching software?

Data matching software automates the comparison of records across datasets to identify entries that refer to the same entity. It uses deterministic rules, probabilistic scoring, fuzzy algorithms, and ML to connect records despite formatting differences, spelling variations, and missing fields.

How much does data matching software cost?

Pricing varies by model: perpetual licenses range from $20K to $500K+, annual subscriptions from $5K to $200K+, per-record pricing from $0.001 to $0.01+ per record, and consumption-based pricing varies by compute usage. TCO analysis over three years (including implementation, infrastructure, and maintenance) is the most reliable comparison method.

What is the difference between data matching and data integration?

Data integration tools (ETL/ELT) move data between systems. Data matching identifies which records across those systems refer to the same entity. Integration moves data; matching links it. Most enterprises need both: integration centralizes data, and matching determines which records belong together.

Can data matching software run on-premise?

Yes. MatchLogic is built for on-premise deployment, processing all matching within your secured infrastructure. Match scores, algorithms, and audit trails are generated locally, ensuring PII and regulated data never leave your network.

How do you measure data matching software accuracy?

Three metrics: precision (percentage of declared matches that are correct), recall (percentage of true matches found), and F1 score (harmonic mean of precision and recall). Run a POC on your actual data against a labeled validation set of 500+ record pairs and compare vendors using the same metrics.

.svg)