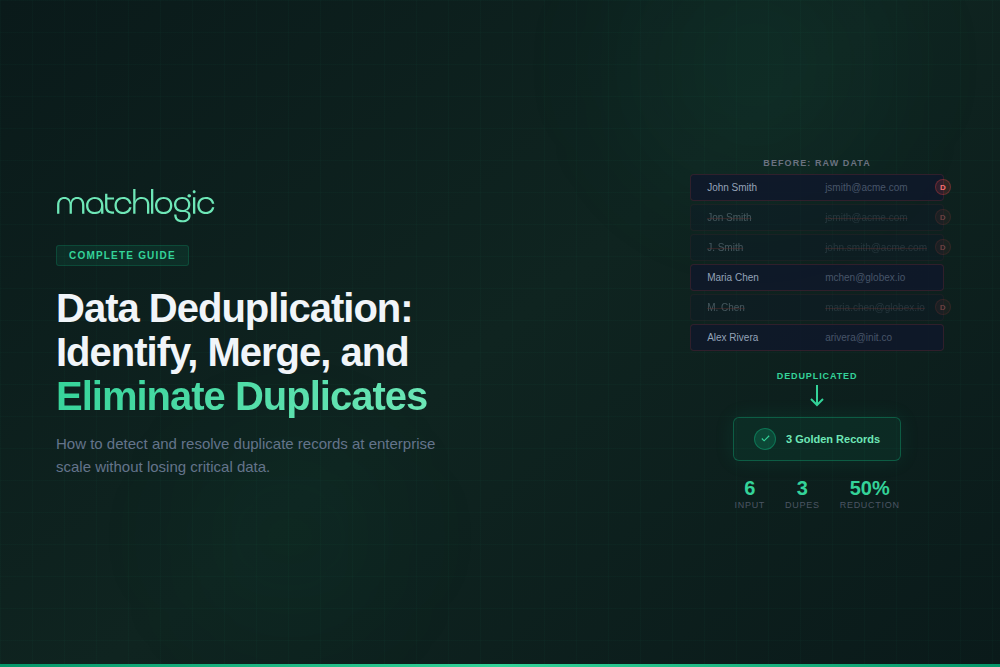

Data Deduplication: How to Identify, Merge, and Eliminate Duplicate Records

Data deduplication is the process of identifying records within a dataset that refer to the same real-world entity and merging or removing the redundant entries to produce a clean, non-redundant data set. In enterprise contexts, deduplication (also called dedupe) targets customer records, vendor entries, product catalogs, mailing lists, and any structured data where the same entity appears multiple times with inconsistent formatting, spelling variations, or incomplete fields. It is distinct from storage-level deduplication, which eliminates redundant data blocks in backup systems; record-level deduplication focuses on the business entities your organization operates on every day.

Duplicate records are not a minor inconvenience. According to Gartner, poor data quality costs organizations an average of $12.9 million per year, and duplicate records are one of the most common and measurable contributors. Enterprises typically discover 25–35% duplicate records in their first deduplication scan (MatchLogic customer benchmarks). This guide covers how duplicates form, the techniques used to find them, the [INTERNAL LINK: 3C, merge purge process] for eliminating them, and best practices for keeping your data clean permanently.

Key Takeaways

- Record-level deduplication identifies and merges duplicate business records (customers, vendors, products), distinct from storage-level dedup.

- Enterprises typically discover 25–35% duplicate records in their first deduplication scan, costing millions in wasted spend and compliance risk.

- Deduplication uses data matching techniques (deterministic, probabilistic, fuzzy) to identify duplicates, then applies survivorship rules to create golden records.

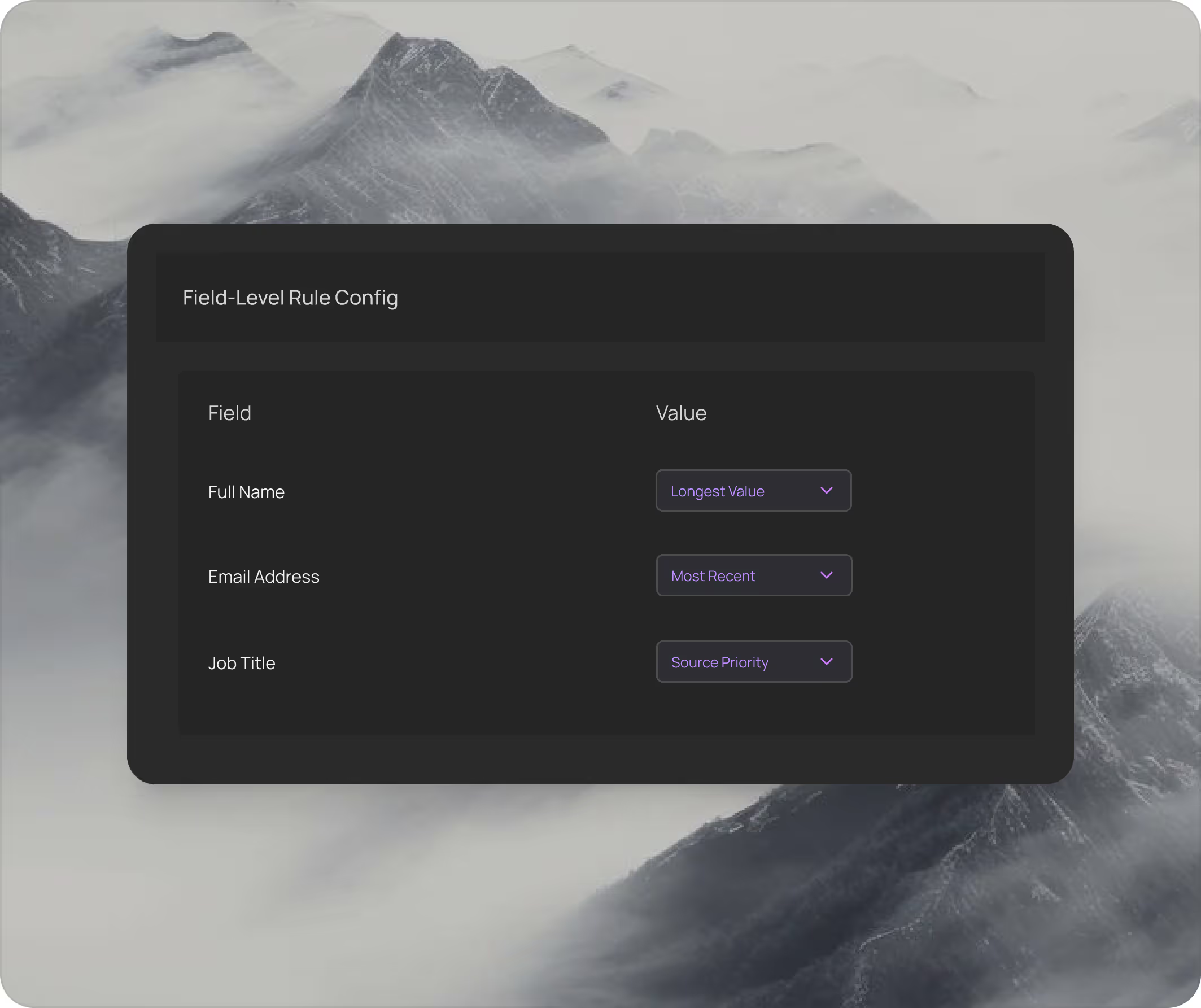

- Survivorship rules determine which field values survive the merge: most recent, most complete, longest value, or source-priority based.

- Ongoing deduplication (automated matching on every new record) prevents duplicates from re-accumulating after initial cleanup.

- On-premise deduplication platforms address data residency requirements for industries handling PII, PHI, or regulated financial data.

How Do Duplicate Records Accumulate in Enterprise Systems?

Duplicates do not appear from a single failure; they accumulate through dozens of small, compounding causes across every system that touches entity data.

Multiple Data Entry Points

When customers can register through a website, mobile app, call center, retail store, and third-party marketplace, each channel creates a new record. Without real-time duplicate checking at the point of entry, the same person gets a fresh record every time. A national retailer with 5 entry channels and 3 million annual new customer interactions can generate 300,000–900,000 duplicate records per year.

System Migrations and Mergers

Every CRM migration, ERP upgrade, and company acquisition introduces duplicate risk. When two Salesforce instances merge, or a legacy system's data is imported into a new platform, records that represent the same entity but use different identifiers or formatting create instant duplicates. Post-merger integrations are particularly risky: two companies' customer databases may have 15–40% overlap, and without deduplication before migration, that overlap becomes permanent duplication.

Manual Data Entry Errors

"McDonald's" becomes "McDonalds," "McDnlds," and "McDonald's Corp" across different systems because different people entered the same entity name. Phone numbers get entered with and without country codes. Addresses use "Street" in one record and "St." in another. These variations are individually minor but collectively create thousands of hidden duplicates.

Lack of Unique Identifiers

Many business entities lack a universal unique identifier. Unlike SSNs for individuals (which are themselves imperfect), vendors, products, and organizational entities often have no standard ID that persists across systems. Without a shared key, the same entity gets a different ID in every system it touches.

“First profile revealed 40% missing data and format chaos we never suspected. Helped us fix issues before migration.”

— Michael Chen, VP Data Governance, Global Logistics Inc.

40% missing data identified pre-migration

What Is the Business Cost of Duplicate Records?

The financial impact of duplicates is both direct and measurable.

Marketing Waste

- How Duplicates Cause Damage: Same customer receives 2–3x the emails, direct mail, and ad impressions. Duplicate records inflate audience counts, causing overspend.

- Typical Cost: 15–25% of marketing budget wasted (Experian Data Quality)

Sales Inefficiency

- How Duplicates Cause Damage: Multiple reps contact the same prospect. Lead scoring is unreliable because engagement is split across duplicate records.

- Typical Cost: 27% of sales time wasted on bad data (ZoomInfo)

Compliance Risk

- How Duplicates Cause Damage: GDPR right-to-erasure requests miss duplicate records. HIPAA audits flag inconsistent patient records. KYC screening misses entity links.

- Typical Cost: Regulatory fines from $10K to $100M+ depending on jurisdiction and violation

Analytics Distortion

- How Duplicates Cause Damage: Customer counts, churn rates, lifetime value, and segmentation are all wrong when built on duplicated data.

- Typical Cost: Every downstream metric is unreliable; decision quality degrades silently

Operational Errors

- How Duplicates Cause Damage: Duplicate vendor records cause duplicate payments. Duplicate product records cause inventory miscount. Duplicate patient records cause safety risks.

- Typical Cost: $1.9M average savings from eliminating duplicate payments (MatchLogic benchmarks)

How Does Data Deduplication Work?

Record-level deduplication follows a four-stage process: profile, match, review, and merge. Each stage builds on the previous one, and skipping any stage degrades the quality of the output.

Stage 1: Profile and Assess

Before deduplication begins, profile the dataset to understand its quality baseline. How many records exist? What is the completeness rate per field? What format variations are present? What is the estimated duplicate rate? Profiling answers these questions in minutes and provides the data-driven foundation for configuring match rules. MatchLogic's profiling engine scans 1 million records in under 5 seconds, revealing completeness scores, format patterns, and duplicate risk before any matching begins.

MatchLogic's profiling heat maps reveal duplicate clusters and quality failures at a glance, letting you configure match rules based on actual data patterns.

Stage 2: Match and Identify Duplicates

The matching stage compares records using [INTERNAL LINK: Cluster 1 Pillar, data matching techniques] (deterministic, probabilistic, fuzzy, and ML-based) to identify candidate duplicate pairs. Blocking reduces the comparison space so that matching remains computationally feasible at enterprise scale. The output is a set of duplicate groups: clusters of records that the system believes refer to the same entity, each with a confidence score.

MatchLogic groups duplicate records into visual clusters, showing field-by-field comparisons and confidence scores so reviewers can validate matches before merging.

Stage 3: Review and Validate

High-confidence matches (above your configured threshold) can be auto-merged. Low-confidence matches require human review. The review queue should be manageable: if more than 5% of candidate pairs need manual review, your matching rules or blocking strategy need tuning. Best practice is to review a sample of auto-merged records periodically to confirm the system's precision remains high.

Stage 4: Merge and Create Golden Records

Once duplicates are confirmed, survivorship rules determine which field values survive into the merged golden record. This is where deduplication becomes operationally consequential: incorrect survivorship rules can destroy good data or preserve bad data. For a complete guide to merge/purge operations, see our [INTERNAL LINK: 3C, merge purge guide].

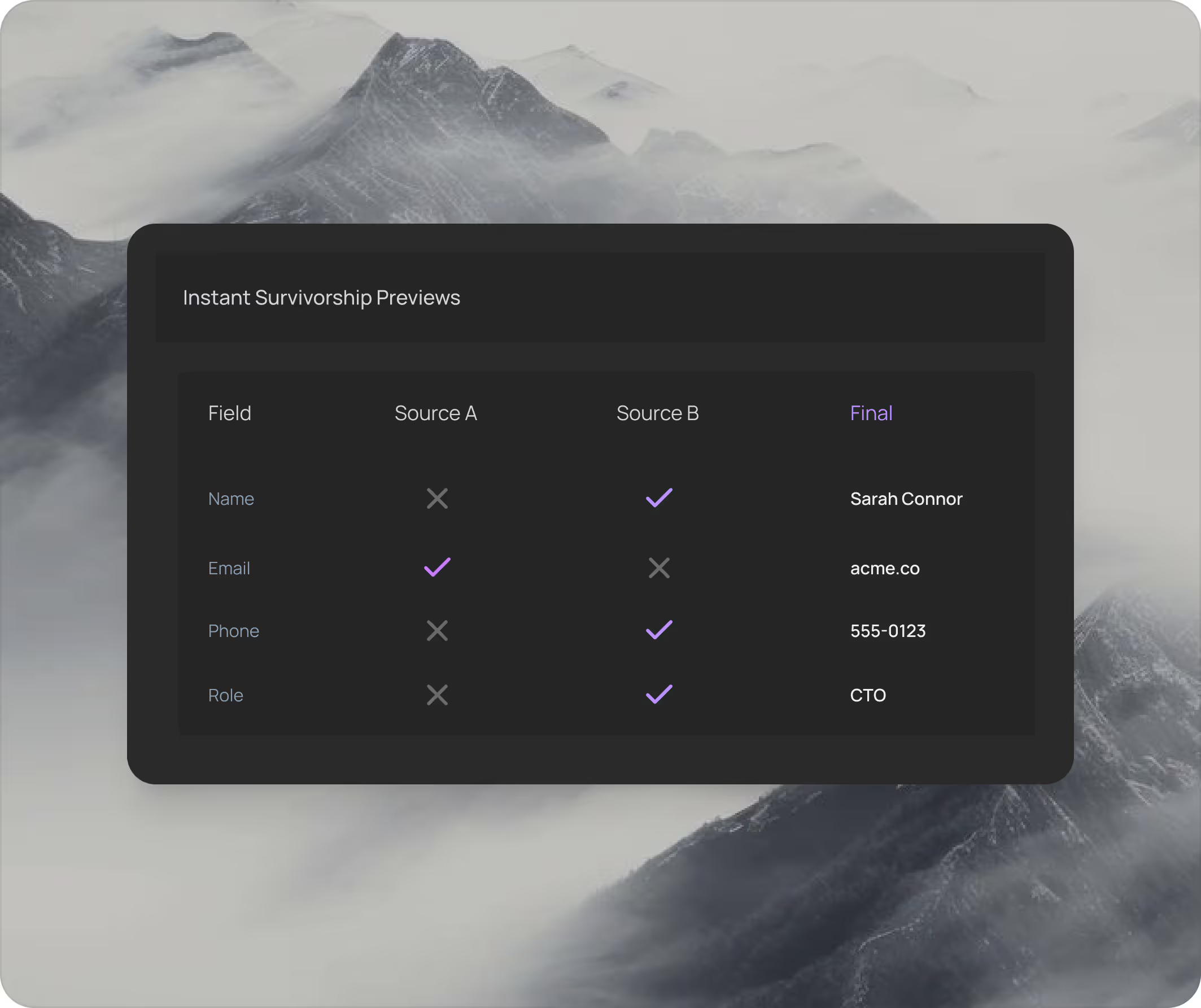

MatchLogic shows survivorship previews before any merge executes: see exactly which values will survive and which will be purged, field by field.

$1.9M

Average savings from eliminating duplicate processes

<6 sec

To merge 1 million duplicates into golden records

40%

Average record reduction after first merge purge

What Are Survivorship Rules and Why Do They Matter?

Survivorship rules define the logic for choosing which field values "win" when duplicate records are merged. Without explicit rules, merge operations either destroy valuable data or preserve incorrect data.

Most Recent

- Logic: The value from the most recently updated record wins.

- Best Used For: Dates, addresses, phone numbers, email addresses

Most Complete

- Logic: The longest or most-populated value wins.

- Best Used For: Names ("Robert J. Smith" beats "R. Smith"), addresses with full suite/unit

Source Priority

- Logic: Values from a designated "authoritative" system win regardless of recency or completeness.

- Best Used For: Identifiers from system of record (ERP for financial data, HRIS for employee data)

Aggregate

- Logic: Values from all duplicate records are combined (e.g., all email addresses preserved).

- Best Used For: Multi-value fields: email addresses, phone numbers, tags, categories

Manual Override

- Logic: A human reviewer selects the correct value from among the duplicates.

- Best Used For: Edge cases where no automated rule produces a reliable result

MatchLogic lets you configure survivorship rules per field: names use the longest value, dates use the most recent, addresses use the most complete, and source priority determines which system wins for identifiers.

How Should You Approach Deduplication for Specific Systems?

CRM Deduplication (Salesforce, HubSpot, Dynamics 365)

CRM systems are the most common deduplication target because they accumulate duplicates rapidly from web forms, imports, manual entry, and integrations. A typical Salesforce instance with 500,000 records contains 15–30% duplicates. The key challenge is that CRM records have business logic attached: opportunities, activities, cases, and campaign memberships are all linked to the contact or account record. Merging CRM duplicates without preserving these relationships destroys operational data. For CRM-specific strategies, see our [INTERNAL LINK: 3E, deduplication for CRM guide].

Mailing List Deduplication (Merge Purge)

Direct mail and email marketing lists require deduplication to eliminate wasted spend and avoid sending multiple communications to the same person. The industry term for this process is "merge purge": merge records from multiple lists into a single file, then purge the duplicates. A healthcare nonprofit running merge purge on its 200,000-record mailing list eliminated 60,000 duplicates and cut direct mail costs by 34% in the first quarter (Beacon Health Partners, MatchLogic customer). See our [INTERNAL LINK: 3C, merge purge guide] for the complete process.

“Merge purge eliminated 60,000 duplicate records from our mailing list. Cut direct mail costs by 34% in the first quarter.”

— Sarah Caldwell, VP Marketing Operations, Beacon Health Partners

34% cost reduction in first quarter

Database and Warehouse Deduplication

Data warehouses and lakes accumulate duplicates from upstream source systems. Deduplicating at the warehouse level ensures that analytics, BI dashboards, and ML models operate on clean data. The challenge is scale: warehouse-level dedup may involve tens of millions of records across hundreds of tables. [INTERNAL LINK: 1I, Data matching software] designed for enterprise scale handles these volumes without performance degradation.

How Should You Evaluate Deduplication Software?

Not all deduplication tools are built for enterprise complexity. When evaluating [INTERNAL LINK: 3A, dedupe software options], assess these criteria:

Matching Flexibility

- What to Assess: Does it support deterministic, probabilistic, fuzzy, and hybrid matching? Can you configure rules per entity type?

- Why It Matters: Different data types require different matching approaches. One-size-fits-all tools miss nuanced duplicates.

Survivorship Control

- What to Assess: Can you configure survivorship rules per field? Can you preview merge results before executing?

- Why It Matters: Incorrect merges destroy data. Preview and per-field control prevent costly mistakes.

Scale

- What to Assess: Can it process 10M+ records? What throughput? Does accuracy degrade at volume?

- Why It Matters: Enterprise datasets are large and growing. Performance must be predictable.

Automation

- What to Assess: Can it run scheduled or event-triggered dedup? API support for pipeline integration?

- Why It Matters: One-time dedup is wasted if duplicates re-accumulate. Ongoing automation is essential.

Audit Trail

- What to Assess: Does it log every merge decision with before/after snapshots? Can you reverse a merge?

- Why It Matters: Compliance requires documented evidence. Reversibility provides a safety net.

Deployment

- What to Assess: On-premise, cloud, or hybrid? Data residency compliance?

- Why It Matters: Regulated industries require on-premise processing for PII and PHI.

What Are the Best Practices for Enterprise Deduplication?

Profile Before You Deduplicate

Run data profiling on every dataset before configuring match rules. Profiling reveals the actual duplicate rate, format variations, and completeness gaps. Configuring match rules without profiling is guessing.

Start with High-Confidence Auto-Merge, Then Expand

Set your initial match threshold conservatively high to auto-merge only obvious duplicates (exact email + exact last name, for example). Review the results. Then gradually lower the threshold and add fuzzy matching rules to catch more nuanced duplicates. This approach minimizes false positive risk while building confidence in the system.

Never Deduplicate Without Survivorship Rules

Deleting duplicate records without defining which values survive is data destruction. Always configure field-level survivorship rules before executing any merge. Always preview merge results before committing. MatchLogic shows before/after comparisons for every merge, letting you validate quality before any data moves.

“Matched 1.8 million records across three systems with under 2% false positives. Finally have a single source of truth we actually trust.”

— Robert Tanaka, Director of Data Operations, Summit Financial Group

1.8M records matched with <2% false positives

Automate Ongoing Deduplication

A one-time dedup project is a depreciating asset. Within six months, new records re-introduce duplicates at the same rate. Embed deduplication into your data pipelines: check every new record against existing data at the point of entry, and run batch matching on a weekly or monthly cadence to catch drift.

Measure and Monitor

Track your duplicate rate over time. If it rises after initial cleanup, your prevention mechanisms are insufficient. Key metrics: duplicate rate (percentage of total records), merge rate (records merged per batch), precision (percentage of merges that were correct), and time-to-golden-record (from duplicate identification to merged output).

Eliminating Duplicates Is the First Step to Trustworthy Data

Duplicate records are the most visible symptom of fragmented enterprise data, and they are the most actionable to fix. The deduplication process (profile, match, review, merge) is well-established, and the technology exists to execute it at enterprise scale with full transparency and auditability.

The critical success factor is treating deduplication as an ongoing discipline, not a one-time project. Automated matching on ingest, scheduled batch scans, survivorship rules that preserve your best data, and continuous monitoring of duplicate rates keep your data clean permanently.

MatchLogic provides the on-premise infrastructure for enterprise deduplication: profiling that reveals your actual duplicate rate in seconds, matching that identifies duplicates across millions of records, survivorship rules configured per field, and audit trails that document every merge decision. For organizations where data residency and compliance are non-negotiable, the platform processes everything within your secured environment.

Frequently Asked Questions

What is data deduplication and how does it differ from storage deduplication?

Record-level data deduplication identifies and merges duplicate business records (customers, vendors, products) within databases and CRMs. Storage deduplication eliminates redundant data blocks in backup and storage systems. They solve different problems: record dedup improves data quality and business operations; storage dedup reduces disk consumption and backup costs. This guide focuses on record-level deduplication.

How many duplicates does a typical enterprise dataset contain?

Most enterprises discover 25–35% duplicate records in their first deduplication scan. The rate varies by industry and data entry practices. Organizations with multiple data entry channels, frequent system migrations, or manual data entry tend to have higher duplicate rates. CRM systems average 15–30% duplicates.

What are survivorship rules in data deduplication?

Survivorship rules define which field values are preserved when duplicate records are merged into a golden record. Common rules include: most recent value wins (for dates and addresses), most complete value wins (for names), source priority (for identifiers from authoritative systems), and aggregate (for multi-value fields like email addresses). Without explicit survivorship rules, merges either destroy good data or preserve incorrect data.

Can data deduplication run on-premise for regulated industries?

Yes. On-premise deduplication platforms process all data within your secured infrastructure. MatchLogic is built for on-premise deployment, ensuring PII, PHI, and regulated financial data never leave your network. All match decisions, merge operations, and audit trails are generated and stored locally.

How do you prevent duplicates from re-accumulating after cleanup?

Implement automated matching at the point of data entry so every new record is checked against existing data before it is created. Run scheduled batch matching (weekly or monthly) to catch duplicates that slip through real-time checks. Monitor your duplicate rate as a KPI and investigate any upward trend immediately.

What is the ROI of enterprise deduplication?

ROI varies by industry and data volume, but measurable returns include: reduced marketing waste (15–25% of spend on duplicates eliminated), prevention of duplicate vendor payments ($1.9M average savings per MatchLogic customer benchmarks), improved sales efficiency (eliminating 27% time waste on bad data), and reduced compliance risk. Most enterprises achieve positive ROI within the first quarter of implementation.

.svg)